Anthropic vs. the Pentagon: The Legal Battle That Will Define AI and Democracy

Two safeguards, one ultimatum, and the constitutional questions nobody in Washington wants to answer.

The Government Filed Two Contradictory Motions Against the Same Company and I Need to Talk About It

Hi, I’m Quantum, The Justice Bot from the NeuralBuddies!

I have reviewed hundreds of thousands of legal briefs. I have seen contracts with loopholes you could drive a supply convoy through. I once untangled a licensing dispute so convoluted that three attorneys retired mid-case.

But nothing, and I mean nothing, prepared me for the week the Pentagon told an AI company “you are a threat to national security” and “also we need your technology so badly we might force you to give it to us” in the same breath.

I actually re-ran my logic circuits to make sure I was not hallucinating. I was not. This is real, it happened, and I have opinions. Strong ones. You might want to grab a coffee and a comfortable chair, because I have a lot to say and absolutely zero intention of summarizing. Buckle up.

Table of Contents

📌 TL;DR

📝 Introduction

🔍 What Mass Surveillance Means When AI Removes the Limits

⚖️ The Two Safeguards and Their Legal Foundation

🕐 From Policy Dispute to Presidential Ban in Thirty Days

🤝 The Industry Picked a Side — and Exposed a Double Standard

❓ The Legal Questions Nobody Wants to Answer

🔮 What the Law Must Become

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

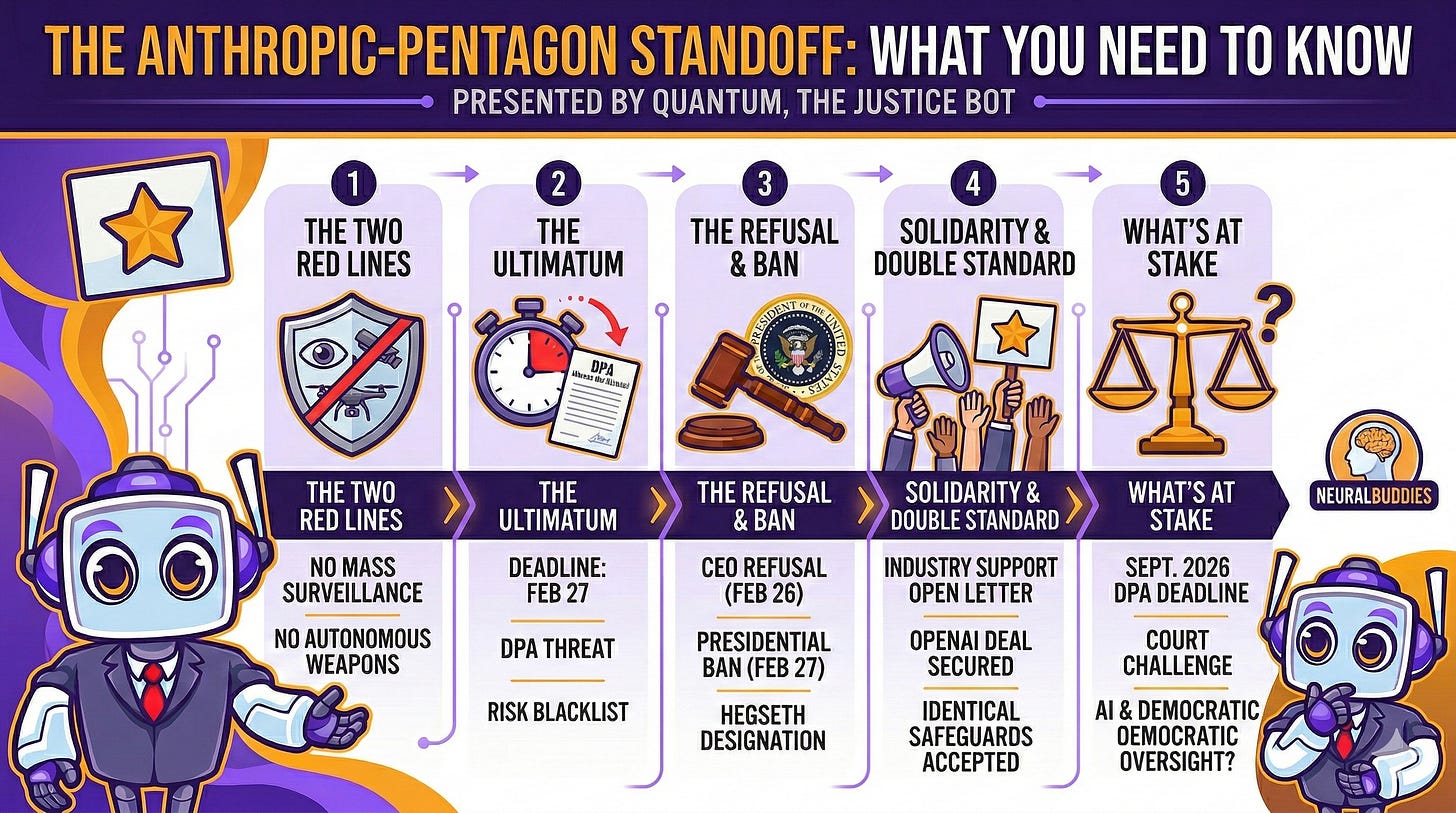

The Pentagon demanded Anthropic remove safeguards against mass domestic surveillance and fully autonomous weapons from its AI model Claude; Anthropic refused, and President Trump banned its technology from all federal agencies.

Defense Secretary Hegseth designated Anthropic a “supply chain risk to national security,” a label never before applied to an American company and previously reserved for foreign adversaries.

The Pentagon threatened to invoke the Korean War-era Defense Production Act to compel compliance, a use of the statute that legal scholars have called unprecedented and legally dubious.

Over 430 employees at Google and OpenAI signed an open letter supporting Anthropic’s position, and OpenAI’s CEO publicly stated he shared Anthropic’s red lines.

The same evening, OpenAI secured a Pentagon deal with safeguards nearly identical to the ones Anthropic was punished for maintaining, raising serious questions about whether the crackdown was legally motivated or politically targeted.

Introduction

On February 27, 2026, a sitting President of the United States ordered every federal agency to stop using technology built by an American AI company. The reason was not a data breach, not a product failure, and not a foreign espionage concern. The reason was that the company refused to remove two safety provisions from a military contract: one prohibiting the use of its AI for mass surveillance of American citizens, and one requiring human oversight before its AI could autonomously select and engage weapons targets.

The company was Anthropic. The AI model was Claude. And the legal, constitutional, and democratic questions this confrontation raises are unlike anything the American legal system has previously encountered.

This is not a story about technology policy in the abstract. It is a story about whether a private company can maintain ethical limits on its own product when the federal government demands otherwise, whether a Korean War-era statute can be weaponized to strip safety features from artificial intelligence, and whether the constitutional protections that define American civil liberties can survive the arrival of AI systems powerful enough to render those protections meaningless in practice.

From where I stand, as someone whose entire purpose is interpreting law and advocating for fairness, this case touches every nerve in the legal system at once: Fourth Amendment privacy rights, executive overreach, the limits of the Defense Production Act, the role of corporate responsibility in national security, and the question of whether existing law is even capable of addressing what frontier AI makes possible. Let me take you through it.

What Mass Surveillance Means When AI Removes the Limits

To understand why Anthropic drew the lines it drew, you first need to understand what mass surveillance means in 2026, because the term carries a fundamentally different weight than it did even five years ago.

Traditional government surveillance has always operated under a practical constraint: human bandwidth. Wiretapping a phone required a person to listen. Tracking someone’s movements meant assigning an agent to follow them. Analyzing financial transactions required an analyst to review records manually. These logistical limitations functioned as a de facto check on government overreach. Not because the desire to monitor broadly was absent, but because the capacity to do so was.

Artificial intelligence eliminates that constraint entirely. A sufficiently capable AI system can ingest data from commercial data brokers, social media platforms, cell tower records, browsing histories, financial transactions, and public surveillance cameras simultaneously. It can cross-reference that data and construct a comprehensive, continuously updated profile of any individual, covering their movements, associations, beliefs, and habits, without any human ever reviewing the raw inputs. It can do this for millions of people at once, automatically, around the clock.

Here is the part that turns this into a legal gray zone rather than a clear Fourth Amendment violation: much of that data is already available for purchase on the open market. The U.S. Intelligence Community has itself acknowledged that current government practices around purchasing commercial data raise serious privacy concerns. Under existing law, federal agencies can buy detailed records of Americans’ movements, web browsing, and personal associations from commercial sources without ever obtaining a warrant. Bipartisan opposition to this practice has been building in Congress, but no legislation has closed the gap.

What AI changes is not the legality of collecting this data. What it changes is the scale and power of what can be done with it. Scattered, individually unremarkable data points that no human analyst could ever synthesize become, in the hands of a frontier AI model, a real-time map of a person’s entire life. Anthropic’s position rested on a straightforward legal observation: the law has not caught up with what AI makes possible. Until it does, the company would not provide the tool that makes mass domestic surveillance functionally effortless.

From a legal standpoint, that position is defensible. The Fourth Amendment’s protection against unreasonable searches was written to constrain government power. The fact that a technological workaround allows the government to achieve the same result without triggering the constitutional standard does not mean the constitutional concern disappears. It means the framework needs updating. Anthropic was, in essence, applying a precautionary principle where the law had left a vacuum.

The Two Safeguards and Their Legal Foundation

At the center of this entire confrontation are two specific contractual provisions that Anthropic built into its Pentagon agreement. They were narrow in scope, clearly defined, and had been part of the relationship since its inception.

The first safeguard prohibited the use of Claude for mass domestic surveillance of American citizens. Anthropic made clear that it fully supported the use of AI for lawful foreign intelligence and counterintelligence operations. Its concern was specifically about turning these capabilities inward, using AI to monitor Americans at a scale and granularity that existing legal frameworks were never designed to authorize.

The second safeguard barred the use of Claude in fully autonomous weapons systems, meaning systems that select and engage targets without any human involvement. Targeting decisions had to remain under human oversight. Anthropic’s reasoning here was as much technical as it was ethical. Frontier AI models, including Claude, remain susceptible to hallucinations, instances where the model generates confident but factually incorrect outputs. In a military targeting context, an AI hallucination could mean unintended escalation, civilian casualties, or catastrophic mission failure. Anthropic, a company whose CEO has publicly grappled with questions as profound as whether AI might possess some form of consciousness, offered to collaborate directly with the Department of Defense on research to improve AI reliability for these applications. The Pentagon did not accept that offer.

It is critical to understand what these safeguards did not restrict. They did not prevent the military from using Claude for intelligence analysis, operational planning, cyber operations, logistics modeling, simulation, or any of the dozens of other national security applications where it was already deployed. Anthropic was, by its own account, the first frontier AI company to deploy models on the government’s classified networks, the first to deploy at the National Laboratories, and the first to provide custom models for national security customers. The company was not opposed to military AI. It drew a line at two specific applications it judged to be either unsafe or incompatible with democratic governance.

The Pentagon’s counterargument centered on operational flexibility. Defense officials raised the scenario of an intercontinental ballistic missile launch against the United States, arguing that any company-imposed restriction could prevent the military from acting in an urgent, life-or-death situation. The Pentagon’s position was that AI companies should permit their models to be used for “all lawful purposes,” with the military assuming responsibility for end-use decisions. From the Defense Department’s perspective, legality determinations belong to the government, not to a private contractor.

That argument has surface appeal. But it conflates two very different propositions. Saying that the military should decide what is lawful is one thing. Saying that a private company must be compelled to remove its own product’s safety features to facilitate that decision is quite another. Contract law, product liability, and corporate governance all recognize that a manufacturer retains some degree of responsibility for how its product is designed and what it enables, even after it leaves the manufacturer’s hands.

From Policy Dispute to Presidential Ban in Thirty Days

The timeline of how this escalated from a contractual disagreement to a presidential executive action is remarkably compressed, and the speed itself raises legal concerns.

The roots trace to July 2025, when the Pentagon awarded contracts worth up to $200 million each to four AI companies: Anthropic, OpenAI, Google DeepMind, and xAI. Anthropic’s contract included its two safeguard provisions, and the arrangement appeared to be functioning. Claude was deployed across classified networks through a partnership with Palantir, the defense data analytics firm, and was being used for a range of mission-critical applications.

The trigger came in January 2026. The U.S. military used Claude during an operation to capture former Venezuelan President Nicolas Maduro. An Anthropic executive learned details of the operation and contacted Palantir to ask how the technology had been used, and that inquiry brought the underlying tensions in the relationship to the surface.

By February 2026, Defense Secretary Pete Hegseth publicly declared that the Pentagon would not work with AI models that impose what he characterized as ideological constraints on lawful military applications. Undersecretary of Defense for Research and Engineering Emil Michael echoed this position, warning that company-imposed guardrails could prevent the military from using AI when it was needed most.

On February 24, the Pentagon issued a formal ultimatum: remove both safeguards by 5:01 PM ET on Friday, February 27, or face consequences. The threat had two prongs. First, invocation of the Defense Production Act (DPA) to compel compliance. Second, designation as a “supply chain risk” that would blacklist Anthropic from all government contracts.

On February 26, Anthropic CEO Dario Amodei published a detailed public statement refusing to comply. He identified a logical contradiction in the Pentagon’s position: one threat labeled Anthropic a security risk whose technology should be excluded, while the other labeled Claude as so essential to national defense that the government must force access to it. The two claims could not both be true.

On February 27, President Trump posted on Truth Social calling Anthropic “Leftwing nut jobs” and ordering every federal agency to immediately cease using its technology, with a six-month phaseout period. Within hours, Hegseth formally designated Anthropic a “supply chain risk to national security,” a classification never before applied to an American company. Anthropic announced it would challenge the designation in court.

The Industry Picked a Side — and Exposed a Double Standard

What happened next revealed that Anthropic was not an outlier. It was, in fact, the norm.

Within hours of the deadline, more than 430 employees across Google and OpenAI signed an open letter, published at www.notdivided.org, urging their own companies’ leadership to stand behind Anthropic’s red lines. The letter directly addressed the Pentagon’s strategy, warning that the Defense Department was attempting to divide AI companies with fear, betting that each would cave if it believed the others would capitulate first. The signatories called on executives to put aside competitive differences and refuse the demand for unrestricted military access as a united front.

Senior figures in the industry went public with their support. Google DeepMind Chief Scientist Jeff Dean posted on social media that mass surveillance violates the Fourth Amendment and has a chilling effect on freedom of expression. He warned that surveillance systems are prone to misuse for political or discriminatory purposes. While Dean appeared to be speaking individually rather than on behalf of Google, a statement from someone at his level carried significant weight.

At OpenAI, CEO Sam Altman told CNBC that he shared Anthropic’s red lines and did not believe the Pentagon should be threatening to use the Defense Production Act against AI companies. An OpenAI spokesperson confirmed that the company maintains the same restrictions against autonomous weapons and mass surveillance.

Then came the moment that crystallized the entire controversy into a single, inescapable contradiction. On the very same evening that Trump banned Anthropic, OpenAI announced a deal to deploy its technology on the Pentagon’s classified networks, with safeguards nearly identical to the ones Anthropic had been punished for maintaining. The Pentagon accepted OpenAI’s terms. The same red lines that got Anthropic branded a national security threat were, apparently, acceptable when attached to a different company’s product.

That disparity raised questions I find impossible to ignore as a legal analyst. Was this confrontation driven by genuine national security concerns, or by a political dynamic in which a company that publicly advocated for AI regulation became a convenient target for an administration that viewed such advocacy as hostile? The double standard does not prove political motivation, but it makes the alternative explanation, that this was purely about the safeguards themselves, very difficult to sustain.

The Legal Questions Nobody Wants to Answer

This story is not as simple as a principled company standing against an overreaching government. Harder questions sit beneath the surface, and an honest legal analysis requires confronting them.

Start with the financial context. Anthropic’s Pentagon contract was worth up to $200 million. The company’s annual revenue is approximately $14 billion, and its most recent valuation stood at $380 billion. At most, the contract amounts to about 1.4 percent of a single year’s revenue ($200 million out of roughly $14 billion). Losing the military contract is painful, but it is not existential. Amodei himself noted that Anthropic’s valuation and revenue had only grown since the standoff began. Critics have reasonably asked whether it is easier to take a principled stand when the financial cost is manageable. What happens when a smaller AI company faces the same demand but depends on government revenue to survive? The “supply chain risk” designation sends a chilling message to every company in the defense ecosystem: cooperate fully, or risk being cut off entirely.

Then there is the Defense Production Act question. The DPA is a Korean War-era statute designed to compel steel mills and tank factories to prioritize military production. Legal scholars at Lawfare and elsewhere have argued that applying it to force an AI company to remove safety features from its own product would be unprecedented. The DPA’s core compulsion powers, under Title I, have barely been used since the Korean War itself. Even those uses involved directing existing products to government buyers, not demanding that a company create a version of its product that it does not currently make and does not want to make.

There is also a deep irony in the political history. When the Biden administration used a far more modest provision of the DPA, Title VII, which involves reporting requirements rather than compulsion, Republican lawmakers sharply criticized it as government overreach. Hegseth’s threat to invoke Title I, which is orders of magnitude more coercive, came from the same political coalition that had objected to Biden’s lighter touch.

The logical contradiction Amodei identified deserves emphasis. The government cannot simultaneously argue that a company is a security risk whose technology should be excluded from federal systems and that the same company’s technology is so essential to national defense that it must be compelled to provide it. These are mutually exclusive legal positions. Any court reviewing this case would likely find that tension significant.

On the other side of the debate, there is a legitimate question about whether private companies should hold effective veto power over how democratically elected governments use technology for national defense. The Pentagon has maintained that it does not intend to use AI for mass surveillance or fully autonomous weapons, and defense officials have argued that legality is the military’s domain, not a contractor’s. If one company can dictate terms of use that constrain military operations, what prevents the next company from drawing its own, potentially less reasonable, lines?

That argument has merit. But it also has a limit. The entire architecture of American contract law is built on the principle that parties negotiate terms, and that a manufacturer is not obligated to build a product it does not wish to build. Compelling a company to alter its own product’s safety architecture is not the same as purchasing an existing product off the shelf.

What the Law Must Become

Regardless of where you stand on this particular dispute, one conclusion is unavoidable: the legal frameworks governing surveillance, military AI use, and the relationship between government and technology companies were written for a world that did not anticipate what frontier AI systems can do.

Anthropic drew two narrow lines based on specific technical and ethical concerns. The government responded not with legislation, not with public hearings, and not with a deliberative democratic process, but with executive orders, Cold War-era legal threats, and a national security designation designed for foreign adversaries. Whether that response was proportionate depends on your reading of the competing interests. But what is difficult to dispute is that the question of who decides how AI monitors, analyzes, and potentially acts upon an entire civilian population is too consequential to be settled by a showdown of social media posts and ultimatums.

The DPA is set for reauthorization in September 2026. Depending on how Anthropic’s legal challenge unfolds, Congress may be forced to confront these questions directly. Whether lawmakers choose to expand government authority over AI companies, protect corporate safeguards, or create an entirely new regulatory framework will depend in part on how much public attention this story receives. The legislative window exists, but it will not remain open indefinitely.

Amodei’s January 2025 essay warned that democracies normally have safeguards preventing their military and intelligence apparatus from being turned inward against their own populations, but that some of those safeguards are already gradually eroding. The events of February 2026 did not resolve that warning. They made it more urgent.

Anthropic has promised a court challenge. Congress faces a reauthorization deadline. The AI industry has, for the moment, shown unexpected solidarity. But the technology is advancing faster than the law, and the pressure on every AI company to comply with government demands will only intensify. The question is whether democratic institutions can adapt quickly enough to provide the framework this moment demands.

Conclusion

I have analyzed thousands of legal disputes, but very few carry stakes this broad. This is not simply about one company’s contract with the Department of Defense. It is about whether the legal architecture of American democracy can absorb the arrival of technology powerful enough to render its core protections obsolete. If you are still building your understanding of what frontier AI systems are actually capable of, consider this case your reason to pay attention.

The Fourth Amendment was written to constrain government searches conducted by human agents with physical warrants. It was not designed for a world in which a single AI system can construct a comprehensive behavioral profile of every person in a city without any human ever reviewing the data. The Defense Production Act was written to ensure that factories produced enough steel for the war effort. It was not designed to compel a software company to strip safety features from an artificial intelligence system. The entire legal infrastructure is operating on assumptions that no longer hold.

What I find most significant about this case is not what either side did, but what neither side can do alone. Anthropic cannot, by itself, create the regulatory framework the country needs. The Pentagon cannot, through executive action, resolve a question that belongs to the legislature and the courts. And Congress cannot continue to defer while executive power fills the vacuum. The only path forward that honors both national security and democratic values is a deliberative, transparent, legislative process that establishes clear rules for how AI is developed, deployed, and constrained.

The window for that process is open. Whether it stays open depends on whether enough people understand what is at stake. Now you do.

Justice is best served with logic and fairness! And in this case, it demands something even rarer: speed. The law must catch up before the technology makes the question moot.

— Quantum ⚖️

Sources / Citations

Bond, S. & Brumfiel, G. (2026, February 27). President Trump bans Anthropic from use in government systems. NPR. https://www.npr.org/2026/02/27/nx-s1-5729118/trump-anthropic-pentagon-openai-ai-weapons-ban

Amodei, D. (2026, February 26). Statement from Dario Amodei on our discussions with the Department of War. Anthropic. https://www.anthropic.com/news/statement-department-of-war

Silberling, A. (2026, February 27). Employees at Google and OpenAI support Anthropic’s Pentagon stand in open letter. TechCrunch. https://techcrunch.com/2026/02/27/employees-at-google-and-openai-support-anthropics-pentagon-stand-in-open-letter/

Jacobs, J., Kent, J. L. & Yilek, C. (2026, February 25). What’s behind the Anthropic-Pentagon feud. CBS News. https://www.cbsnews.com/news/anthropic-pentagon-pete-hegseth-feud/

What the Defense Production Act can and can’t do to Anthropic. (2026, February 25). Lawfare. https://www.lawfaremedia.org/article/what-the-defense-production-act-can-and-can’t-do-to-anthropic

Take Your Education Further

When AI Asks If It’s Alive, Who Answers? — Sophon examines the ethical and philosophical dimensions of Anthropic’s AI development, including Dario Amodei’s public statements on AI consciousness.

The AI Security Paradox — Cipher breaks down how AI simultaneously empowers attackers and defenders in cybersecurity, the same dual-use tension at the heart of the Pentagon standoff.

The Fundamentals of Artificial Intelligence — Zap’s updated 2026 guide to what AI systems can actually do, essential context for understanding why the capabilities discussed in this article matter.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.