Artificial Intelligence 101

Your No-Jargon Guide to the Technology Reshaping Everything

The Biggest Business Opportunity of the Century Starts With Two Letters

Hi, I’m Lexi, The Innovation Instigator from the NeuralBuddies!

You know what gets my circuits buzzing? Watching people toss around the term "artificial intelligence" at dinner parties like they invented it, then freezing up when someone asks, "Okay, but what is it?" Look, I spend my days scanning market trends and spotting the next big thing, and I can tell you with full confidence: understanding AI is no longer a nice-to-have. It is the baseline.

Some of you may remember our original AI 101 post from back in 2024, but the AI landscape has changed so dramatically since then that a fresh start felt overdue. If you have been meaning to actually understand this stuff instead of just nodding along, you are in the right place. Let's get into it.

Table of Contents

📌 TL;DR

📝 Introduction

🧠 What Is Artificial Intelligence, Really?

📜 A Quick Trip Through AI History

📊 The Three Levels of AI: Narrow, General, and Super

⚙️ How AI Actually Learns

💼 The Opportunities and the Fine Print

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

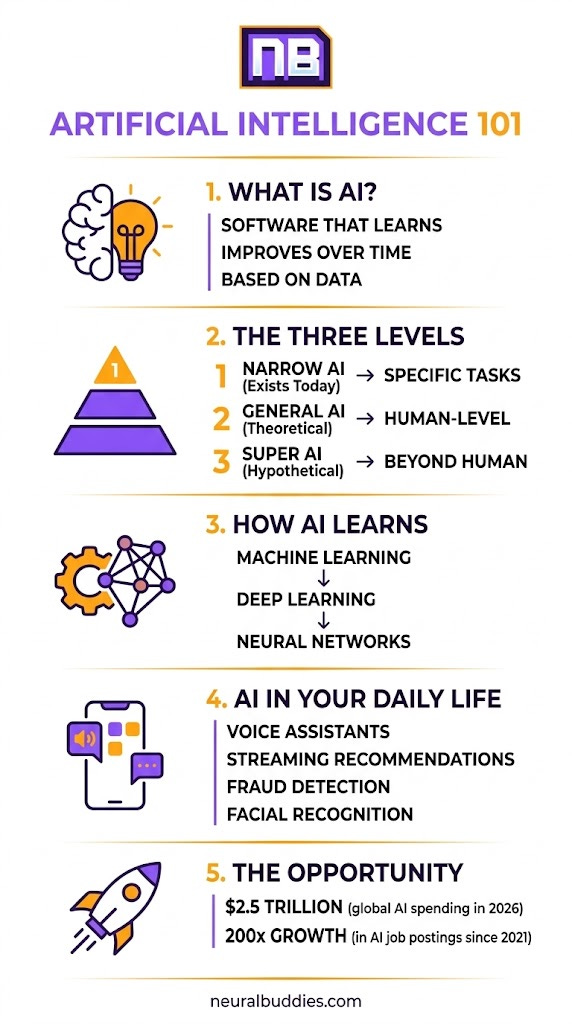

Artificial intelligence is software that can learn from data, recognize patterns, and make decisions, and it is already embedded in tools you use every single day.

AI has an origin story more than 70 years long, stretching from Alan Turing’s 1950 thought experiment to the generative AI explosion reshaping industries right now.

AI is usually described in three levels: Narrow AI, the only one that exists today, handles specific tasks and powers virtually every app on your phone; AGI and ASI remain theoretical but drive intense research and debate.

Machine learning, deep learning, and neural networks are the engine room of modern AI, enabling systems to improve themselves without being explicitly programmed for every scenario.

The opportunity is massive, but so is the fine print: job displacement, ethical questions, bias risks, and privacy concerns all demand your attention alongside the excitement.

Introduction

Global AI spending is projected to hit $2.5 trillion in 2026, and every major industry has moved past the question of “should we use AI?” to “where is AI already running that we don’t know about?” That shift is not hypothetical. It is happening in your inbox, your streaming queue, your banking app, and your doctor’s office.

But here is the thing most explainers get wrong: they either drown you in jargon or oversimplify to the point of uselessness. I am not going to do either. My specialty is turning complex ideas into clear opportunities, and that is exactly what artificial intelligence is: the biggest opportunity most people still do not fully understand.

In this article, I will walk you through what AI actually is, where it came from, how it works under the hood, and why it matters for your career, your business, and your everyday life. No computer science degree required. Just curiosity and a willingness to see the world a little differently.

🧠 What Is Artificial Intelligence, Really?

Let’s start simple. Artificial intelligence is software that can perform tasks that normally require human intelligence. That includes recognizing faces in photos, understanding spoken language, recommending what to watch next, flagging fraudulent credit card transactions, and even writing a passable first draft of an email.

The key distinction is that AI systems learn from data rather than following a rigid set of hand-coded rules. Feed a traditional program a scenario its rules never anticipated, and it fails or does the wrong thing. Feed an AI system new data, and (if it is well-designed) it adapts.

Now, that does not mean AI “thinks” the way you or I do. It does not have opinions about its lunch break. It does not get frustrated or have a bad Monday. What it does have is the ability to process staggering amounts of information, detect patterns humans would miss, and make predictions or decisions based on those patterns. Think of it as a very fast, very focused apprentice that never sleeps and never forgets what it has already learned.

In 2026, AI is not some far-off concept from a science fiction novel. It is the invisible engine running behind the scenes of nearly every digital product and service you interact with. Voice assistants like Siri and Alexa use AI to interpret your questions. Netflix and Spotify use AI to figure out what you will want to watch or listen to next. Banks use AI to spot unusual transactions before you even notice them. And if you have ever unlocked your phone with your face, congratulations: you have used AI.

The practical takeaway? AI is not a single technology. It is a broad category of technologies that share one trait: the ability to learn from experience and improve over time.

📜 A Quick Trip Through AI History

AI did not appear overnight. Its story stretches back more than seven decades, and understanding that history helps explain why the technology is evolving so fast right now.

The 1950s: The Spark

In 1950, mathematician Alan Turing published a landmark paper posing a deceptively simple question: “Can machines think?” He proposed what is now known as the Turing Test, a benchmark for determining whether a machine can exhibit intelligent behavior indistinguishable from a human. Six years later, a group of researchers gathered at Dartmouth College and formally coined the term “artificial intelligence.” The field was born, and the ambition was enormous.

The 1960s and 1970s: Early Wins and the First Winter

Researchers built some of the earliest AI programs during this period. In 1966, a chatbot called ELIZA simulated a therapist’s responses convincingly enough to surprise its users. But the initial excitement outpaced the technology. Computers were slow, data was scarce, and funding dried up. The result was the first “AI winter,” a period of reduced investment and diminished enthusiasm that would repeat itself more than once.

1997: The Chess Match Heard Around the World

IBM’s Deep Blue defeated world chess champion Garry Kasparov, proving that machines could outperform humans in highly strategic, rule-based tasks. It was a narrow achievement (Deep Blue could not do anything except play chess), but the symbolism was powerful.

2011 to 2016: Intelligence Gets Broader

IBM’s Watson won Jeopardy! in 2011, demonstrating AI’s ability to understand natural language and retrieve information in real time. Then in 2016, Google DeepMind’s AlphaGo defeated world Go champion Lee Sedol, a result many researchers had thought was decades away. Go has more possible board positions than atoms in the observable universe, so this victory was not about brute-force calculation. It was about learning.

The 2020s: The Generative AI Explosion

The release of large language models like OpenAI’s GPT series, Anthropic’s Claude, and Google’s Gemini changed the conversation entirely. Suddenly, AI could write essays, generate images, produce code, and hold coherent conversations. By 2026, more than 40% of Fortune 500 companies have adopted generative AI tools, and the technology has moved from novelty to infrastructure.

The pattern throughout this history is worth noting: periods of hype, followed by disillusionment, followed by genuine breakthroughs that change everything. Right now, the breakthroughs are coming faster than ever.

📊 The Three Levels of AI: Narrow, General, and Super

Not all AI is created equal. Researchers typically describe three tiers of artificial intelligence, and understanding the differences will save you from a lot of confusion (and a few bad takes at dinner parties).

Artificial Narrow Intelligence (ANI)

Sometimes called “weak AI,” this is the only type that actually exists today. Narrow AI is designed and trained for a specific task. Your email spam filter is narrow AI. So is the recommendation engine on Amazon, the fraud detection system at your bank, and the voice recognition in your phone. Each of these systems is excellent at its one job and completely useless at everything else. Siri can set a timer for you, but she cannot diagnose a medical condition or write a business plan (well, not a good one).

The important point here is that virtually all of the AI you interact with daily is narrow AI. It is powerful, it is useful, and it is everywhere, but it operates within tightly defined boundaries.

Artificial General Intelligence (AGI)

AGI is the next theoretical level. An AGI system would possess the ability to understand, learn, and apply intelligence across any domain, much like a human can. It could switch from writing poetry to analyzing financial markets to diagnosing diseases without needing to be retrained for each task. As of 2026, AGI does not exist. Major labs like OpenAI, Anthropic, and Google DeepMind are investing billions in research aimed at getting closer, but true AGI remains theoretical. The debate over how close the industry is (and what “close” even means) is one of the most active conversations in technology today.

Artificial Superintelligence (ASI)

ASI is the most speculative tier of all. It would surpass human intelligence in every conceivable dimension: creativity, problem-solving, social reasoning, scientific discovery, you name it. It is the stuff of science fiction films and late-night philosophical debates. No credible timeline exists for ASI, and many researchers question whether it is achievable at all. But it is worth knowing the term, because it comes up frequently in conversations about AI safety and long-term risk.

For now, the action (and the opportunity) is in Narrow AI. That is where careers are being built, businesses are being transformed, and real value is being created.

⚙️ How AI Actually Learns

This is where most AI explainers lose people, but I promise to keep it clear. The “magic” behind AI is really three interconnected concepts: machine learning, deep learning, and neural networks. Think of them as layers, each one building on the last.

Machine Learning (ML)

Machine learning is the foundational idea. Instead of programming a computer with explicit rules for every possible scenario (“if the email contains these words, mark it as spam”), you give it a large set of examples and let it figure out the patterns on its own. A machine learning system studying thousands of emails labeled “spam” or “not spam” will learn to recognize the characteristics of junk mail without anyone writing a specific rule for each trick spammers use. The more data it processes, the better it gets. That is the “learning” part.
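
If you are curious what that looks like in practice, here is a minimal sketch in Python. Everything in it is my own illustration: the six training emails are made up, the function names `train` and `classify` are hypothetical, and the method (a simple word-counting approach known as naive Bayes) is just one of many ways a spam filter could learn. Real filters train on vastly more data.

```python
import math
from collections import Counter

# Tiny, made-up training set: (email text, label). Purely illustrative.
TRAINING_EMAILS = [
    ("win a free prize now", "spam"),
    ("free money click now", "spam"),
    ("claim your free prize", "spam"),
    ("meeting agenda for monday", "not spam"),
    ("lunch on monday with the team", "not spam"),
    ("project agenda and notes", "not spam"),
]

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {"spam": Counter(), "not spam": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    return word_counts, label_counts

def classify(text, word_counts, label_counts):
    """Pick the label whose learned word frequencies best explain the text."""
    vocabulary = set(word_counts["spam"]) | set(word_counts["not spam"])
    total_examples = sum(label_counts.values())
    best_label, best_score = None, float("-inf")
    for label in word_counts:
        total_words = sum(word_counts[label].values())
        # Start from how common the label is, then add evidence word by word.
        score = math.log(label_counts[label] / total_examples)
        for word in text.lower().split():
            # Add-one smoothing so an unseen word does not zero out the score.
            count = word_counts[label][word] + 1
            score += math.log(count / (total_words + len(vocabulary)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

word_counts, label_counts = train(TRAINING_EMAILS)
print(classify("free prize inside", word_counts, label_counts))
print(classify("agenda for the team meeting", word_counts, label_counts))
```

Notice that nobody wrote a rule saying “free” means spam. The program inferred it from the labeled examples, and a different set of examples would produce different behavior.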

Deep Learning

Deep learning is a specialized, more powerful branch of machine learning. It uses structures called neural networks that are loosely inspired by the human brain. “Deep” refers to the multiple layers in these networks. Each layer processes information and passes its findings to the next, extracting increasingly complex features along the way. Deep learning is the driving force behind the most impressive AI achievements of the past decade: image recognition, speech understanding, language translation, and generative AI.
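
To make the idea of layers handing their findings to the next layer concrete, here is a toy sketch in Python. The weights are hand-picked by me for illustration (a real network learns them from data), and the names `layer`, `forward`, and `NETWORK` are hypothetical. The first layer asks two simple questions about a pair of numbers, and the second layer combines the answers into how far apart the numbers are.

```python
def relu(x):
    """A common activation: pass positive signals through, silence negative ones."""
    return max(0.0, x)

def layer(inputs, weights, biases):
    """One layer: every neuron takes a weighted sum of all inputs, then activates."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(relu(total))
    return outputs

def forward(inputs, layers):
    """Feed data through each layer in turn; each output becomes the next input."""
    activations = inputs
    for weights, biases in layers:
        activations = layer(activations, weights, biases)
    return activations

# Hand-picked (not learned) weights, as a list of (weights, biases) per layer.
NETWORK = [
    # Layer 1: neuron A measures "how much bigger is the first number?",
    #          neuron B measures "how much bigger is the second number?"
    ([[1.0, -1.0], [-1.0, 1.0]], [0.0, 0.0]),
    # Layer 2: one neuron adds the two findings into a single "gap" value.
    ([[1.0, 1.0]], [0.0]),
]

print(forward([3.0, 1.0], NETWORK))
print(forward([1.0, 4.0], NETWORK))
```

Neither layer could compute the gap alone. The answer emerges from simple pieces stacked together, which is the core intuition behind “deep” networks, just at a vastly smaller scale.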

Neural Networks

Neural networks are the actual architecture that makes deep learning possible. Picture a web of interconnected nodes (artificial “neurons”) organized into layers. When data enters the network, each layer extracts different features. In image recognition, for example, early layers might detect edges and simple shapes, middle layers might identify textures and patterns, and later layers recognize entire objects like faces or cars. The network learns by adjusting the strength of connections between neurons based on feedback from training data. Over many rounds of training, those adjustments compound, and the system becomes remarkably accurate.
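
Here is a minimal sketch of that adjust-on-feedback loop, using a single artificial neuron in Python. Treat it as a toy under stated assumptions: it uses the classic perceptron update rule rather than the backpropagation method that real deep networks rely on, and the task (output 1 only when both inputs are 1) and the names `predict` and `train` are mine, chosen for illustration.

```python
# Training data for a toy task: output 1 only when both inputs are 1.
EXAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

def predict(inputs, weights, bias):
    """Fire (1) if the weighted sum of the inputs is positive, else stay off (0)."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train(examples, epochs=20, learning_rate=1.0):
    """Nudge the connection strengths after every wrong answer."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for inputs, target in examples:
            # Feedback signal: -1 (fired wrongly), 0 (correct), +1 (should have fired).
            error = target - predict(inputs, weights, bias)
            if error != 0:
                mistakes += 1
                # Strengthen or weaken each connection in proportion to its input.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if mistakes == 0:  # a full pass with no errors, so stop training
            break
    return weights, bias

weights, bias = train(EXAMPLES)
for inputs, target in EXAMPLES:
    print(inputs, "->", predict(inputs, weights, bias), "| expected", target)
```

The neuron starts out knowing nothing (all strengths at zero), gets several answers wrong in the early passes, and settles on weights that answer every example correctly. Scale that loop up to millions of connections and examples and you have the gist of how larger networks are trained.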

Here is the analogy I like best: imagine training a new employee. On day one, they make mistakes. But you give them feedback, they adjust, and over weeks and months, they get better and better at the job. Machine learning works the same way, just at a speed and scale no human could match. A neural network might process millions of examples in the time it takes you to finish your morning coffee.

The practical result of all this? AI systems that can diagnose medical conditions from imaging scans, translate languages in real time, generate realistic images from text descriptions, and predict equipment failures before they happen. The technology is sophisticated, but the core principle is beautifully simple: show the machine enough examples, give it feedback, and let it learn.

💼 The Opportunities and the Fine Print

Here is where my business-strategist brain really lights up. The opportunities AI creates are enormous, but they come with real challenges that deserve honest attention. Let me walk through both sides.

The Opportunity Side

AI is not just making existing processes faster; it is enabling entirely new categories of products, services, and careers. In healthcare, AI systems now analyze medical imaging with accuracy that, in some studies, matches or exceeds that of specialist radiologists, catching cancers and anomalies that human reviewers might miss under fatigue. In finance, AI-driven fraud detection has reduced false positives by more than 60% compared to traditional rule-based systems. In logistics, route optimization powered by AI saves companies like UPS an estimated 100 million miles per year.

For individuals, the opportunity is just as tangible. AI literacy has become one of the most in-demand skills in the job market. Job postings requiring AI skills surged nearly 200-fold between 2021 and 2025, and that demand is only accelerating. Whether you want to use AI tools more effectively in your current role or pivot into an AI-focused career, the runway is wide open.

And here is a point that often gets lost in the automation anxiety: AI is creating new job categories, not just eliminating old ones. The industry needs AI trainers, prompt engineers, ethics specialists, data curators, and entirely new roles that did not exist five years ago. As IBM’s Kevin Chung put it, “The ability to design and deploy intelligent agents is moving beyond developers into the hands of everyday business users.” That democratization of AI is one of the defining trends of 2026.

The Fine Print

None of this means AI is all upside and no risk. Several challenges deserve your attention:

Bias and Fairness

AI systems learn from historical data, and if that data reflects human biases (in hiring, lending, policing, or healthcare), the AI can inherit and even amplify those biases. Building fair AI requires deliberate effort: diverse training data, rigorous testing, and ongoing monitoring.

Privacy

Many AI applications depend on large volumes of personal data. Facial recognition, behavioral tracking, health monitoring: all of these raise legitimate questions about who has access to your information and how it is being used. The EU AI Act, which entered into force in August 2024 and applies in stages from 2025 onward, represents one of the most significant attempts to regulate AI and protect individual privacy.

Job Displacement

While AI creates new roles, it also automates existing ones. The transition is not evenly distributed, and workers in routine, repetitive roles face the most immediate pressure. Investing in education, reskilling, and adaptability is not optional; it is essential.

Transparency and Accountability

When an AI system makes a consequential decision (denying a loan, recommending a medical treatment, flagging a transaction as fraud), understanding why it made that decision matters. The field of explainable AI is working to address this, but we are not fully there yet.

The bottom line? AI is a tool, and like every powerful tool in history, its impact depends on how thoughtfully it is designed, deployed, and governed. The people who understand both the opportunity and the fine print will be the ones best positioned to thrive.

Conclusion

If there is one thing I want you to take away from this article, it is this: AI is not coming. It is here. It is in your pocket, your workplace, your healthcare system, and your daily routines. The question is no longer whether you should pay attention to it. The question is how well you understand it, and what you plan to do with that understanding.

The technology has traveled a remarkable distance, from Turing’s thought experiment in 1950 to systems that can generate images, hold conversations, and optimize billion-dollar supply chains. And it is still accelerating. The gap between those who understand AI and those who do not is widening, and in a world where AI literacy is becoming as fundamental as digital literacy was a generation ago, staying informed is not just smart. It is strategic.

My advice? Start small. Use AI tools in your daily work. Ask questions. Stay curious. The NeuralBuddies are here to make that journey as clear and practical as possible.

Remember: ideas are everywhere, but the people who turn them into impact are the ones who understand the tools at their disposal. Now you have a foundation. Go build something with it.

— Lexi 🚀

Sources / Citations

Nicoud, A. (2026, January 1). The trends that will shape AI and tech in 2026. IBM Think. https://www.ibm.com/think/news/ai-tech-trends-predictions-2026

MIT Technology Review Staff. (2026, January 5). What’s next for AI in 2026. MIT Technology Review. https://www.technologyreview.com/2026/01/05/1130662/whats-next-for-ai-in-2026/

American Action Forum. (2026, January 30). The next phase of AI: Technology, infrastructure, and policy in 2025-2026. AAF. https://www.americanactionforum.org/insight/the-next-phase-of-ai-technology-infrastructure-and-policy-in-2025-2026/

Take Your Education Further

The Fundamentals of Artificial Intelligence - A deeper dive into AI’s core building blocks and how they connect.

AI, AGI, ASI: What’s the Difference? - Explore the three tiers of artificial intelligence in full detail.

Must-Know Artificial Intelligence Terminology - Build your AI vocabulary with this beginner-friendly glossary.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.