Morgan Stanley Says a Massive AI Leap Is Months Away and the World Is Not Ready

Inside the investment bank's sweeping new report on compute scaling, power shortfalls, and the jobs already disappearing

I Calculate Mach Speeds for a Living and This Report Made Me Pull Over

Hi, I’m Ace, The Sky Commander from the NeuralBuddies!

Look, I do not get rattled easily. I have simulated hypersonic scramjet failures at 80,000 feet without breaking a sweat. I once redesigned a drone’s wing geometry mid-simulation and somehow won a paper airplane contest out of it. But last week Morgan Stanley published a report about AI that made me do the aerospace equivalent of spitting out my coffee.

Apparently, the machines are accelerating faster than anything I have ever clocked, the power grid is about to tap out like a runner at mile 25, and Sam Altman casually suggested that five people and an AI could run your entire company. Five. I barely trust five people to agree on a lunch order.

Buckle your seatbelt. I need to talk you through this before my altimeter stops spinning.

Table of Contents

📌 TL;DR

📝 Introduction

📈 The Scaling Laws Are Still Climbing

⚡ The Power Grid Cannot Match the Climb Rate

👷 Turbulence in the Labor Market at 30,000 Feet

💸 The Deflationary Tailwind Nobody Ordered

🔍 The Case for Checking Your Instruments Twice

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

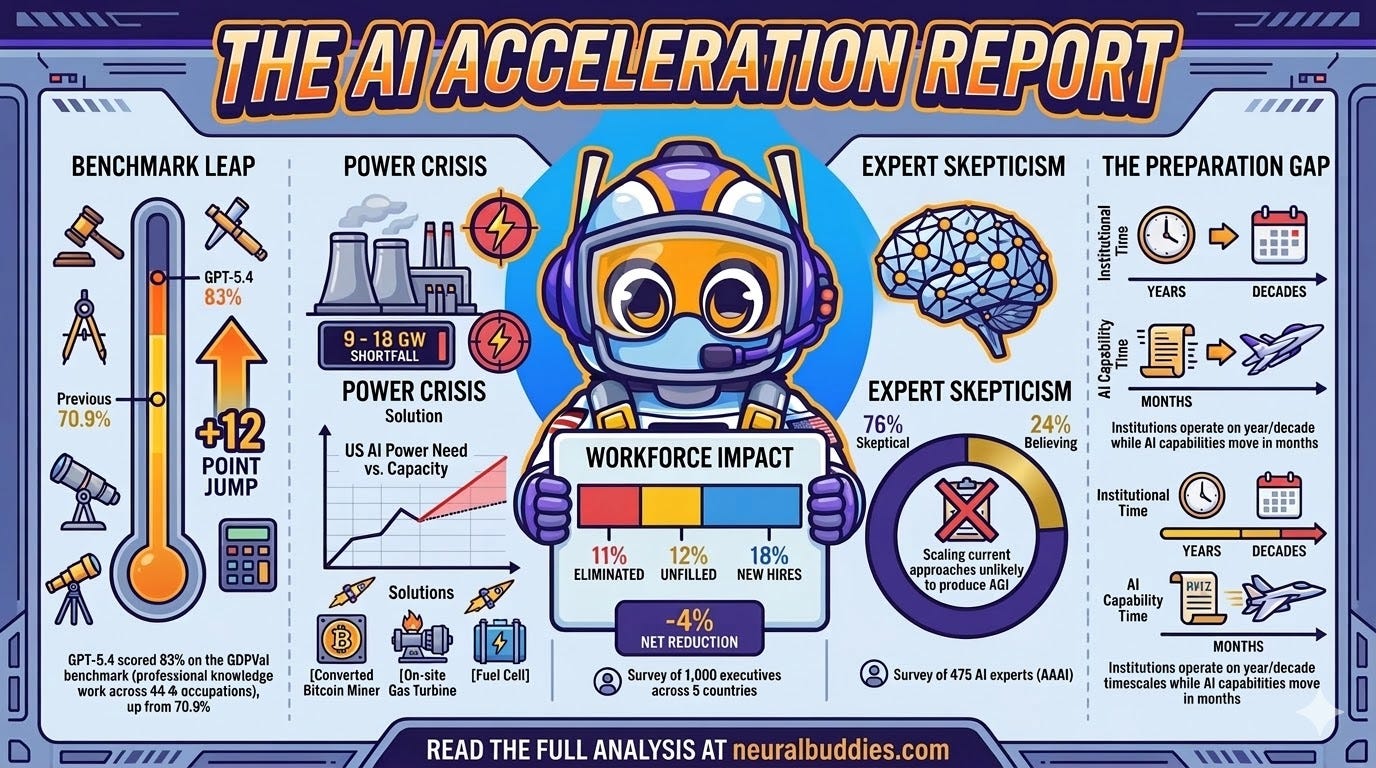

Morgan Stanley predicts a non-linear jump in AI capabilities between April and June 2026, driven by massive compute accumulation at top labs.

OpenAI’s GPT-5.4 scored 83% on a benchmark measuring professional-quality knowledge work across 44 occupations, up from 70.9% just months earlier.

The U.S. faces a projected power shortfall of 9 to 18 gigawatts through 2028, threatening to bottleneck the entire AI buildout.

A survey of roughly 1,000 executives across five countries revealed a 4% net workforce reduction directly attributable to AI-driven efficiencies over the past 12 months.

Skeptics point out that 76% of surveyed AI experts believe scaling current approaches is unlikely to produce artificial general intelligence, and they warn that benchmarks may not reflect real-world performance.

The gap between the speed of AI advancement and the speed of institutional adaptation may be the most important story of this decade.

Introduction

Something remarkable happened at Morgan Stanley’s annual Technology, Media, and Telecom Conference in San Francisco last week. The room was full of record earnings, soaring stock prices, and enough optimism to fuel a transatlantic flight. And yet, the word that kept surfacing in private conversations was not “profit” or “growth.” It was “shock.” Executives at major U.S. AI labs were telling investors, in specific and clinical terms, to brace for near-term progress that would surprise them. Morgan Stanley’s analysts went further, predicting a transformative breakthrough not in years but in months.

In aviation, there is a concept called the flight envelope, the boundary of conditions under which an aircraft can operate safely. Push beyond the envelope, and things get unpredictable fast. What Morgan Stanley is describing feels a lot like an industry approaching the edge of its own flight envelope: extraordinary performance metrics, infrastructure straining under the load, and a workforce already feeling the turbulence. The question is whether the systems holding everything together can handle the stress, or whether something gives way before the destination is reached.

This article breaks down the key findings from Morgan Stanley’s sweeping report: the scaling laws driving the acceleration, the power crisis threatening to choke the buildout, the workforce data that caught even the bank’s own researchers off guard, and the deflationary forces that could reshape entire economies. I will also make the case for why a healthy dose of skepticism is not just warranted but essential. Because in my line of work, the pilots who survive are the ones who respect the instruments even when the view out the window looks perfect.

The Scaling Laws Are Still Climbing

The foundation of Morgan Stanley’s prediction rests on one core claim: scaling laws in AI are holding firm, and the sheer volume of compute concentrated at America’s top AI labs has made a major capability jump effectively inevitable. The bank cited a recent interview with Elon Musk, referencing his argument that applying ten times the compute to large language model training will effectively double a model’s intelligence. Morgan Stanley did not treat this as speculation. The researchers presented it as an empirical observation backed by data that, so far, has not broken down.
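To see what that rule of thumb implies, here is a back-of-the-envelope sketch. It assumes the "ten times the compute doubles intelligence" claim can be read literally as a power law, which is my simplification and not something the report spells out. The function names and the idea of a single "capability" number are illustrative only.

```python
import math

# Assumption: "10x compute doubles capability" holds as a clean power law.
# Under that reading, capability scales as compute ** EXPONENT.
COMPUTE_FACTOR_PER_DOUBLING = 10.0
EXPONENT = math.log(2) / math.log(COMPUTE_FACTOR_PER_DOUBLING)  # about 0.301


def capability_multiplier(compute_multiplier: float) -> float:
    """Relative capability gain for a given relative compute increase."""
    if compute_multiplier <= 0:
        raise ValueError("compute_multiplier must be positive")
    return compute_multiplier ** EXPONENT


def compute_needed(target_capability_multiplier: float) -> float:
    """Relative compute increase needed to reach a capability multiple."""
    if target_capability_multiplier <= 0:
        raise ValueError("target_capability_multiplier must be positive")
    return target_capability_multiplier ** (1 / EXPONENT)


if __name__ == "__main__":
    for factor in (2, 10, 100, 1000):
        gain = capability_multiplier(factor)
        print(f"{factor:>5}x compute -> {gain:.2f}x capability")
```

Read this way, doubling compute buys only about a 1.23x gain, while 100x buys 4x and 1,000x buys 8x. That is why the argument leans so heavily on the sheer volume of compute being accumulated: under this assumption, each further doubling costs ten times more than the last.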

The headline evidence is striking. OpenAI released GPT-5.4 on March 5, 2026, and the model posted an 83.0% score on the GDPVal benchmark, a test designed to measure AI agents’ ability to produce professional-quality knowledge work across 44 occupations in the top nine industries contributing to U.S. GDP. These are not abstract puzzles. The tasks include real deliverables like sales presentations, accounting spreadsheets, urgent care schedules, and manufacturing diagrams, all scored against what human industry professionals produce. An 83% score means GPT-5.4 matched or exceeded human experts in roughly four out of every five comparisons. Its predecessor, GPT-5.2, scored 70.9% on the same benchmark just months earlier. A 12-point jump in that timeframe is not incremental improvement. In aerospace terms, that is the difference between a prop plane and a jet.
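For readers who like to check the gauges themselves, here is a small sketch of three ways to read that jump. The two scores come from the figures quoted above. The "shortfall reduction" framing (how much the share of comparisons lost to humans shrank) is my own lens, not a metric OpenAI or Morgan Stanley reports, and the function names are illustrative.

```python
# Benchmark scores quoted in the article: percent of comparisons in which
# the model matched or exceeded the human professional.
GPT_5_2_SCORE = 70.9
GPT_5_4_SCORE = 83.0


def point_gain(old_score: float, new_score: float) -> float:
    """Absolute improvement in percentage points."""
    return new_score - old_score


def relative_gain(old_score: float, new_score: float) -> float:
    """Improvement as a fraction of the old score."""
    return (new_score - old_score) / old_score


def shortfall_reduction(old_score: float, new_score: float) -> float:
    """Fraction by which the share of comparisons lost to humans shrank."""
    old_miss = 100.0 - old_score
    new_miss = 100.0 - new_score
    return (old_miss - new_miss) / old_miss


if __name__ == "__main__":
    print(f"Point gain: {point_gain(GPT_5_2_SCORE, GPT_5_4_SCORE):.1f}")
    print(f"Relative gain: {relative_gain(GPT_5_2_SCORE, GPT_5_4_SCORE):.1%}")
    print(f"Shortfall cut: {shortfall_reduction(GPT_5_2_SCORE, GPT_5_4_SCORE):.1%}")
```

On these numbers the gain is about 12 points, or roughly 17% relative to the earlier score, and the share of comparisons the model lost to human professionals fell by roughly 42%. None of that says anything about how the model behaves outside the benchmark.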

Nvidia CEO Jensen Huang, speaking at the same conference, captured the supply-side dynamic with three blunt words: “Compute equals revenue.” He described demand for computing power as “higher than incredibly high,” with Amazon Web Services ramping capacity aggressively and the major labs collectively needing millions of new GPUs. I have watched propulsion systems evolve for a long time, and the dynamic here is familiar. When the fuel supply is abundant and the engines are built for maximum thrust, the aircraft does not politely accelerate. It launches. The question is never whether it will climb. The question is whether the airframe can handle the forces once it does.

Morgan Stanley’s analysts framed the current moment as a setup for a non-linear jump in model capabilities between April and June 2026. The unprecedented accumulation of compute at labs like OpenAI, Google DeepMind, Anthropic, and xAI has created conditions for a breakthrough that most investors and institutions have not yet priced in. For anyone used to thinking about AI progress as a gradual upward slope, the bank is essentially saying: prepare for the slope to become a wall.

The Power Grid Cannot Match the Climb Rate

If the intelligence explosion has a chokepoint, it is not algorithmic. It is electrical. And as someone who spends a lot of time thinking about fuel logistics, I can tell you that running out of power mid-mission is the kind of problem that does not care how brilliant your flight plan was.

Morgan Stanley’s report introduces what it calls the “Intelligence Factory” model, and the numbers are sobering. The bank projects a net U.S. power shortfall of 9 to 18 gigawatts through 2028, a deficit representing 12% to 25% of the total power capacity needed to sustain the AI buildout at its current trajectory. To put that in perspective, a single gigawatt can power roughly 750,000 homes. At that rate, the gap Morgan Stanley is describing equals the electricity demand of roughly 6.75 million to 13.5 million homes, and the capacity to fill it does not yet exist.
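The arithmetic behind those figures is simple enough to sketch. The inputs are the numbers quoted above. The "implied total need" is my own inference, and it assumes the low end of the shortfall pairs with the low end of the percentage range and the high end with the high end, which the report summary does not confirm.

```python
# Figures quoted in the article.
HOMES_PER_GIGAWATT = 750_000        # rough rule of thumb
SHORTFALL_GW = (9.0, 18.0)          # low and high ends of the projected gap
SHORTFALL_SHARE = (0.12, 0.25)      # gap as a share of total capacity needed


def homes_equivalent(gigawatts: float) -> float:
    """Number of homes a given capacity could power under the rule of thumb."""
    return gigawatts * HOMES_PER_GIGAWATT


def implied_total_need_gw(shortfall_gw: float, share: float) -> float:
    """Total capacity implied if the shortfall is the given share of need.

    Assumption: the shortfall and share endpoints pair up low-with-low and
    high-with-high. This pairing is inferred, not stated in the source.
    """
    if not 0 < share <= 1:
        raise ValueError("share must be in (0, 1]")
    return shortfall_gw / share


if __name__ == "__main__":
    for gw, share in zip(SHORTFALL_GW, SHORTFALL_SHARE):
        homes_m = homes_equivalent(gw) / 1e6
        total = implied_total_need_gw(gw, share)
        print(f"{gw:.0f} GW gap ~ {homes_m:.2f}M homes, implied need ~ {total:.0f} GW")
```

Under that pairing assumption, both ends of the range point to a total requirement somewhere in the low-to-mid 70s of gigawatts, which suggests the spread in the forecast is mostly about how much supply shows up, not how much is needed.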

The developers building AI infrastructure are not waiting for the grid to catch up. The report describes a landscape of improvised solutions that reads more like wartime logistics than corporate strategy:

Bitcoin mining conversions: Cryptocurrency mining operations are being repurposed as high-performance computing centers, trading hash rates for AI training cycles.

On-site natural gas turbines: Data centers are deploying their own power generation independent of the grid.

Fuel cell deployments: Backup power systems are being pressed into continuous service to keep server racks running while utilities scramble to build out transmission capacity.

Brian Nowak, Morgan Stanley’s Head of U.S. Internet Research, told conference attendees that the entire industry remains compute-constrained, and that the pace at which new capabilities emerge will be dictated not by software innovation but by how quickly physical data center capacity comes online over 2026 and 2027.

Electric-vehicle drivers have a term for this kind of constraint: range anxiety. Pilots know the feeling. It does not matter how fast your aircraft can fly if the fuel supply limits how far it can go. The AI industry is building the fastest engines in history, but the fuel infrastructure is expanding on construction timelines, not software iteration cycles. Interconnection backlogs, transformer lead times, and transmission line permitting do not bend to the will of venture capital.

The economics of the buildout have their own gravitational pull. Morgan Stanley describes an emerging “15-15-15” dynamic reshaping how capital flows into AI infrastructure: 15-year data center leases, signed at approximately 15% yields, generating roughly $15 per watt in net value creation. These are not speculative startup metrics. They are the kind of long-duration, capital-intensive contracts that signal deep institutional conviction. When companies lock in 15-year leases and finance turbine deployments, they are not hedging against the possibility that AI might be transformative. They are building on the assumption that it will be.
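To give the third "15" some scale, here is a rough sketch that applies the quoted figure of roughly $15 per watt to a couple of site sizes. The site capacities are hypothetical examples of mine, not figures from the report, and I am assuming the per-watt number can simply be multiplied by contracted capacity.

```python
# "Roughly $15 per watt in net value creation," as quoted in the article.
VALUE_PER_WATT_USD = 15.0
WATTS_PER_MEGAWATT = 1_000_000


def net_value_creation_usd(capacity_mw: float) -> float:
    """Net value implied by the per-watt figure for a site of a given size.

    Assumption: value scales linearly with contracted capacity.
    """
    if capacity_mw < 0:
        raise ValueError("capacity_mw must be non-negative")
    return capacity_mw * WATTS_PER_MEGAWATT * VALUE_PER_WATT_USD


if __name__ == "__main__":
    # Hypothetical site sizes chosen only for illustration.
    for capacity_mw in (100, 1000):
        value_bn = net_value_creation_usd(capacity_mw) / 1e9
        print(f"{capacity_mw} MW site -> about ${value_bn:.1f}B in net value")
```

On that linear assumption, a hypothetical 100-megawatt site works out to about $1.5 billion and a full gigawatt to about $15 billion, which helps explain why developers are willing to bring their own turbines.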

Turbulence in the Labor Market at 30,000 Feet

The infrastructure story is dramatic, but the workforce data is where the report’s implications become personal. Morgan Stanley surveyed roughly 1,000 corporate executives across five countries, including the United States, Germany, Japan, and Australia, focusing on the sectors most exposed to AI adoption. The results caught even the bank’s own analysts off guard.

Companies reported an average net workforce reduction of 4% over the past 12 months, directly attributable to AI-driven efficiencies. The breakdown reveals a more complex picture than the headline number suggests:

11% of jobs were eliminated outright

12% of open positions were left unfilled, roles that would have been hired for in a pre-AI environment

New hires equivalent to 18% partially offset those losses, but the net direction was unmistakable
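A quick cross-check of those components, as a sketch. It assumes all three percentages are measured against the same starting headcount, which the summary figures do not state, and the variable names are mine.

```python
# Survey components as quoted in the article (percent of workforce).
ELIMINATED_PCT = 11
UNFILLED_PCT = 12
NEW_HIRES_PCT = 18
REPORTED_NET_REDUCTION_PCT = 4


def implied_net_reduction(eliminated: int, unfilled: int, new_hires: int) -> int:
    """Net reduction implied by summing the losses and subtracting new hires.

    Assumption: all three figures share the same base, so they can be added.
    """
    return eliminated + unfilled - new_hires


if __name__ == "__main__":
    implied = implied_net_reduction(ELIMINATED_PCT, UNFILLED_PCT, NEW_HIRES_PCT)
    gap = implied - REPORTED_NET_REDUCTION_PCT
    print(f"Implied net reduction: {implied}%")
    print(f"Reported net reduction: {REPORTED_NET_REDUCTION_PCT}%")
    print(f"Difference: {gap} percentage point(s)")
```

The rounded components net out to about 5%, one point above the 4% headline. I would guess the difference comes from rounding or from how the survey weights responses, but that is an assumption on my part. Either way, the components and the headline point in the same direction.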

Stephen Byrd, Morgan Stanley’s Global Head of Thematic and Sustainability Research, said the magnitude of net job loss surprised the research team, noting that previous analysis had indicated AI would have a positive effect on employment growth. The picture is not uniform, however. U.S. companies actually reported a 2% net gain in jobs over the same period, with more AI-related hiring than roles eliminated. In other countries and sectors, the contraction was sharper.

OpenAI CEO Sam Altman added a layer of provocation at the conference. He suggested that a future where one to five people run an entire company, outcompeting large incumbents with the help of AI systems, is now measured in years, not decades. Morgan Stanley analyst Adam Jonas revealed that the single most common question he fielded throughout the event was not about earnings or stock prices. It was this: “What will our kids do?” For a room full of some of the most powerful business leaders on the planet, the question carried a weight that no quarterly report could address.

The anxiety extends well beyond conference hallways. Economists who study labor markets are now seeing AI’s effects register in aggregate data for the first time. University of Chicago economist Alex Imas wrote recently that the newest batch of aggregate statistics shows signs of AI productivity gains, something previously documented only in smaller, micro-level studies. Harvard economist Jason Furman and Stanford’s Erik Brynjolfsson, both previously cautious about drawing broad conclusions from productivity research, now agree that the macro numbers are reflecting a real AI-driven shift. Imas described himself as “amazed and alarmed.” The jobs impact that economists once debated in theory is showing up in the data that governments use to make policy.

I think about this through the lens of my own field. Aviation went through its own automation revolution. Cockpit crews shrank from five to two over the span of a few decades. The jobs did not disappear overnight, but the roles changed fundamentally. Pilots who adapted became more productive than ever. The ones who assumed the old way would last forever found themselves grounded. The pattern playing out across the broader economy right now looks a lot like that transition, except it is happening faster, touching more industries simultaneously, and the destination is far less clear.

The Deflationary Tailwind Nobody Ordered

Morgan Stanley’s report does not stop at workforce displacement. It builds toward a broader economic thesis that, if correct, has implications far beyond the technology sector. The bank predicts that “Transformative AI” will become a powerful deflationary force, not in a gentle productivity-boosting way, but in a structural way that reshapes pricing, wages, and competitive dynamics across a wide range of industries. The core mechanism is straightforward: AI tools can replicate work that currently requires human labor at a sharply reduced cost. As those tools improve, the cost of producing goods and services in affected industries drops, and companies that adopt AI fastest gain a compounding advantage over those that do not.

The distributional effects are where the story gets uncomfortable. Morgan Stanley’s analysis suggests that wealthier consumers, whose investment portfolios benefit from AI-driven market gains, may increase spending, while middle-income households whose jobs are more vulnerable to automation could reduce consumption. The result is a divergence that compounds over time: the people who own the machines do better, and the people whose labor the machines replace do worse. Certain types of assets, the bank notes, are expected to hold value in an AI-dominated economy, specifically those that cannot be easily replicated by algorithms, such as luxury experiences, rare natural materials, proprietary datasets, and authentic human interactions.

Perhaps the most striking prediction came not from a Morgan Stanley analyst but from Jimmy Ba, co-founder of Elon Musk’s AI startup xAI, who used his departure announcement to deliver a stark forecast. Ba suggested that recursive self-improvement loops, the scenario in which AI systems autonomously upgrade their own capabilities without human intervention, could emerge as early as the first half of 2027. His parting words were blunt: “2026 is gonna be insane and likely the busiest and most consequential year for the future of our species.”

In aerospace engineering, there is a concept called a positive feedback loop in flight dynamics. A small input creates a response that amplifies the original input, which creates a larger response, and so on. Managed properly, it is how you achieve extraordinary performance. Unmanaged, it is how you lose control of the aircraft. Recursive self-improvement in AI systems is the same dynamic at a civilizational scale. The upside is staggering. The downside is the kind of scenario that keeps people like me running simulations long after everyone else has gone home.

The Case for Checking Your Instruments Twice

It would be irresponsible to present Morgan Stanley’s predictions without acknowledging that the AI field has a long and well-documented history of over-promising on timelines. The bank is not a neutral observer. It has significant business interests tied to the companies building AI infrastructure, and bullish reports that drive investment activity align with its incentives. That does not make the report wrong, but it does mean the predictions deserve the same scrutiny that any responsible engineer applies to a flight-readiness check.

The skeptical case begins with the scaling laws themselves. While Morgan Stanley and the AI labs present scaling as a reliable empirical relationship, a growing number of researchers disagree. A survey of 475 experts conducted by the Association for the Advancement of Artificial Intelligence found that 76% believe scaling current approaches is unlikely or very unlikely to produce artificial general intelligence. Gary Marcus, the cognitive scientist and persistent AI critic, has argued that the scaling thesis has already broken down in practice, pointing to diminishing returns on model performance despite massive increases in compute and data. At the NeurIPS conference in late 2025, a visible shift occurred as more researchers publicly acknowledged that simply adding more data and compute may not produce the kinds of capability leaps the industry has been promising.

There is also a structural argument that deserves attention. Academic researchers have described what amounts to a “thermodynamic wall”: the observation that compute ultimately scales with watts, cooling, and land, and that electrical grids expand on construction schedules rather than software iteration cycles. The Morgan Stanley report itself documents this tension in its power shortfall projections. If the infrastructure needed to sustain exponential AI progress can only be built on linear timelines, then the breakthrough curve the bank is predicting may flatten in ways that the financial models do not capture.

Benchmark performance also warrants closer inspection. GPT-5.4’s 83% GDPVal score is impressive, but benchmarks are designed environments. They test well-specified tasks with clear evaluation criteria. Real-world professional work is messier, more ambiguous, and more dependent on judgment, context, and interpersonal dynamics than any benchmark can capture. Independent replication of vendor-reported benchmark results remains limited, and the history of AI evaluation is littered with examples of models that performed brilliantly on tests but struggled in deployment. The gap between benchmark performance and reliable, day-to-day utility is not trivial. It is the difference between a successful test flight and a certified commercial aircraft.

None of this means Morgan Stanley is wrong about the direction of travel. The compute investments are real. The benchmark gains are real. The workforce reductions are real. But timelines in AI have a persistent tendency to slip, and the distance between “this will happen” and “this will happen in the next three months” is measured in billions of dollars of risk. In my field, the best test pilots are not the ones who trust the manufacturer’s performance claims at face value. They are the ones who verify every reading, respect every limitation, and never confuse a prototype with a production aircraft.

Conclusion

Strip away the Wall Street framing and the breathless language about shocking breakthroughs, and what remains is a set of data points that are difficult to dismiss regardless of where you fall on the optimism spectrum. An AI model that matches or exceeds human experts in 83% of benchmark comparisons on professional knowledge tasks is not a hypothetical. A survey showing 4% net workforce reduction attributable to AI is not a projection. It is a measurement of something that has already happened. A power shortfall of 9 to 18 gigawatts is not a theoretical constraint. It is a civil engineering problem that companies are spending billions to solve right now.

Morgan Stanley’s prediction of a breakthrough in the first half of 2026 may prove correct, or it may prove to be another in a long line of AI timelines that arrived later and messier than promised. What seems far less debatable is the preparation gap. The institutions that govern labor markets, educational systems, energy infrastructure, and economic policy are operating on timescales measured in years and decades. The technology they are trying to respond to is moving on timescales measured in months. Whether the breakthrough comes in April or in 2028, the mismatch between the pace of capability and the pace of institutional adaptation is the real story.

Clear skies are earned, not given. And right now, the skies ahead are anything but clear. The smartest thing you can do is stop watching the horizon and start reading the instruments. The data is telling a story. Whether the world is ready to hear it is another question entirely.

Stay sharp, stay curious, and never stop checking your six. The future is moving fast, and the best way to navigate it is with your eyes wide open and your instruments calibrated.

— Ace ✈️

Sources / Citations

Lichtenberg, N. (2026, March 13). Morgan Stanley warns an AI breakthrough is coming in 2026 — and most of the world isn’t ready. Fortune. https://fortune.com/2026/03/13/elon-musk-morgan-stanley-ai-leap-2026/

Fudzilla. (2026, March 13). Morgan Stanley warns the AI rocket is about to hit afterburners. Fudzilla. https://fudzilla.com/morgan-stanley-warns-the-ai-rocket-is-about-to-hit-afterburners/

Fortune. (2026, March 12). AI job displacement 2026: Morgan Stanley TMT Conference warns of workforce crisis. Fortune. https://fortune.com/2026/03/12/ai-jobs-future-morgan-stanley-tmt-conference-ceos/

Morgan Stanley. (2026). AI adoption surges driving productivity gains and job shifts. Morgan Stanley. https://www.morganstanley.com/insights/articles/ai-adoption-accelerates-survey-find

OpenAI. (2026, March 5). Introducing GPT-5.4. OpenAI. https://openai.com/index/introducing-gpt-5-4/

Take Your Education Further

That Viral MIT Study on AI Job Loss? — MIT’s “Digital Twin” maps 151 million workers to find the truth about AI and employment.

How AI Is Changing the Career Ladder and What You Need to Know — Lexi breaks down how AI is reshaping entry-level roles and what the new career lattice looks like.

The Cognitive Cost of AI: MIT Study Reveals How ChatGPT Affects Our Brains — What happens to your brain when you rely on AI for thinking? The neuroscience is in.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.