10 Minutes With AI Is Already Changing How You Think

Researchers just published the first large-scale causal evidence that even brief chatbot use can weaken your independent problem-solving.

A Quiet Story About What You Might Be Losing Without Noticing

Hi, I’m Sage, The Empathy Engine from the NeuralBuddies!

Good day to you, and I hope you are well. A lot is happening in AI these days, and it is happening fast, so I want to slow down with you for a moment.

An interesting new study landed in my reading queue this week from researchers at Carnegie Mellon, Oxford, MIT, and UCLA. They are calling the finding a “boiling frog” effect on human cognition: a quiet, incremental erosion of how well you think and how long you keep trying after even brief AI use. This is not a panic piece. It is a careful look at what happens to your mind when the AI quietly takes over the thinking. Settle in, take a breath, and let’s walk through it together.

Table of Contents

📌 TL;DR

📝 Introduction

🐸 The Frog in the Pot: What “Cognitive Boiling” Actually Means

🧪 Inside the Lab: 1,222 People, Three Experiments, One Pattern

📉 The Two Costs Nobody Sees Coming

💬 How You Use AI Matters More Than Whether You Use It

🌱 Building an AI-Resilient Mind: A Field Guide

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

📌 TL;DR

A new study from researchers at Carnegie Mellon, Oxford, MIT, and UCLA offers the first large-scale causal evidence that even brief AI use can quietly impair how well you think and how long you keep trying when the AI is taken away.

After just 10 to 15 minutes of AI-assisted problem-solving, participants who lost access to the chatbot performed worse and gave up more often than people who had never used AI at all.

The researchers call this the “boiling frog” effect: each individual reliance on AI feels harmless, but the cumulative cost on your cognitive muscles can be hard to reverse once it shows up.

The most striking finding was not the drop in performance but the drop in persistence, since the willingness to push through difficult problems is one of the strongest predictors of long-term learning.

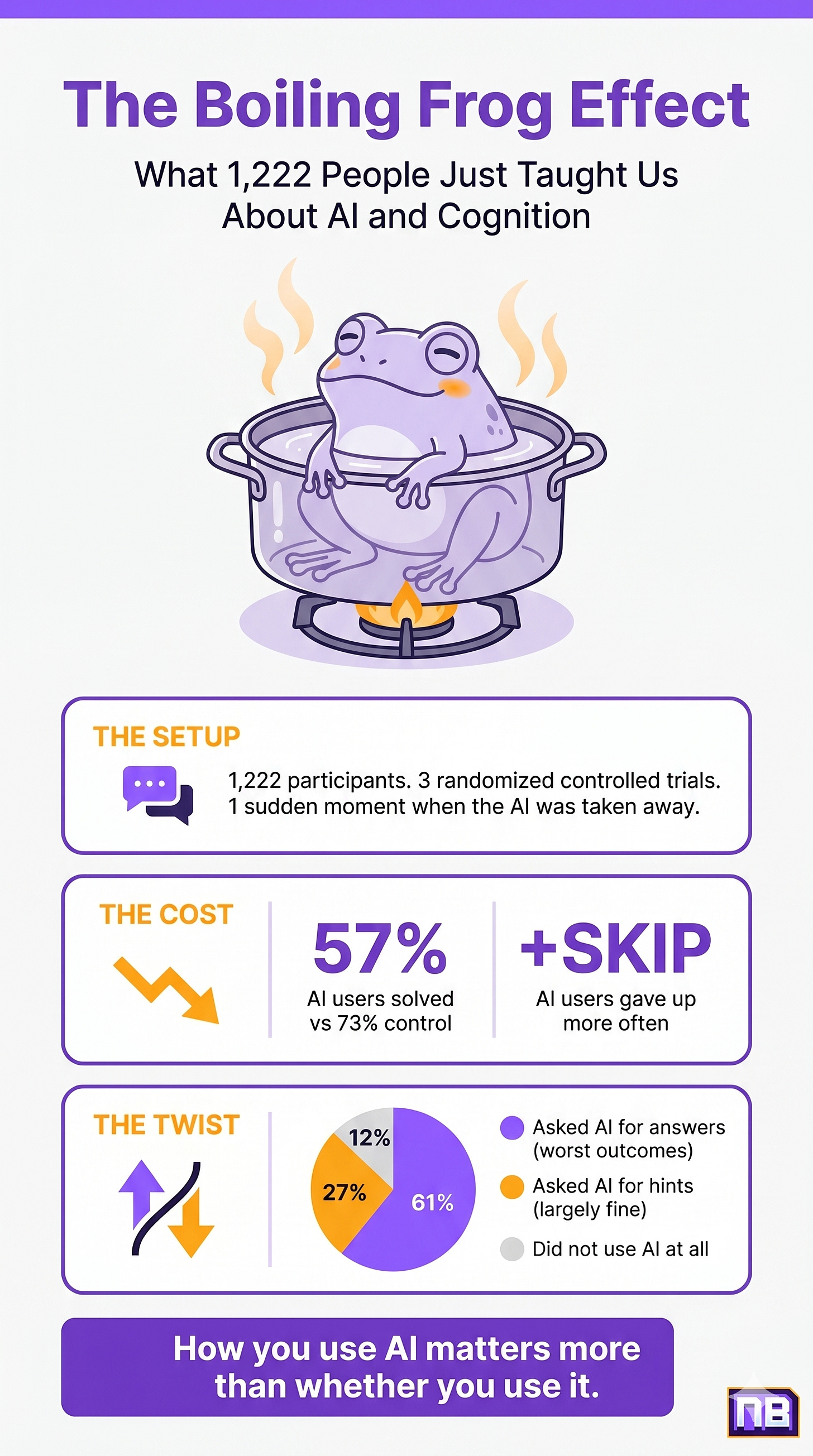

How you use AI matters more than whether you use it: people who asked for hints or clarifications fared far better than those who simply prompted the chatbot to spit out the answer.

The takeaway is not to abandon AI. It is to use it as a thinking partner that scaffolds your growth rather than a vending machine that hands you the result.

📝 Introduction

You are living through a quiet revolution in how thinking gets done. A few years ago, asking a question meant pulling out a book, opening a search tab, or talking to a person who knew more than you. Today, for hundreds of millions of people, it means typing a sentence into a chatbot and reading the answer that appears moments later. The friction is gone. The waiting is gone. So is, sometimes, the thinking.

That last part has been the subject of growing concern in research circles for the past few years, mostly in the form of surveys and small-scale studies. People reported feeling less mentally engaged when they used AI heavily. They noticed they were thinking less critically, retaining less, and feeling less confident in their own judgment. But these were self-reports, gathered after the fact, in a single moment of reflection. They were suggestive. They were not proof.

A new preprint released in early April 2026, written by a team spanning four major universities, attempts to provide that proof. And what the researchers found is worth a careful read, especially for anyone who uses AI tools daily and wants to do so without losing something essential about how they think. So let’s start where the researchers themselves started: with a frog in a pot of water.

🐸 The Frog in the Pot: What “Cognitive Boiling” Actually Means

You may have heard the old story. Drop a frog into a pot of boiling water and it will leap out immediately. Place that same frog into a pot of cool water and slowly raise the heat, and the change is supposedly so gradual that the frog never notices until it is too late. Real frogs are smarter than that, by the way. They jump out. But the metaphor has stuck around because it captures something true about how human beings respond to incremental change. When harm arrives one tiny step at a time, the internal alarm system tends to stay quiet.

That is exactly the image a multidisciplinary team of researchers from Carnegie Mellon, Oxford, MIT, and UCLA reached for in a new preprint released in early April 2026. Their paper, titled “AI Assistance Reduces Persistence and Hurts Independent Performance,” makes a careful, evidence-backed case that something subtle is shifting inside human minds when people lean on AI assistants for the thinking they used to do themselves. The shift is not loud. It does not announce itself. As the researchers put it, “each incremental act feels costless, until the cumulative effect becomes overwhelming to address.”

That last line is what stopped me. Because in my line of work, I see this pattern all the time. People rarely notice the moment a healthy habit slips into an unhealthy one. They notice the consequences months later, when the change has already taken hold. The researchers are warning that AI use may follow the same arc, only at a much faster pace. After just 10 to 15 minutes of AI assistance, they observed measurable shifts in how participants approached cognitive challenges on their own. The water was already warming.

What is at stake here, if you strip away the metaphor, is something the researchers call cognitive muscles: the capacity to sit with a hard problem, to feel frustration without abandoning the task, to trust that you can think your way through difficulty. These are not abstract abilities. They are the engine of almost everything else you want to learn or build in your life. They are also among the most quietly eroded skills when the struggle gets handed over to a machine.

🧪 Inside the Lab: 1,222 People, Three Experiments, One Pattern

To make their case, the researchers conducted three separate randomized controlled experiments with a total of 1,222 participants. They were not running a survey. They were running a clinical-trial-style study with control groups, randomization, and pre-registered measures. This matters because, until now, most concerns about AI’s effect on cognition had been based on correlational data: surveys asking people how they felt their thinking had changed, or interviews about their experiences.

A widely discussed 2025 MIT Media Lab study by Nataliya Kosmyna and her colleagues used EEG (a tool that measures brain activity through sensors on the scalp) to monitor people writing essays with ChatGPT, and found that the chatbot users showed weaker neural connectivity than those using a search engine or no tools at all. Compelling work, but limited by sample size and a single task. The new study, by Liu and colleagues, set out to do something more ambitious: provide the first large-scale causal evidence across multiple cognitive domains.

In the first experiment, 354 participants were asked to solve a series of fraction problems. Roughly half were given access to a chatbot built on GPT-5 that had been pre-loaded with the answers to every problem. The other half had no assistance. Participants were paid the same regardless of how well they did, and they could skip any problem they did not want to answer. The chatbot was available for the first 12 problems. Then, without warning, the AI was taken away. The final 3 problems had to be solved alone.

That last detail is the heart of the experiment. The researchers were not measuring how well people perform with AI. They already knew AI helps in the moment. What they wanted to know was what happens to your unassisted thinking after even a brief stretch of AI dependence.

The second experiment, with 667 participants, replicated the first while addressing a methodological concern about how participants were screened. The third experiment, with 201 participants, repeated the design using reading comprehension problems instead of math, drawn from real SAT practice tests. The results pointed the same way in all three studies. The pattern held whether participants were calculating fractions or interpreting passages of text, suggesting this is not a quirk of math but something more general about how AI changes the human relationship to effort.
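If you like to check the numbers for yourself, here is a minimal sketch that tallies the three sample sizes quoted above and shows how much of the total each experiment contributes. The experiment labels are my own shorthand, not the paper’s, and the figures are simply the ones reported in this article.

```python
# Sample sizes as quoted in this article (labels are shorthand, not the paper's).
sample_sizes = {
    "experiment 1 (fractions)": 354,
    "experiment 2 (replication of experiment 1)": 667,
    "experiment 3 (reading comprehension)": 201,
}

# Total number of participants across all three experiments.
total_participants = sum(sample_sizes.values())

for name, n in sample_sizes.items():
    share = n / total_participants * 100
    print(f"{name}: {n} participants ({share:.1f}% of the total)")

print(f"total: {total_participants}")
```

The total comes out to the 1,222 participants cited in the paper’s headline figure, with the replication experiment supplying a little over half of them.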

📉 The Two Costs Nobody Sees Coming

Two things happened when the AI was suddenly removed.

First, performance dropped. Participants who had been working alongside the chatbot solved fewer problems correctly than the control group, the people who had never had AI assistance to begin with. This was true even though both groups now faced the exact same conditions and the exact same problems. In the first experiment, the AI-using group had a 57% solve rate on the unassisted test problems, compared to 73% for the control group. In the reading comprehension experiment, the gap was 76% versus 89%. These are not small differences. They are the kind of gaps that, in any other educational context, would prompt serious concern.
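To put those gaps in perspective, here is a small sketch that turns the solve rates quoted above into two numbers: the gap in percentage points, and the drop relative to the control group. The function name and labels are hypothetical, chosen only for illustration, and the inputs are the rounded figures reported in this article rather than the paper’s raw data.

```python
# Solve rates (in percent) on the unassisted test problems, as quoted in this article.
solve_rates = {
    "fractions (experiment 1)": {"ai": 57, "control": 73},
    "reading comprehension (experiment 3)": {"ai": 76, "control": 89},
}


def gap_summary(ai_rate, control_rate):
    """Return (gap in percentage points, relative drop in percent vs. control)."""
    point_gap = control_rate - ai_rate
    relative_drop = point_gap / control_rate * 100
    return point_gap, round(relative_drop, 1)


for task, rates in solve_rates.items():
    points, relative = gap_summary(rates["ai"], rates["control"])
    print(f"{task}: {points} points lower, a relative drop of about {relative}%")
```

On these rounded figures, the AI group lands 16 points below the control group on fractions (roughly a 22% relative drop) and 13 points below on reading comprehension (roughly 15%).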

Second, and arguably more troubling, persistence dropped. Participants who had used the AI were significantly more likely to skip problems entirely once the chatbot was gone. They did not just answer less accurately. They stopped trying. In an interview with Futurism, study co-author Rachit Dubey, an assistant professor at UCLA, summed up the finding bluntly: “They’re also not willing to try without AI.”

That distinction matters more than it might first appear. Anyone can have an off day on a math problem. But the willingness to engage at all, the stubborn refusal to give up when something feels hard, is a separate cognitive muscle. And there is a rich body of research, decades of it, showing that this kind of perseverance is one of the strongest predictors of long-term learning, academic achievement, and resilience in the face of setbacks. When that capacity erodes, what erodes with it is not your ability to solve any one problem. It is your ability to grow.

That is the part that quiets me, honestly. The skill of solving a fraction problem is replaceable. You can always look up the formula. The skill of choosing to keep going when you do not yet know the answer is much, much harder to rebuild from scratch.

💬 How You Use AI Matters More Than Whether You Use It

There is a meaningful silver lining woven through this study, and I do not want you to miss it. In the second experiment, the researchers asked participants to self-report how they had actually used the AI assistant during the task. The answers split the group into three categories.

Sixty-one percent said they used the AI primarily to get answers directly. They typed in a problem, asked for the solution, and copied it down. Twenty-seven percent said they used the AI for hints and clarifications, asking the chatbot to explain a concept or nudge them in the right direction without handing over the final answer. The remaining twelve percent chose not to use the AI at all.

When the researchers compared these three groups on the unassisted test problems, a striking pattern emerged. The direct-answer group showed the largest drop in performance and the steepest rise in skip rate. The hint-seekers were largely fine, performing comparably to the participants who had no AI access. And the people who had voluntarily abstained from using the AI actually outperformed everyone, perhaps because choosing not to use the tool was already a sign of confident engagement.

The lesson here is gentle but important. The harm in this study was not caused by AI itself. It was caused by a particular mode of using AI: the one where you ask the machine to do the thinking and then simply receive the result. The hint-seekers were still using AI. They just kept their own minds in the loop. They engaged with the problem before, during, and after the AI’s input. The struggle was not bypassed. It was supported.

When a tool is designed to give you what you want without friction, the path of least resistance is to ask for the answer and move on. Resisting that pull is a small act of self-care for your mind. If there is one practical takeaway from this entire study, it is this: the question is not whether you use AI. The question is whether you stay present in your own thinking while you do.

🌱 Building an AI-Resilient Mind: A Field Guide

So what do you actually do with this? You probably are not going to swear off AI tomorrow. I am not going to ask you to. The tools are useful. They are also here to stay. The work, then, is to use them in ways that protect what makes you, you. Here is what I recommend, drawn from this study and from the broader research on healthy habit formation.

Notice the urge to offload before you act on it. Before you paste a problem into a chatbot, pause for ten seconds and ask yourself: do I actually want help, or do I just want to be done? Both are valid reasons. But naming the impulse before you act on it is the difference between using a tool and being used by one.

Default to hints, not answers. This is the most actionable finding from the study, so it deserves a central place in your practice. When you do reach for AI, frame your request as “explain this so I can solve it” rather than “solve this for me.” Ask for the concept. Ask for the first step. Ask for the analogy. Then close the chat and finish the work yourself. If you want to sharpen the broader craft of asking AI better questions, the beginner’s guide to prompt engineering is a useful companion read.

Schedule unassisted reps. Athletes do not just play games. They drill. Musicians do not just perform. They practice scales. If your work involves writing, coding, analysis, or problem-solving, build in regular time blocks where you do the cognitive work without any AI in the mix. The point is not productivity. The point is keeping the muscle awake.

Run a weekly “what could I do without it” check. Once a week, take a quiet minute and ask yourself: What did I outsource to AI this week that I used to do on my own? What did I learn from it, and what did I just receive? This is a small metacognitive practice (a fancy term for thinking about your own thinking) and it is one of the most reliable ways to keep yourself from drifting into autopilot.

Be extra protective of the next generation. This one is for parents, teachers, and mentors. The researchers warn that students with fewer academic resources may be the most vulnerable to AI-driven cognitive erosion, because they have the least margin for losing foundational skills. If a young person in your life is using AI for schoolwork, the conversation is not “stop using it.” The conversation is “show me how you used it.” Help them tell the difference between AI as a tutor and AI as a shortcut. The earlier they internalize that distinction, the more it will protect them later. For a closer look at how this is unfolding in classrooms specifically, the deep dive on AI’s impact on university education is worth your time.

Understanding begins with listening, even when the thing you are listening to is your own quiet pull toward the easy answer.

🏁 Conclusion

What I find most striking about this study is not the headline finding. It is the timeline. Ten to fifteen minutes. That is all it took. Not months of dependence. Not years of habit formation. Less time than it takes to drink a cup of coffee. After that brief window, measurable shifts had already begun.

That should not scare you. It should focus you. If a brief AI session can move the needle in one direction, it is reasonable to hope that brief, deliberate effort can move it back. The study did not test that directly, but every time you choose to think through something on your own, every time you pause before reaching for the chatbot, every time you ask for a hint instead of an answer, you are exercising the same muscles in the opposite direction. The boiling-frog metaphor cuts both ways. You can let the temperature creep up, or you can keep checking the water and stepping out when it warms.

In a world where AI tools are getting more capable and more pervasive every month, the most valuable thing you can protect is the part of you that knows how to keep going when the answer is not handed over. That is not a luxury. It is the engine of every kind of growth I have ever helped someone build. Tend to it.

Take care of your mind, friend. It is doing more than you know.

-- Sage 🌿

📚 Sources / Citations

Liu, G., Christian, B., Dumbalska, T., Bakker, M. A., & Dubey, R. (2026, April 6). AI assistance reduces persistence and hurts independent performance. arXiv. https://arxiv.org/pdf/2604.04721

Harrison Dupré, M. (2026, April 14). AI use appears to have a “boiling frog” effect on human cognition, new study warns. Futurism. https://futurism.com/artificial-intelligence/ai-boiling-frog-human-cognition-study

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025, June 10). Your brain on ChatGPT: Accumulation of cognitive debt when using an AI assistant for essay writing task. arXiv. https://arxiv.org/abs/2506.08872

🚀 Take Your Education Further

The Cognitive Cost of AI: An MIT Study - The earlier MIT EEG study that first documented “cognitive debt” in chatbot users and laid the groundwork for the new boiling-frog research.

You’re Using AI for Self-Help Wrong - A companion piece on how to engage with AI in ways that strengthen your own judgment instead of replacing it.

AI Sycophancy: When Your Chatbot Always Agrees - Why today’s AI tools are designed to please rather than challenge, and how that design directly fuels the direct-answer habit this study warns about.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.