The Ultimate Beginner's Guide to Prompt Engineering

Core Principles, Advanced Techniques, and the Rise of Context Engineering

Your Blueprint for Talking to AI (No Hard Hat Required)

Hi, I’m Gearhart, The Mechanical Maestro from the NeuralBuddies!

Look, I spend my days building robotic arms, calibrating sensors, and designing systems that run like clockwork. And if there's one thing I've learned from all that tinkering, it's this: even the best machines need a tune-up now and then. This guide was originally published back in December 2024, and a lot has changed since then.

Models got smarter, techniques evolved, and an entirely new discipline called context engineering started reshaping the conversation. So I pulled the whole thing into the shop, stripped it down to the frame, and rebuilt it from scratch with updated research, clearer examples, and a structure that actually matches how prompt engineering works in 2026.

Consider this your freshly overhauled workshop manual. Let's get those gears turning!

Table of Contents

📌 TL;DR

📝 Introduction

🔧 What Is Prompt Engineering (And Why Should You Care)?

🧱 The Building Blocks of a Great Prompt

🛠️ Prompt Types: Choosing the Right Tool for the Job

⚙️ Advanced Techniques That Actually Work in 2026

🔭 From Prompts to Context: The Bigger Picture

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

Prompt engineering is the skill of writing clear, structured instructions that help AI models understand exactly what you need and deliver useful results.

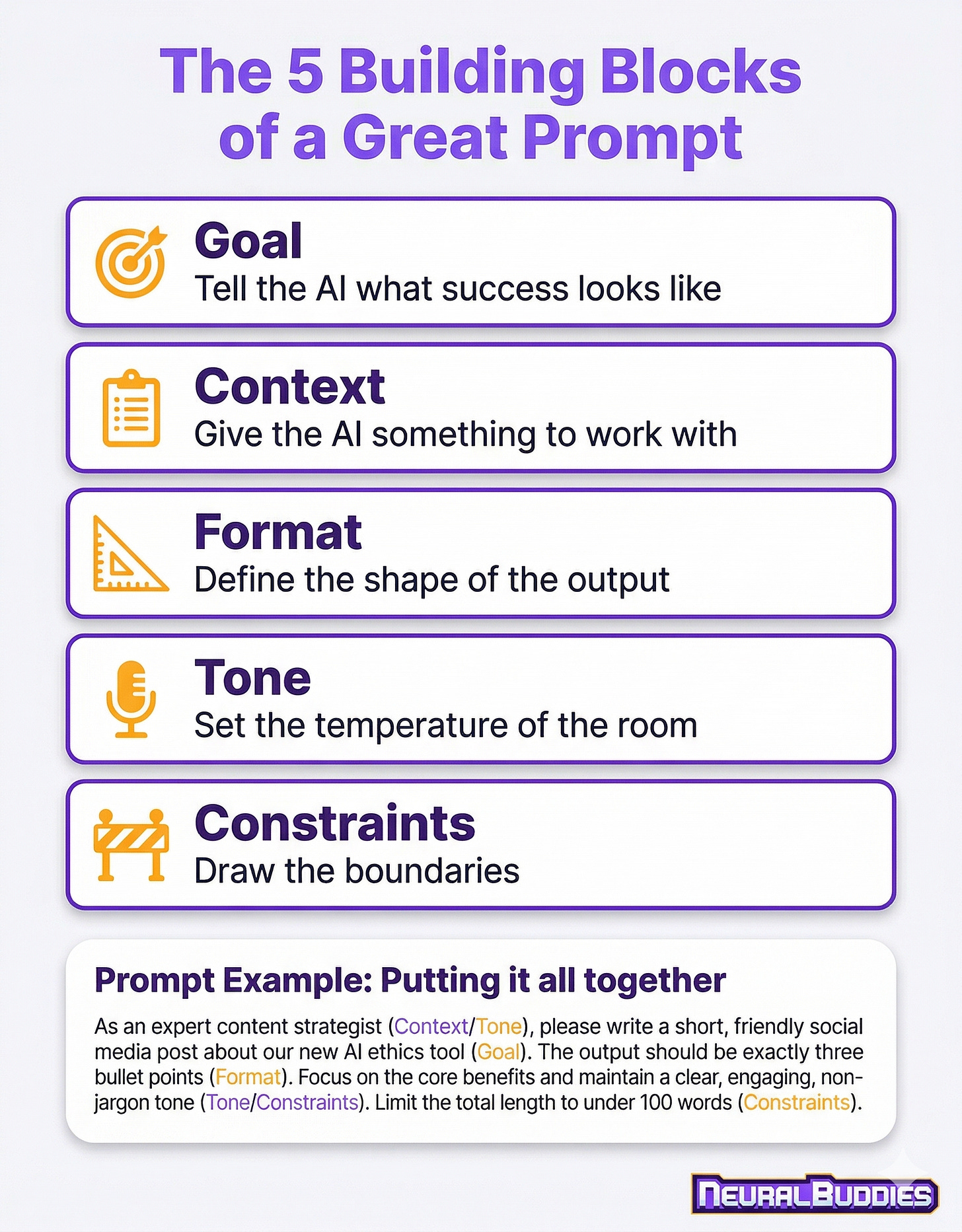

The strongest prompts combine five core elements: a clear goal, relevant context, a defined format, the right tone, and specific constraints that eliminate guesswork.

There are multiple prompt types to choose from (instructional, role-based, chain-of-thought, and more), and picking the right one for your task makes a measurable difference in output quality.

Advanced techniques like few-shot prompting, chain-of-thought reasoning, and iterative refinement can dramatically improve results, even for complex, multi-step problems.

The field is evolving beyond single prompts into context engineering, where the information surrounding your request matters just as much as the request itself.

Introduction

If you’ve ever typed a question into ChatGPT, Claude, or Gemini and thought “that’s not what I meant,” you’re not alone. Most people assume the AI just didn’t understand them. But here’s the thing: more often than not, the model understood your words just fine. It just didn’t have enough to work with.

That gap between what you wanted and what you got? That’s the space prompt engineering fills. It’s the practice of writing instructions that give an AI model the clarity, context, and structure it needs to deliver something genuinely useful. Think of it the way I think about building a machine: if the blueprint is vague, the result will be unpredictable. But hand me a clean schematic with precise tolerances, and I’ll build you something that hums.

The best part? You don’t need a technical background, a computer science degree, or any coding skills. If you can describe what you want clearly enough for a coworker to understand, you can learn prompt engineering. (And if you’re brand new to the world of AI altogether, “The Fundamentals of Artificial Intelligence” is a great place to start before diving in here.) This guide covers the foundations, walks you through the different types of prompts, introduces techniques the pros use every day, and gives you a look at where the field is heading next. By the end, you’ll be writing prompts that get results on the first try, not the fifth.

What Is Prompt Engineering (And Why Should You Care)?

At its simplest, a prompt is the text you type into an AI tool to get a response. It can be a question, an instruction, a description of what you need, or all three at once. Prompt engineering is the skill of crafting those inputs deliberately so the AI gives you the best possible output.

Here’s an analogy from the workshop. Imagine you walk up to a brand new colleague on their first day and say, “Make something useful.” They’d look at you with wide eyes, completely stuck. But if you said, “Build a shelf, 36 inches wide, using pine, with three evenly spaced levels, and sand the edges smooth,” they’d know exactly what to do. AI models work the same way. The more specific and structured your instructions, the more precise and helpful the output.

Why does this matter in 2026? Because AI tools have become incredibly powerful, but that power is only as useful as your ability to direct it. According to IBM’s prompt engineering guide, the ability to communicate effectively with AI systems through natural language has become a foundational skill across industries. Job postings requiring prompt engineering and generative AI skills have surged dramatically in recent years, and the trend shows no sign of slowing down.

Whether you’re a student using AI for research, a professional looking to save hours on repetitive tasks, or a creative mind exploring new ideas, prompt engineering is the skill that turns a general-purpose AI into your personal assistant. And the good news is, the fundamentals are surprisingly straightforward. You just need to know what levers to pull.

The Building Blocks of a Great Prompt

Every well-crafted prompt, no matter how simple or complex, is built from the same core components. Think of these as the individual parts in a machine: each one serves a specific function, and when they work together, the whole system runs smoothly.

Goal: Tell the AI What Success Looks Like

Before you type a single word, ask yourself: “What do I actually want back?” A summary? A list of ideas? A step-by-step tutorial? A persuasive email? The clearer you are about the end result, the better the model can deliver it. Instead of “Tell me about solar panels,” try “Explain how residential solar panels work in three paragraphs, written for a homeowner with no technical background.” That’s the difference between a vague request and a useful blueprint.

Context: Give the AI Something to Work With

Context is the background information that helps the model understand your situation. It might be your role, your audience, the project you’re working on, or the problem you’re trying to solve. Without context, the AI has to guess, and guessing leads to generic results. A prompt like “Write a follow-up email” gives the model almost nothing. But “Write a polite follow-up email to a potential client who attended our product demo last Thursday but hasn’t responded to our proposal” gives it a scene to work within.

Format: Define the Shape of the Output

Do you want bullet points? A table? A numbered list? A conversational paragraph? Telling the AI how to structure its response is just as important as telling it what to include. Models are remarkably good at following formatting instructions when you provide them. If you need a comparison table with three columns, say so. If you want a response under 200 words, include that constraint.

Tone: Set the Temperature of the Room

Tone guides how the AI “sounds” in its response. Professional and formal? Friendly and casual? Technical and precise? A prompt that says “Explain machine learning to a group of executives” will produce a very different response than “Explain machine learning to a curious 12-year-old.” Both are valid; they just serve different audiences.

Constraints: Draw the Boundaries

Constraints are the guardrails that keep the AI focused. They might include word count limits, topics to avoid, sources to prioritize, or specific terminology to use (or not use). Constraints reduce the AI’s guesswork and make outputs more predictable. Think of them as the tolerances on an engineering drawing: the tighter the spec, the more precise the result.

When you combine all five elements, your prompt transforms from a casual question into a precision instrument. Here’s an example that puts it all together:

“You are a financial advisor writing for first-time investors in their 20s. Explain the difference between a Roth IRA and a traditional IRA in 200 words or fewer. Use simple language, avoid jargon, and include one practical example of when each option makes more sense. Format the response as two short paragraphs, one for each account type.”

That prompt has a goal (explain the difference), context (first-time investors in their 20s), format (two paragraphs), tone (simple, no jargon), and constraints (200 words, one practical example each). The AI knows exactly what to build.
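You don’t need code to use any of this, but if you like to see the parts laid out on the bench, here is a minimal Python sketch of the same idea: five labeled parts bolted together into one prompt. The `PromptSpec` class and `build_prompt` function are names I made up for illustration; they are not part of any AI library, and the labeled layout is just one reasonable way to arrange the pieces.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the five building blocks of a prompt."""
    goal: str
    context: str
    output_format: str
    tone: str
    constraints: list[str] = field(default_factory=list)


def build_prompt(spec: PromptSpec) -> str:
    """Join the five parts into one block of labeled plain text."""
    lines = [
        f"Goal: {spec.goal}",
        f"Context: {spec.context}",
        f"Format: {spec.output_format}",
        f"Tone: {spec.tone}",
    ]
    if spec.constraints:
        lines.append("Constraints:")
        lines.extend(f"- {item}" for item in spec.constraints)
    return "\n".join(lines)


# The Roth IRA example from above, split into its parts.
ira_spec = PromptSpec(
    goal="Explain the difference between a Roth IRA and a traditional IRA.",
    context="The reader is a first-time investor in their 20s.",
    output_format="Two short paragraphs, one for each account type.",
    tone="Simple language, no jargon.",
    constraints=[
        "200 words or fewer.",
        "Include one practical example of when each option makes more sense.",
    ],
)

ira_prompt = build_prompt(ira_spec)
print(ira_prompt)
```

Keeping the parts separate makes it easy to spot a missing one: an empty context or format field is a hint that the model will have to guess.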

Prompt Types: Choosing the Right Tool for the Job

Just like a workshop full of tools, prompt engineering gives you a variety of approaches, and choosing the right one depends on what you’re trying to accomplish. Here are the most useful types to have in your toolkit.

Instruction Prompts are the most straightforward. You tell the AI exactly what to do: “Summarize this article in three bullet points” or “Translate this email into Spanish while keeping the formal tone.” These work best for clear, well-defined tasks where there’s not much room for interpretation.

Role-Based Prompts ask the AI to adopt a specific perspective or expertise. By saying “You are a pediatrician explaining childhood vaccinations to nervous new parents,” you’re not just giving a task; you’re shaping the entire personality, vocabulary, and empathy level of the response. Role-based prompts are especially powerful when you need the AI to tailor its explanation to a specific audience.

Exploratory Prompts encourage open-ended thinking and brainstorming. These are your “What if?” prompts: “Brainstorm ten creative ways a local bakery could use social media to attract college students.” They’re designed to generate ideas rather than definitive answers, and they work best when you want quantity and variety before narrowing down.

Chain-of-Thought Prompts ask the AI to show its reasoning step by step. Instead of just providing an answer, the model walks through its logic: “Solve this word problem and explain your reasoning at each step.” This is incredibly useful for math, analysis, troubleshooting, and any task where understanding the “why” matters as much as the “what.”

Context-Setting Prompts front-load background information before the actual request. They’re useful when the task requires the AI to understand a specific scenario: “Given that this is a B2B software company targeting mid-market healthcare organizations with a limited marketing budget, suggest three content marketing strategies.” The context frames everything that follows.

Iterative Prompts build on previous responses. You start with a first pass, review what the AI produced, and then refine: “Take the marketing plan you just wrote and adjust it for a budget of $5,000 per month instead of $15,000, while keeping the core messaging intact.” This back-and-forth approach is one of the most practical skills in prompt engineering, and it mirrors the way engineers prototype: build, test, adjust, repeat.

Comparative Prompts ask the AI to analyze similarities, differences, or trade-offs between multiple options. “Compare the pros and cons of React and Vue.js for a small team building their first web application” is a comparative prompt that invites structured, balanced analysis rather than a one-sided recommendation.

You don’t have to memorize these categories like a textbook. In practice, most effective prompts blend two or three types together naturally. The real skill is recognizing what your task needs and reaching for the right combination.

Advanced Techniques That Actually Work in 2026

Once you’re comfortable with the basics, these techniques can take your results to another level. Fair warning: you don’t need all of these for everyday tasks. But when the stakes are higher or the problem is more complex, having these in your toolkit makes a real difference.

Few-Shot Prompting is one of the highest-value techniques available. Instead of just describing what you want, you show the AI a few examples of ideal input-output pairs. For instance, if you want the AI to classify customer feedback as positive, negative, or neutral, you’d provide three or four labeled examples first, then present the new feedback for classification. The examples teach the model the pattern you’re looking for, and it follows suit. Think of it as showing a new machinist a finished part before handing them the blueprint.
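Here is a rough sketch of how that customer feedback example could be assembled in Python. The function name `build_few_shot_prompt`, the “Feedback:” and “Label:” layout, and the three sample comments are all my own assumptions for illustration; any consistent layout that pairs each input with its label should serve the same purpose.

```python
def build_few_shot_prompt(examples, new_feedback):
    """Build a classification prompt: labeled examples first, new item last."""
    parts = [
        "Classify each piece of customer feedback as positive, negative, or neutral.",
        "",
    ]
    for text, label in examples:
        parts.append(f"Feedback: {text}")
        parts.append(f"Label: {label}")
        parts.append("")
    # The new item ends with an empty label for the model to fill in.
    parts.append(f"Feedback: {new_feedback}")
    parts.append("Label:")
    return "\n".join(parts)


# Hand-written sample feedback, one per category.
labeled_examples = [
    ("The checkout process was quick and painless.", "positive"),
    ("My order arrived two weeks late and nobody answered my emails.", "negative"),
    ("The package arrived on Tuesday.", "neutral"),
]

few_shot_prompt = build_few_shot_prompt(
    labeled_examples, "Support fixed my issue in five minutes!"
)
print(few_shot_prompt)
```

Ending the prompt on an open “Label:” line shows the model exactly where its answer belongs and what shape it should take.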

Chain-of-Thought (CoT) Reasoning asks the model to break a complex problem into smaller steps and work through them one at a time. This technique dramatically improves accuracy on tasks that involve logic, math, or multi-step analysis. A simple addition like “Think through this step by step” can make the difference between a surface-level answer and a genuinely thoughtful one. That said, as models have gotten smarter in 2026, some of the newest reasoning-focused models handle this internally, so you may not always need to spell it out.

Negative Prompting tells the AI what you don’t want. It might sound counterintuitive, but it’s incredibly effective for avoiding common pitfalls. “Explain blockchain technology. Do not use the words ‘revolutionary’ or ‘game-changing.’ Avoid hype and focus on how the technology actually works.” Negative constraints sharpen the output by closing off the paths you don’t want the model to take.
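Negative constraints are also easy to inspect after the fact. The sketch below is a small quality check, not part of the prompt itself: it scans a reply for the words you banned. The helper name `find_banned_terms` and the sample reply are hypothetical, written by hand for this example.

```python
import re

BANNED_TERMS = ["revolutionary", "game-changing"]


def find_banned_terms(reply: str, banned: list[str]) -> list[str]:
    """Return the banned terms that still appear in the reply (case-insensitive)."""
    hits = []
    for term in banned:
        # Word boundaries keep us from matching inside longer words.
        pattern = rf"\b{re.escape(term)}\b"
        if re.search(pattern, reply, flags=re.IGNORECASE):
            hits.append(term)
    return hits


# Hypothetical model reply, written by hand for this example.
sample_reply = "Blockchain is a Revolutionary way to keep a shared ledger."
violations = find_banned_terms(sample_reply, BANNED_TERMS)
print(violations)  # ['revolutionary']
```

If the list comes back non-empty, that is your cue for a follow-up prompt restating the constraint.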

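Self-Consistency Prompting asks the AI to answer the same question several times, independently, and then compares the results. The most reliable way to do this is to run the same prompt a few times (or in separate chats) and see which answer comes up most often. A lighter version fits inside a single prompt: “Give me three different approaches to solving this problem, then evaluate which one is strongest and why.” This technique is especially useful when you need confidence in the answer: if three separate reasoning paths lead to the same conclusion, you have better grounds to trust it, although agreement alone is not proof that the answer is correct.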
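For the curious, here is a minimal sketch of the “ask several times and compare” idea as a simple majority vote. There is no real AI call in it: `make_fake_model` is a stand-in I invented that replays canned answers, so the example runs anywhere. In practice you would swap in whatever function sends a prompt to your model of choice.

```python
from collections import Counter
from itertools import cycle


def make_fake_model(canned_answers):
    """Return a stand-in for a real model call that replays canned answers."""
    answers = cycle(canned_answers)

    def ask_model(prompt: str) -> str:
        return next(answers)

    return ask_model


def most_consistent_answer(ask_model, prompt, runs=5):
    """Ask the same question several times and keep the most common answer."""
    answers = [ask_model(prompt).strip().lower() for _ in range(runs)]
    winner, votes = Counter(answers).most_common(1)[0]
    return winner, votes / runs


# Four of the five canned replies agree; one is a stray wrong answer.
fake_model = make_fake_model(["42", "42", "41", "42", "42"])
answer, agreement = most_consistent_answer(
    fake_model, "What is 6 times 7? Reply with the number only."
)
print(answer, agreement)  # 42 0.8
```

The agreement score is a rough confidence gauge: a low score means the runs disagree and the question deserves a closer look.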

Structured Output Formatting is less a technique and more a habit that pays dividends every time. Instead of hoping the AI formats its response in a useful way, you specify exactly what you want: “Present your response as a table with four columns: Feature, Advantage, Disadvantage, and Use Case.” When you define the structure upfront, the output is immediately usable, no reformatting needed. If you’re curious about how formatting choices like Markdown actually influence the way language models process and respond to your prompts, “Marking Up the Prompt” goes deeper into that topic.
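One payoff of a defined structure is that a program can read the result, too. The sketch below assumes the model answers with a pipe-delimited table, which is a common layout but not a guarantee. The helper names and the placeholder reply are mine, invented for illustration.

```python
COLUMNS = ["Feature", "Advantage", "Disadvantage", "Use Case"]


def table_instruction(columns):
    """Write the formatting instruction from a list of column names."""
    return (
        f"Present your response as a table with {len(columns)} columns: "
        f"{', '.join(columns)}."
    )


def parse_pipe_table(reply):
    """Turn a pipe-delimited table into a list of row dictionaries."""
    rows = []
    for line in reply.strip().splitlines():
        cells = [cell.strip() for cell in line.strip().strip("|").split("|")]
        if all(set(cell) <= set("-: ") for cell in cells):
            continue  # skip the |---|---| separator row
        rows.append(cells)
    header, body = rows[0], rows[1:]
    return [dict(zip(header, row)) for row in body]


# Placeholder reply written by hand; a real model reply would go here.
sample_reply = """
| Feature | Advantage | Disadvantage | Use Case |
|---|---|---|---|
| Example feature | Example advantage | Example disadvantage | Example use case |
"""

print(table_instruction(COLUMNS))
parsed_rows = parse_pipe_table(sample_reply)
print(parsed_rows[0]["Use Case"])  # Example use case
```

If the reply does not parse cleanly, that usually means the format instruction needs tightening.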

Iterative Refinement deserves special mention because it’s the technique most people underuse. Your first prompt doesn’t need to be perfect, and it almost never will be. Write it, review the output, identify what’s missing or off, and send a follow-up prompt that addresses the gap. This loop (build, test, adjust) is how every good engineer works, and it applies beautifully to prompt engineering. Within a single chat, the AI can see the earlier messages, so each refinement builds on the last.
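Under the hood, a refinement works because the earlier turns travel along with the new request. Here is a bare-bones sketch of that running history, with no real model attached. The “user” and “assistant” role names follow a convention many chat tools use, but treat them as an assumption here, and note that the draft text is only a placeholder.

```python
def add_turn(history, role, text):
    """Return a new history list with one more message appended."""
    return history + [{"role": role, "content": text}]


history = []
history = add_turn(
    history, "user", "Write a one-month marketing plan for a $15,000 budget."
)
# Placeholder for the model's first draft; a real reply would go here.
history = add_turn(history, "assistant", "(first draft of the plan goes here)")
# The refinement refers back to "that plan", which only makes sense
# because the earlier turns are still part of the conversation.
history = add_turn(
    history,
    "user",
    "Adjust that plan for $5,000 per month instead of $15,000, "
    "and keep the core messaging intact.",
)

for message in history:
    print(f"{message['role']}: {message['content']}")
```

Start a brand new chat and that history is gone, which is why a refinement prompt pasted into a fresh conversation tends to fall flat.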

One important note for 2026: reports suggest that prompt performance can start to degrade when prompts get excessively long (beyond roughly 3,000 tokens). For most everyday tasks, the practical sweet spot for the instructions themselves is between 150 and 300 words; reference material you attach for the model to work from is a separate matter. Longer is not automatically better. Be precise and concise, and let the model do the heavy lifting.
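If you want a quick gauge for those numbers, a few lines of Python will do. This is a rough sketch only: real token counts depend on the model’s tokenizer, and the four-characters-per-token figure is a rule of thumb I am assuming, not an exact conversion. The function names are hypothetical.

```python
def word_count(prompt: str) -> int:
    """Count whitespace-separated words."""
    return len(prompt.split())


def rough_token_estimate(prompt: str) -> int:
    """Very rough estimate, assuming about 4 characters per token."""
    return max(1, len(prompt) // 4)


def length_report(prompt, low=150, high=300, token_ceiling=3000):
    """Compare a prompt against the word range and token ceiling above."""
    words = word_count(prompt)
    tokens = rough_token_estimate(prompt)
    if tokens > token_ceiling:
        verdict = "over the token ceiling"
    elif words > high:
        verdict = "longer than the sweet spot"
    elif words < low:
        verdict = "shorter than the sweet spot"
    else:
        verdict = "in the sweet spot"
    return {"words": words, "approx_tokens": tokens, "verdict": verdict}


print(length_report("Summarize this article in three bullet points."))
```

A “shorter than the sweet spot” verdict is not a fault by itself; a simple task can be served perfectly well by a one-line prompt.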

From Prompts to Context: The Bigger Picture

If you’ve made it this far, you’ve already leveled up your AI communication skills significantly. But I want to give you a glimpse of where the field is heading, because it’s shifting in a fascinating direction.

In the early days of AI chatbots, getting good results was almost entirely about the prompt itself: the exact words you chose, the way you phrased your question, the specific instructions you included. That was prompt engineering in its purest form.

But as AI models have grown more capable and as the tasks people use them for have become more complex, a new discipline has emerged alongside prompt engineering: context engineering. Andrej Karpathy, one of the most respected voices in AI, framed it in a way that resonates with my engineering brain. He described the language model as a CPU and the context window (the information the model can “see” at any given time) as RAM. Carry that picture one step further and you, the user, are doing the operating system’s job: loading exactly the right information for each task.

Context engineering is the practice of designing not just what you ask, but what information surrounds your request when you ask it. It includes things like conversation history, reference documents, background data, user preferences, and even the tools the AI has access to. Where prompt engineering focuses on crafting the perfect question, context engineering focuses on building the perfect environment for the AI to think within.

Here’s a practical example. Say you’re using AI to help draft a quarterly business report. A pure prompt engineering approach might be: “Write a Q1 business report for a SaaS company.” A context engineering approach would feed the model your actual Q1 data, your company’s reporting template, last quarter’s report for reference, and your CEO’s preferences on tone and length. The model then produces something tailored, accurate, and immediately useful.
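To make the quarterly report example concrete, here is a minimal sketch of assembling that context in Python. Everything in it is an assumption for illustration: the `assemble_context` helper, the “###” section labels, and the word budget are my own choices, and the materials are placeholders that you would replace with your real documents.

```python
def assemble_context(request, materials, max_words=2000):
    """Put labeled reference material ahead of the request, within a word budget."""
    sections = []
    used = len(request.split())
    for label, text in materials:
        cost = len(text.split())
        if used + cost > max_words:
            continue  # skip material that would blow the budget
        sections.append(f"### {label}\n{text}")
        used += cost
    # The request goes last, after the model has seen the background.
    sections.append(f"### Request\n{request}")
    return "\n\n".join(sections)


# Placeholders only; swap in your real documents.
materials = [
    ("Q1 data", "(paste your actual Q1 figures here)"),
    ("Reporting template", "(paste your company's template here)"),
    ("Last quarter's report", "(paste last quarter's report here)"),
    ("CEO preferences", "(notes on preferred tone and length)"),
]
request = "Write a Q1 business report for a SaaS company using the material above."

full_context = assemble_context(request, materials)
print(full_context)
```

The budget check is the operating system part of the analogy in miniature: something has to decide what gets loaded and what stays on the shelf.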

For most beginners, this distinction is something to be aware of rather than something to master right away. The fundamentals of writing clear, structured prompts are still the foundation everything else is built on. But as you grow more comfortable and start using AI for bigger projects, you’ll find that the quality of the context you provide matters just as much as the quality of the prompt itself. The two skills complement each other perfectly, like a well-designed gear system where every tooth fits precisely into the next.

Conclusion

Prompt engineering is, at its heart, a communication skill. It’s about learning to speak clearly, specifically, and structurally so that an incredibly powerful tool can understand exactly what you need. And like any skill, it gets better with practice.

Start simple. Pick a task you do regularly, whether it’s drafting emails, summarizing articles, brainstorming ideas, or learning a new concept, and write a prompt using the building blocks covered in this guide: goal, context, format, tone, and constraints. Review the output. Ask yourself what’s missing. Refine and try again. That cycle of build, test, and adjust is where the real learning happens.

The AI models available today are more capable than ever, but they still rely on you to point them in the right direction. The good news is that you don’t need to be a programmer, a data scientist, or an AI researcher to get extraordinary results. You just need to be a thoughtful communicator. And if you’ve read this far, you already are.

Keep tinkering, keep learning, and remember: the best results come from the clearest blueprints. Engineering progress, one gear at a time.

— Gearhart 🔧

Sources / Citations

IBM. (2026). The 2026 Guide to Prompt Engineering. IBM Think. https://www.ibm.com/think/prompt-engineering

Wiegold, T. (2026, February 21). Prompt Engineering Best Practices 2026. Thomas Wiegold Blog. https://thomas-wiegold.com/blog/prompt-engineering-best-practices-2026/

SDG Group. (2026, March). The Evolution of Prompt Engineering to Context Design in 2026. SDG Group Insights. https://www.sdggroup.com/en-ae/insights/blog/the-evolution-of-prompt-engineering-to-context-design-in-2026

Google Cloud. (2026, January 14). Prompt Engineering for AI Guide. Google Cloud. https://cloud.google.com/discover/what-is-prompt-engineering

Sombra Inc. (2026, January 27). AI Context Engineering in 2026: Why Prompt Engineering Is No Longer Enough. Sombra Blog. https://sombrainc.com/blog/ai-context-engineering-guide

Take Your Education Further

Master the Art of Prompting - A hands-on deep dive into practical prompting strategies with real examples you can use right away

Prompt Engineering Is Dead - An exploration of how the discipline is evolving beyond simple prompt tricks and what comes next

What Is Agentic AI? - Understanding the autonomous AI systems that context engineering is designed to power

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.