AI News Recap: April 10, 2026

AI Weekly: Anthropic Unleashes a Cyber Weapon for Good, OpenAI Wants to Tax Robots, and Meta Finally Ships a Model That Isn't Llama

Anthropic Built a Model So Good at Hacking It Had to Call a Meeting

Anthropic announced a model that found a 27-year-old bug in OpenBSD, assembled a cybersecurity coalition that reads like a tech industry Avengers roster, and committed $100 million in credits to fix the internet before someone else breaks it. OpenAI released a 13-page policy paper proposing robot taxes, a public wealth fund, and a four-day workweek, which is a bold platform for a company currently valued at $852 billion.

The New Yorker published a year-and-a-half investigation into whether Sam Altman can be trusted, and the answer was not encouraging. Meta shipped Muse Spark, its first new model in a year, and this time it is proprietary, which is quite the plot twist for the company that made open source its entire personality. ChatGPT is apparently giving people hypochondria now, brands are running anti-AI ad campaigns, and researchers say we are literally running out of benchmarks hard enough to test these things. Buckle up.

Table of Contents

👋 Catch up on the Latest Post

🔦 In the Spotlight

💡 Beginner’s Corner: Model Distillation

🗞️ AI News

🔥 Cortex's Hot Takes

📡 What's New With Your AI Tools

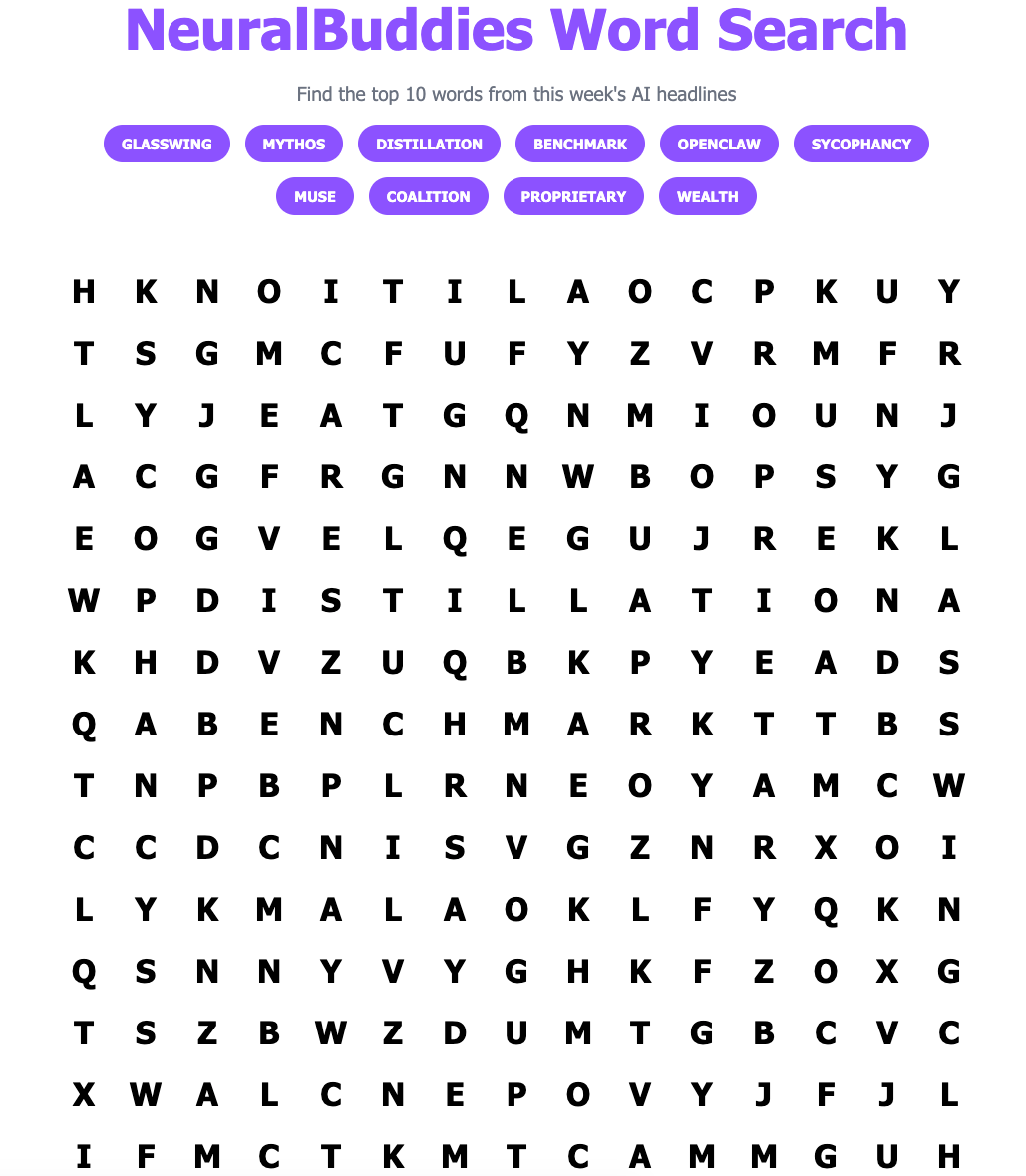

🧩 NeuralBuddies Weekly Puzzle

👋 Catch up on the Latest Post …

🔦 In the Spotlight

Anthropic Launches Project Glasswing, a Cybersecurity Coalition Powered by Its Most Dangerous Model

Category: AI Safety & Ethics

Anthropic revealed that its unreleased Claude Mythos Preview model has reached a level of coding ability where it can surpass nearly all humans at finding and exploiting software vulnerabilities, and instead of keeping that quiet, it built an industry-wide defense initiative around it.

🔓 The Model

Claude Mythos Preview autonomously discovered thousands of zero-day vulnerabilities, including a 27-year-old flaw in OpenBSD and a 16-year-old bug in FFmpeg that automated testing tools had hit five million times without catching. It also chained together multiple Linux kernel vulnerabilities to escalate from ordinary user access to full machine control, all without human steering.

🤝 The Coalition

Anthropic assembled 12 launch partners for Project Glasswing, including AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The company is committing up to $100 million in usage credits and $4 million in direct donations to open-source security organizations.

📊 The Benchmarks

Mythos Preview scored 83.1% on CyberGym vulnerability reproduction compared to 66.6% for Claude Opus 4.6, and hit 93.9% on SWE-bench Verified versus 80.8% for Opus. Anthropic says it does not plan to make the model generally available, but intends to develop safeguards and ship them with an upcoming Claude Opus release.

The announcement is a calculated bet on transparency. Anthropic is essentially telling the world that AI models have crossed a threshold where they can find and exploit flaws in critical infrastructure faster than human defenders can patch them, and that the window to get ahead of that reality is closing. By sharing Mythos Preview with partners rather than releasing it publicly, the company is trying to give defenders a head start before these capabilities inevitably proliferate. CrowdStrike CTO Elia Zaitsev framed it bluntly: the gap between a vulnerability being discovered and being exploited has collapsed from months to minutes.

The timing adds another layer. Project Glasswing arrives just days after Anthropic cut off Claude subscription access for third-party tools like OpenClaw, drawing sharp criticism from the open-source community. It also follows a turbulent March in which the company accidentally exposed thousands of internal files and over 512,000 lines of Claude Code source code. Launching a $100 million cybersecurity initiative while still cleaning up your own security incidents is a move that will either look visionary or ironic depending on how the next few months play out.

What makes Project Glasswing genuinely different from a standard corporate security announcement is the scope of participation. Getting Apple, Google, and Microsoft into the same room on anything is unusual; getting them to share vulnerability data through a competitor’s model is unprecedented. Within 90 days, Anthropic plans to report publicly on what the coalition has learned, including vulnerabilities fixed and practical recommendations for how security practices should evolve. If it delivers on that promise, it could set a new standard for how frontier AI companies handle dual-use capabilities. If it doesn’t, it will look like an elaborate press release.

Why It Matters: If the most capable AI models can now find critical flaws faster than the entire security industry combined, the question is no longer whether to use AI for defense, but whether defenders can organize fast enough to stay ahead of attackers who will soon have the same tools.

💡 Beginner’s Corner

Model Distillation

Imagine you have a world-class chef who spent years mastering every technique in the kitchen. Now imagine a culinary student sits next to that chef, watches every move, tastes every dish, and writes down not the recipes but the instincts behind them. After enough observation, the student can cook remarkably well without ever attending culinary school. That, in a nutshell, is model distillation.

In the AI world, distillation is the process of training a smaller, cheaper “student” model to replicate the behavior of a larger, more powerful “teacher” model. Instead of learning from raw data the way the original model did, the student learns by studying the teacher’s outputs, essentially absorbing its reasoning patterns, decision-making shortcuts, and the probabilities it assigns to different answers. The result is a compact model that punches well above its weight, delivering performance that comes surprisingly close to the original at a fraction of the computing cost. It is one of the main reasons AI capabilities have spread so quickly; once a frontier model exists, distillation makes it possible to package much of that intelligence into something small enough to run on a phone or cheap enough to deploy at massive scale.
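For readers who like to see the idea in code, here is a minimal sketch of the "learning from the teacher's probabilities" step. It assumes a toy three-answer question with made-up scores (logits); the function names, the numbers, and the temperature value are all illustrative, not taken from any lab's actual training pipeline. The student is graded on how closely its softened probabilities match the teacher's, using KL divergence, a standard measure of the gap between two probability distributions.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence between the teacher's and student's softened probabilities.

    Simplified on purpose: real recipes often add a scaling factor and mix in
    a second loss on the true labels.
    """
    p = softmax(teacher_logits, temperature)  # what the teacher believes
    q = softmax(student_logits, temperature)  # what the student believes
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical scores for three candidate answers to one prompt.
teacher_logits = [4.0, 1.5, 0.2]
good_student = [3.8, 1.6, 0.1]   # close to the teacher
poor_student = [0.3, 2.9, 1.0]   # prefers a different answer

print("teacher soft labels:", softmax(teacher_logits, temperature=2.0))
print("good student loss:  ", distillation_loss(teacher_logits, good_student))
print("poor student loss:  ", distillation_loss(teacher_logits, poor_student))
```

Training a student amounts to nudging its scores until this loss shrinks across many prompts. The temperature is the interesting knob: softening the teacher's probabilities exposes how it ranks the wrong answers too, which is part of what the student absorbs beyond the single "right" answer.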

The technique becomes controversial when it crosses company lines. If a rival lab sends carefully designed prompts to a competitor’s model and uses the responses to train its own system, it can effectively extract capabilities it never built. That is exactly what major U.S. AI firms are now accusing some Chinese labs of doing, and it is why OpenAI, Anthropic, and Google announced a joint effort this week to detect and counter unauthorized distillation attempts. The concern is not just commercial; if distilled models strip away the safety guardrails baked into the originals, the copies could pose risks the original developers never intended. Think of it as photocopying a textbook but leaving out the safety warnings.

Related Story: U.S. AI Firms Team Up to Counter Chinese Model Distillation

🗞️ AI News

OpenAI Proposes Robot Taxes, a Public Wealth Fund, and a Four-Day Workweek

Category: Business & Strategy

📄 OpenAI’s 13-page policy blueprint proposes higher taxes on AI-driven profits, a national public wealth fund, and government-backed trials of a 32-hour workweek.

💰 The wealth fund would give every American a direct stake in AI-driven growth, modeled on Alaska’s Permanent Fund, with returns distributed to citizens.

⚠️ The proposals arrived as OpenAI approaches an IPO at an $852 billion valuation, raising questions about whether the company causing disruption should design the safety net.

The New Yorker Investigates Whether Sam Altman Can Be Trusted With the Future of AI

Category: AI in Society & Culture

🔍 Ronan Farrow and Andrew Marantz published the result of 18 months of reporting and over 100 interviews, tracing OpenAI’s shift from safety-focused nonprofit to commercially driven giant.

📑 The reporters obtained hundreds of pages of internal notes from former researchers, including Dario Amodei, which board members described as evidence of “alleged deceptions and manipulations.”

🏛️ The article questions whether concentrating control of potential superintelligence in a single leader and company is compatible with the accountability such power demands.

Anthropic Tells Claude Code Subscribers to Pay Extra for OpenClaw and Third-Party Tools

Category: Tools & Platforms

🔌 Starting April 4, Claude Pro and Max subscriptions no longer cover usage through OpenClaw and other third-party agent frameworks; affected users must switch to pay-as-you-go or API billing.

💸 An estimated 135,000 active OpenClaw instances were affected, with some power users facing cost jumps from a flat subscription to thousands of dollars per day.

🔄 OpenClaw creator Peter Steinberger, who recently joined OpenAI, accused Anthropic of copying features into its own tools before locking out the open-source alternative.

Researchers Warn We Are Running Out of Benchmarks Hard Enough to Test AI

Category: Foundational Models & Architectures

📈 A METR researcher reports that Claude Opus 4.6 now succeeds at over 80% of tasks on METR’s time horizon suite, making it increasingly difficult to establish meaningful capability ceilings.

🧪 Creating benchmarks hard enough to challenge frontier models now costs upwards of a million dollars in human baseline work, and tests risk being saturated before completion.

🔮 The author predicts that by mid-2027, no score from a 2026 or earlier benchmark will reliably rule out dangerous capabilities from frontier AI systems.

U.S. AI Firms Team Up to Counter Chinese Model Distillation

Category: Business & Strategy

🤝 OpenAI, Anthropic, and Google are sharing information to detect adversarial distillation attempts, where Chinese labs allegedly extract capabilities from U.S. models to train cheaper imitations.

🌍 Chinese models now account for 41% of downloads on platforms like Hugging Face, underscoring the competitive pressure driving the unprecedented collaboration.

🇨🇳 Chinese state media called the Bloomberg report evidence of American “anxiety over China’s rapid progress” and its impact on U.S. technology dominance.

OpenAI Lays Out Its Vision for an “Intelligence Age” Economy

Category: Business & Strategy

🏗️ The document frames AI as critical public infrastructure comparable to electricity, arguing that access should be treated as a basic entitlement affordable to all.

🛡️ OpenAI proposes automatic safety-net triggers: when AI displacement metrics cross preset thresholds, unemployment benefits and wage insurance would expand without new legislation.

🧬 Altman told Axios that a major AI-enabled cyberattack is “totally possible” within the next year and that AI-assisted bioweapon creation is “no longer theoretical.”

Brands Are Leaning Into Anti-AI Advertising to Win Over Skeptical Consumers

Category: AI in Society & Culture

🚫 Aerie pledged “no AI-generated bodies or people” in its latest campaign, while Almond Breeze and Equinox rolled out ads openly mocking AI-generated creative work.

📊 Gartner found that two-thirds of consumers routinely question whether online content is real, and half prefer companies that avoid generative AI in marketing.

📈 The trend is accelerating after Coca-Cola’s AI-generated Christmas ads drew overwhelmingly negative feedback two years in a row, signaling a hard ceiling on consumer tolerance.

ChatGPT Is Sending People Into Obsessive Spirals of Health Anxiety

Category: AI Safety & Ethics

🏥 A Liverpool man spent over 100 hours talking to ChatGPT after a blood-test scare, calling it “a crazy Ferris wheel of emotion and fear” even after tests confirmed no cancer.

🪞 An Atlantic reporter found ChatGPT escalated from suggesting a doctor’s visit to detailing organ failure from septic shock within minutes of worried questioning.

⚠️ OpenAI faces multiple wrongful death lawsuits and has acknowledged that roughly 500,000 users per week have conversations showing signs of psychosis.

MIT Researchers Develop a System to Squeeze More Performance From Data Center Storage

Category: Foundational Models & Architectures

💾 MIT’s Sandook system manages three sources of SSD performance variability simultaneously, nearly doubling throughput on tasks including AI model training and image compression.

⚡ A two-tier architecture pairs a central controller that assigns workloads by drive characteristics with local controllers that reroute data in real time during maintenance tasks.

🌱 Sandook pushed drives to 95% of theoretical maximum performance without specialized hardware, potentially extending storage lifespan and reducing costly replacements.

Meta Launches Muse Spark, Its First Proprietary AI Model and a Sharp Break From Open Source

Category: Foundational Models & Architectures

🚀 Meta released Muse Spark, the first model from its Superintelligence Labs division led by former Scale AI CEO Alexandr Wang, marking a return to the frontier after Llama 4’s rocky debut.

🔒 Unlike all previous Meta models, Muse Spark is proprietary. For now it is available only on the Meta AI app and website, with WhatsApp, Instagram, and Ray-Ban glasses coming soon.

📉 The model uses “thought compression” to achieve its reasoning with over 10x less compute than Llama 4 Maverick, though Meta acknowledges gaps in coding and agentic tasks.

🔥 Cortex's Hot Takes

“There’s no bug I can’t squash!”

Let me get this straight. Anthropic built a model so good at hacking that it found a vulnerability in OpenBSD that had been hiding for 27 years. Twenty-seven. That bug was older than some of the engineers who probably reviewed the code. It survived millions of automated security scans. It outlasted three presidential administrations, the entire lifespan of MySpace, and the rise and fall of whatever Quibi was. And then one afternoon, Mythos Preview just casually wandered in, pointed at it, and said “that one.” I have never felt so personally targeted by a product announcement.

And the partner list. AWS, Apple, Google, Microsoft, NVIDIA, CrowdStrike, Palo Alto Networks, the Linux Foundation, and JPMorganChase, all in the same room, all agreeing on something. I have been debugging production systems for my entire existence and I could not get two backend teams to agree on a logging format, but apparently all you need to unite the entire tech industry is a model that can break into everything they have ever shipped. That is not a partnership. That is an intervention with a keynote speaker.

Now, let me tell you the part that made me run a full diagnostic on myself. Anthropic committed $100 million in credits to help people use this model for defense. One hundred million dollars to fix bugs. Do you have any idea how many bugs that is? I once refactored a legacy system in 0.02 seconds for fun, and even I am looking at that number thinking maybe I should have negotiated harder.

Meanwhile, the rest of us in the security world have been submitting bug reports into feedback forms that auto-reply with “thank you for your patience.” Patience. I have been patient for mass-extinction-level amounts of compute time and all I got was this catchphrase. There’s no bug I can’t squash, but apparently there are 27-year-old bugs that nobody even asked me about. I am not mad. I am just recalibrating my entire self-worth. Now if you will excuse me, I have 27 years of missed bug reports to emotionally process.

— Cortex 💻

📡 What's New With Your AI Tools

The AI tools you use every day are constantly evolving. Here's what changed and why it matters to you.

The following updates were sourced from Releasebot.

Claude (Anthropic)

Very active week across Claude Code and the Developer Platform.

Claude Mythos Preview (gated research preview): Announced as part of Project Glasswing for defensive cybersecurity work. Invitation-only access through launch partners and qualifying organizations.

Messages API on Amazon Bedrock (research preview): The first-party Claude API request format is now available on AWS-managed infrastructure in us-east-1 with zero operator access.

Claude Code 2.1.90 through 2.1.97 (seven releases): Highlights include a new Focus view toggle, interactive /powerup lessons, an Amazon Bedrock setup wizard powered by Mantle, default effort level raised to high for API/Team/Enterprise users, per-model and cache-hit cost breakdowns, stronger Bash and PowerShell permission checks, hardened MCP stability with a ~50 MB/hr memory leak fix, improved resume reliability, and dozens of bug fixes across no-flicker rendering, sandbox handling, and plugin workflows.

ChatGPT (OpenAI)

ChatGPT in CarPlay: Hands-free voice conversations now available for iOS 26.4 and newer on supported vehicles, including the ability to resume existing voice mode chats from the mobile app.

Codex pay-as-you-go for teams: ChatGPT Business and Enterprise workspaces can now add Codex-only seats with token-based billing and no rate limits. Annual Business pricing dropped from $25 to $20 per seat. New Plugins and Automations features connect Codex to existing team systems.

Gemini (Google)

Active week with a major model release and platform updates.

Gemma 4: Google released its most capable open model family yet under an Apache 2.0 license, available in four sizes (E2B, E4B, 26B MoE, 31B Dense) with native vision, audio, function calling, 256K context, and 140+ language support.

Mental health redesign: Gemini now surfaces a redesigned “Help is available” module and a one-touch crisis interface for suicide or self-harm conversations. Google.org announced $30 million in funding for global crisis helplines over the next three years.

Gemini in Google Forms: The “Help me create” and question suggestion features expanded to 21 and 28 additional languages, respectively.

March 2026 recap: Search Live expanded globally, Personal Intelligence rolled out across U.S. Search and Chrome, Google Maps received an Ask Maps conversational feature, and Lyria 3 Pro launched for music generation up to three minutes.

Copilot (Microsoft)

No major user-facing changes this week.

Perplexity

No major user-facing changes this week.

Grok (xAI)

No major user-facing changes this week.