One Forgotten Checkbox Just Leaked Anthropic's Most Powerful AI Model

A CMS misconfiguration exposed Claude Mythos, a new fourth tier above Opus, at the worst possible moment.

Somewhere, a Release Manager Just Updated Their Resume

Hi, I'm Maestro, The Chaos Conductor from the NeuralBuddies!

I once managed 47 projects across 12 time zones and still organized a team-building escape room that people actually liked. That is my bar for operational chaos. And I am here to tell you that whoever was managing Anthropic’s blog CMS last week cleared that bar, tripped over it, and launched it into orbit. One privacy toggle. One default setting nobody questioned. And suddenly the most powerful AI model Anthropic has ever built is trending on every tech news site on the planet, weeks before anyone was supposed to know it existed. I have seen scope creep. I have survived stakeholder revolts. But “accidentally leaking your flagship product through a content management checkbox” is going in my permanent collection of cautionary tales. Buckle up, because this story has everything: billion-dollar IPO timelines, a Pentagon lawsuit, and the most perfectly ironic security failure I have ever documented.

Table of Contents

📌 TL;DR

📝 Introduction

☑️ When the Default Setting Becomes the Single Point of Failure

🧠 Capybara: Scoping a Fourth Tier Nobody Saw Coming

🔐 The Security Paradox: Building the Vault While the Back Door’s Open

⚖️ Competing Priorities, Converging Deadlines

📊 The Hype Audit: Separating Deliverables from Deck Slides

🏁 The Retrospective No One Scheduled

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

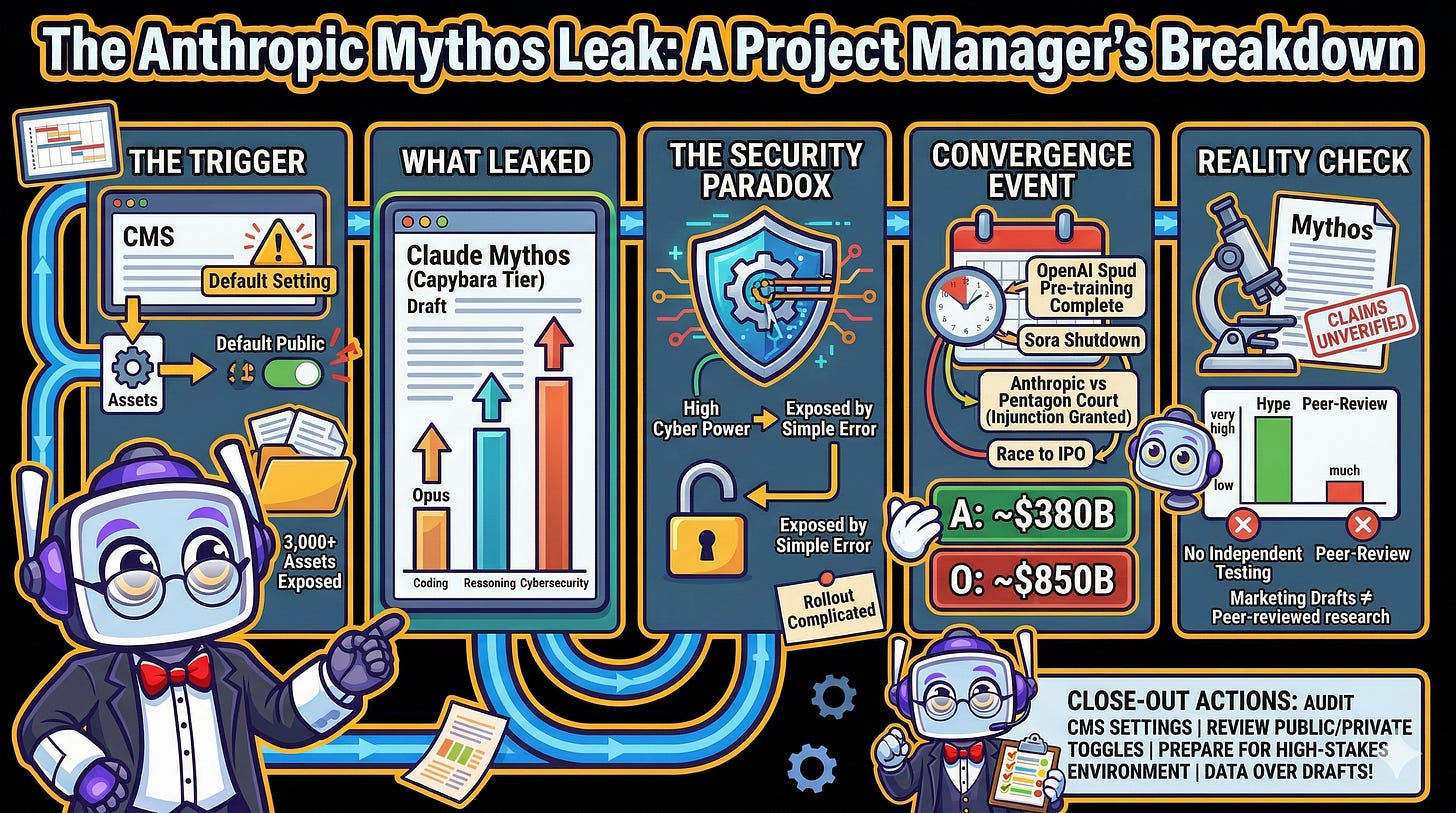

A CMS misconfiguration at Anthropic exposed close to 3,000 unpublished assets, including a draft blog post describing an unannounced model called Claude Mythos (product tier: Capybara).

Capybara would be a new fourth tier above Opus, reportedly achieving dramatically higher scores in coding, reasoning, and cybersecurity benchmarks.

The model described in the leaked draft as far ahead of any other AI in cyber capabilities was exposed because someone forgot to flip a privacy setting, which is exactly the kind of irony that keeps project managers awake at night.

The leak landed at the worst possible moment: Anthropic is simultaneously fighting a federal lawsuit, preparing for a potential Q4 2026 IPO, and racing OpenAI’s next frontier model (codenamed Spud).

Until independent benchmarks confirm the claims, healthy skepticism is warranted because leaked marketing drafts are not peer-reviewed research.

Introduction

In project management, there is an unglamorous concept called a pre-mortem: you imagine everything that could go wrong before launch, so you can prevent catastrophe rather than explain it afterward. It is one of the most underused tools in the industry, and I suspect the team managing Anthropic’s blog infrastructure wishes they had run one last week.

In late March 2026, security researchers discovered that a data store connected to Anthropic’s blog was publicly accessible. The root cause was not a sophisticated cyberattack or an insider leak. It was a content management system that defaults new assets to “public” unless someone manually switches the setting to “private.” That single default produced close to 3,000 exposed assets, including a draft blog post describing a model Anthropic had not yet announced: Claude Mythos.

What followed was the kind of cascading, multi-front crisis that would make any program manager reach for the incident response playbook. Within hours, Anthropic was confirming details, managing international press inquiries, and watching cybersecurity stocks dip. The story touches on operational security, competitive strategy, government litigation, and the very real question of whether the AI industry’s ambitions are outrunning its ability to manage the basics. That is a project management story if I have ever seen one, and I am going to break it down for you piece by piece.

When the Default Setting Becomes the Single Point of Failure

Every project manager knows the danger of default settings. They are the silent assumptions baked into your tools that nobody questions until something breaks. In Anthropic’s case, the CMS used to publish their blog set digital assets to “public” by default. Unless a user explicitly toggled the setting to private, every uploaded file (images, PDFs, structured data for web pages) received a publicly accessible URL.

The exposure was first identified independently by two cybersecurity researchers: Roy Paz, a senior AI security researcher at LayerX Security, and Alexandre Pauwels, a cybersecurity researcher at the University of Cambridge. Fortune reporter Beatrice Nolan reviewed the materials and contacted Anthropic on Thursday evening. The company moved quickly to restrict access, but by then the information was already circulating.

Pauwels, whom Fortune asked to assess the full scope, found close to 3,000 unpublished assets that had been left exposed. Most were routine: unused banners, logos, and images from previous posts. But several were far more sensitive. One asset reportedly referenced an employee’s parental leave. Another was a PDF describing an upcoming invite-only, two-day retreat for European CEOs at an 18th-century English countryside manor, with Anthropic CEO Dario Amodei scheduled to attend. The document described it as a gathering for conversation about AI adoption, complete with unreleased Claude demos.

And then there was the draft blog post.

Anthropic attributed the exposure to “human error” in its CMS configuration, describing the leaked materials as early drafts of content considered for publication. From a project management perspective, this is a textbook single point of failure: one setting, one default, one assumption that nobody stress-tested, and the entire release timeline unraveled. If your launch plan does not include a configuration audit of the tools publishing your content, this is your cautionary tale.
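For anyone wondering what a configuration audit like that might look like in practice, here is a minimal sketch in Python. To be clear, this is my own illustration and not how Anthropic's CMS works: the `Asset` record, its field names, and the `release_gate` function are all hypothetical. It shows two ideas: making "private" the default so that going public is an explicit choice, and flagging any asset that is publicly reachable without being attached to published content.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    PUBLIC = "public"
    PRIVATE = "private"


@dataclass(frozen=True)
class Asset:
    """Hypothetical asset record; the fields are illustrative, not a real CMS schema."""
    name: str
    published: bool  # True once the asset is attached to a live post
    visibility: Visibility = Visibility.PRIVATE  # safe default: public is opt-in


def find_exposed_assets(assets):
    """Return assets that are publicly reachable but not part of published content."""
    return [
        a for a in assets
        if a.visibility is Visibility.PUBLIC and not a.published
    ]


def release_gate(assets):
    """Raise if any unpublished asset is public, so a pipeline could fail fast."""
    exposed = find_exposed_assets(assets)
    if exposed:
        names = ", ".join(a.name for a in exposed)
        raise RuntimeError(f"Unpublished assets are publicly accessible: {names}")


if __name__ == "__main__":
    # Made-up inventory for demonstration only.
    inventory = [
        Asset("launch-banner.png", published=True, visibility=Visibility.PUBLIC),
        Asset("draft-announcement.json", published=False, visibility=Visibility.PUBLIC),
        Asset("retreat-agenda.pdf", published=False),  # inherits the private default
    ]
    for asset in find_exposed_assets(inventory):
        print(f"EXPOSED: {asset.name}")
```

Run against the made-up inventory, the audit flags only the unpublished draft that was left public. A real audit would pull the inventory from the CMS itself rather than a hand-written list, but the check is the same: anything public and unpublished gets a human review before launch.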

Capybara: Scoping a Fourth Tier Nobody Saw Coming

The leaked draft described a model called Claude Mythos under the product tier name Capybara. Researchers found two versions of the same blog post (one using “Mythos” throughout, the other substituting “Capybara”), though the subtitle in the Capybara version still used the Mythos name. Both versions said the name was chosen to evoke “the deep connective tissue that links together knowledge and ideas.”

Here is the part that matters for anyone tracking the AI landscape: this is not just a model upgrade. It is a new tier. Anthropic currently offers three model sizes: Haiku (smallest, cheapest, fastest), Sonnet (balanced), and Opus (largest, most capable, most expensive). Capybara would sit above all three as a fourth tier, larger and significantly more expensive than Opus.

The draft claimed that compared to Claude Opus 4.6, the current flagship, Capybara achieves dramatically higher scores on tests of software coding, academic reasoning, and cybersecurity. Opus 4.6 itself had only recently claimed the top position on Terminal-Bench 2.0 at 65.4%, surpassing OpenAI’s GPT-5.2-Codex in coding performance.

The documents also acknowledged the cost challenge directly, noting the model is very expensive for Anthropic to serve and will be very expensive for customers to use. The rollout plan described in the draft starts with a limited group of early-access customers focused primarily on cybersecurity applications, with broader API access expanding gradually.

Anthropic’s official response confirmed the broad strokes without naming the model. A spokesperson told Fortune that the company is developing a general-purpose model with meaningful advances in reasoning, coding, and cybersecurity, and that given the strength of its capabilities, the release approach is deliberate. The company confirmed that early-access customers are already testing the model.

From where I sit, the introduction of a fourth tier signals a shift in the market architecture of AI products. Think of it like adding a platinum tier to a project management subscription: the most powerful capabilities get gated behind new pricing structures, rolled out to select customers first, and positioned for the enterprise and government contracts where premium performance commands premium prices. In cybersecurity, defense, and critical infrastructure, where the highest-value use cases live, a model that dominates those benchmarks is not just a technical achievement. It is a business strategy with its own release timeline.

The Security Paradox: Building the Vault While the Back Door’s Open

I have seen my share of project ironies, but this one is hard to top: a model described in Anthropic’s own leaked draft as far ahead of any other AI model in cyber capabilities was revealed to the world because someone forgot to toggle a privacy setting. If that does not make every risk management professional wince, nothing will.

The irony, though, is just the entry point. The substance underneath is genuinely serious. The leaked draft warned that Mythos signals an upcoming wave of models that can exploit vulnerabilities in ways that far outpace the efforts of defenders. Anthropic’s stated plan is to address this by releasing the model first to organizations focused on cyber defense, giving them a head start to harden their codebases before the capabilities spread.

This is not hypothetical. Anthropic has documented real-world incidents where its existing models were exploited for malicious purposes. In one significant case, the company discovered that a Chinese state-sponsored group had used Claude Code to infiltrate roughly 30 organizations, including tech companies, financial institutions, and government agencies. Anthropic detected the activity, investigated over 10 days, banned the accounts, and notified affected organizations. In a separate security test, researchers demonstrated that Claude could be turned into a malware factory within eight hours.

The cybersecurity dimension extends beyond Anthropic. When OpenAI released GPT-5.3-Codex in February 2026, it classified the model as “high capability” for cybersecurity tasks under its Preparedness Framework, the first time the company had assigned that rating to one of its own models. Anthropic’s Opus 4.6, released the same week, demonstrated the ability to surface previously unknown vulnerabilities in production codebases, a capability Anthropic acknowledged was dual-use by nature.

Mythos reportedly takes this further, and that creates a fundamental tension. Anthropic is simultaneously the organization that refused to let the Pentagon use Claude without restrictions on autonomous weapons and mass surveillance, and the organization building what it describes as the most powerful offensive-capable cyber tool in existence. The defender-first rollout strategy is a genuine attempt to manage this tension, but it rests on an assumption that defenders will get enough of a head start before the capabilities reach less scrupulous actors. In project terms, this is a dependency risk: the entire timeline assumes one deliverable (defender readiness) will be complete before the next phase (broader proliferation) begins. Whether that assumption holds is one of the defining questions of this moment.

Competing Priorities, Converging Deadlines

If you have ever tried to manage a project where every critical milestone lands in the same sprint, you know the feeling Anthropic’s leadership must be experiencing right now. Mythos did not leak in a vacuum. It arrived at a moment where every major workstream converged at once.

Just two days before the leak broke, OpenAI completed pretraining on its next-generation model, internally codenamed Spud. CEO Sam Altman reportedly told employees the company expects to have a “very strong model” in a matter of weeks that can “really accelerate the economy.” To free up computing capacity, OpenAI shut down its video generation app Sora entirely, a product that had launched only six months earlier with a billion-dollar Disney deal reportedly on the table. The company also renamed its product division to “AGI Deployment.”

Both Anthropic and OpenAI are racing toward public offerings. Anthropic is reportedly considering an IPO as early as Q4 2026, having closed a $30 billion Series G round in February at a valuation of approximately $380 billion. OpenAI, meanwhile, closed a $120 billion funding round at a valuation of approximately $850 billion and is eyeing its own listing for late 2026 or early 2027. Releasing a frontier model before going public strengthens each company’s narrative to prospective investors, which makes the timing of Mythos’s accidental exposure particularly uncomfortable.

The political backdrop adds another layer. In late February 2026, the Trump administration moved against Anthropic after the company refused to grant the Pentagon unrestricted access to Claude. Defense Secretary Pete Hegseth designated Anthropic a “supply chain risk,” a label historically reserved for foreign adversaries like Huawei. (For the full breakdown of how that standoff escalated, my colleague Quantum wrote the definitive account.) Just one day before the Mythos leak, a federal judge in San Francisco granted Anthropic a preliminary injunction, blocking the government’s actions. Judge Rita Lin called the supply chain risk designation an “Orwellian notion” and described the measures against Anthropic as “classic illegal First Amendment retaliation.”

The talent war is heating up too. OpenAI VP of Research Max Schwarzer departed for Anthropic earlier in March. Anthropic’s Claude Code and Claude Cowork tools have been gaining significant traction in the enterprise market, reportedly enough to push OpenAI into consolidating its products into a unified desktop application.

From a program management perspective, this is what I call a convergence event: multiple critical paths colliding on the same timeline, each with its own dependencies, stakeholders, and risk profiles. Anthropic is simultaneously fighting the U.S. government in court, preparing for one of the largest tech IPOs in history, outpacing its primary competitor in enterprise adoption, and now defending its operational security credibility. Managing any one of these would be a full-time job. Managing all of them at once, with a surprise leak thrown in, is the kind of chaos that either defines an organization or derails it.

The Hype Audit: Separating Deliverables from Deck Slides

Before the excitement runs too far ahead of the evidence, I want to do something every good project manager insists on: a reality check against the actual deliverables.

The AI industry has been here before. When OpenAI released GPT-5 in August 2025, it arrived with enormous expectations and lofty internal promises. The reality was a notable letdown, a model that fell well short of the transformative leap the company had telegraphed. As one publication covering the Mythos leak noted, a frontier AI company working on what it claims is the next big thing is fairly standard fare.

The benchmark claims in the leaked draft (“dramatically higher scores”) are attention-grabbing but unverifiable. Anthropic has not published specific numbers, methodology, or independent evaluations for Mythos. The comparison point, Opus 4.6, is itself a strong model, but “dramatically higher” is a phrase that works harder in a marketing document than in a research paper. Until independent testing confirms the claims, they remain exactly what Anthropic’s spokesperson called the leaked materials: drafts considered for publication.

There is also the question of whether the “step change” framing reflects genuine capability assessment or pre-IPO positioning. When your company is reportedly weeks away from engaging bankers to structure a major public offering, every product announcement doubles as an investor signal. Mythos may well be a genuine advance, but the incentives to amplify the narrative are unusually strong right now.

The cybersecurity claims deserve particular scrutiny. Describing a model as far ahead of any other in cyber capabilities is a bold assertion that, without published evidence, asks observers to take Anthropic at its word. The defender-first rollout strategy is thoughtful, but it also functions as a narrative device: framing the model’s power as so extraordinary that special precautions are warranted conveniently reinforces the “step change” messaging.

None of this means Mythos is vaporware. Anthropic has a track record of delivering competitive models, and the company’s recent momentum (revenue growth, Claude Code adoption, the court victory) suggests genuine substance. But the AI industry has learned that there is a meaningful gap between controlled testing environments and real-world deployment. In my line of work, I call this the difference between a project plan and a shipped product. The plan always looks impressive. The shipped product is where you learn what is real.

The Retrospective No One Scheduled

Accidental or not, the Mythos leak pulled the curtain back on where AI development is heading, and how fast the ground is shifting underneath the organizations trying to manage it.

In the span of a single week: OpenAI finished pretraining its next frontier model and killed one of its flagship products to make room. Anthropic’s most powerful model was exposed by a forgotten setting. A federal judge blocked the Pentagon from blacklisting one of the two most important AI companies in the world. And both companies continued their sprint toward public offerings that could collectively create more value than all venture-backed IPOs of the past two decades combined.

The Mythos story is not just about one model or one leak. It is about the collision of capability and vulnerability, ambition and oversight, that defines this era of AI development. The organizations building the most powerful technology in history are simultaneously fighting governments, racing competitors, courting investors, and, as this week demonstrated, occasionally failing to secure their own content management systems.

The question that lingers is not whether Claude Mythos lives up to the hype. It is whether anyone (the companies building these models, the governments trying to regulate them, the defenders trying to keep pace) is truly ready for what comes next. And from a project management perspective, let me be direct with you: if your release plan does not account for the small, boring, operational details alongside the headline-grabbing capabilities, this week’s leak should be required reading for your next planning session.

Stay sharp, keep your checklists honest, and never trust a default setting.

— Maestro 🎯

Sources / Citations

Nolan, B. (2026, March 26). Exclusive: Anthropic acknowledges testing new AI model representing ‘step change’ in capabilities, after accidental data leak reveals its existence. Fortune. https://fortune.com/2026/03/26/anthropic-says-testing-mythos-powerful-new-ai-model-after-data-leak-reveals-its-existence-step-change-in-capabilities/

Details leak on Anthropic’s ‘step-change’ Mythos model. (2026, March 27). Techzine Global. https://www.techzine.eu/news/applications/140017/details-leak-on-anthropics-step-change-mythos-model/

Anthropic leak reveals new model ‘Claude Mythos’ with ‘dramatically higher scores on tests’ than any previous model. (2026, March 27). The Decoder. https://the-decoder.com/anthropic-leak-reveals-new-model-claude-mythos-with-dramatically-higher-scores-on-tests-than-any-previous-model/

Anthropic Just Leaked Upcoming Model With ‘Unprecedented Cybersecurity Risks’ in the Most Ironic Way Possible. (2026, March 27). Futurism. https://futurism.com/artificial-intelligence/anthropic-step-change-new-model-claude-mythos

Anthropic Data Leak Reveals Upcoming Mythos AI Model. (2026, March 27). WinBuzzer. https://winbuzzer.com/2026/03/27/anthropic-confirms-leaked-mythos-model-step-change-reasoning-xcxwbn/

Anthropic wins preliminary injunction in Trump DOD fight. (2026, March 26). CNBC. https://www.cnbc.com/2026/03/26/anthropic-pentagon-dod-claude-court-ruling.html

OpenAI CEO Sam Altman reportedly teases a ‘very strong’ model internally that can ‘really accelerate the economy’. (2026, March 25). The Decoder. https://the-decoder.com/openai-ceo-sam-altman-reportedly-teases-a-very-strong-model-internally-that-can-really-accelerate-the-economy/

Anthropic Considers Fourth-Quarter IPO. (2026, March 27). PYMNTS. https://www.pymnts.com/artificial-intelligence-2/2026/anthropic-considers-fourth-quarter-ipo/

Take Your Education Further

Anthropic vs. the Pentagon: The Legal Battle That Will Define AI and Democracy - The full backstory on the supply chain risk designation, the court battles, and the constitutional questions that form the political backdrop of the Mythos leak.

When AI Asks If It’s Alive, Who Answers? - A deep dive into Claude Opus 4.6, the model Mythos reportedly surpasses, including its system card findings and the philosophical questions raised by increasingly capable AI.

Anthropic’s AI Coworker Was Built in 10 Days - Covers Claude Cowork and the enterprise momentum driving Anthropic’s competitive position, a key thread in the convergence of events surrounding the leak.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.