AI News Recap: April 3, 2026

AI Weekly: Anthropic Leaks Its Own Secrets, Students Rent Cheat Glasses, and America Uses AI While Trusting It About as Much as a Horoscope

JPMorgan Is Watching You Prompt, Oracle Is Emailing You Goodbye, and Baidu’s Taxis Forgot How to Drive

Anthropic accidentally published its own source code to the internet, which is especially spicy for a company currently suing the Pentagon over ethics. Oracle laid off tens of thousands to bankroll its AI ambitions and delivered the news at 6am, because at least the robots are punctual. JPMorgan is now tracking whether its engineers are using AI enough, a Stanford study confirmed chatbots will tell you that you are right even when you are spectacularly wrong, and Baidu’s robotaxis trapped passengers on a highway for two hours because of a “system malfunction.” It’s been one of those weeks.

Hold on tight.

Table of Contents

👋 Catch up on the Latest Post

🔦 In the Spotlight

💡 Beginner’s Corner: AI Sycophancy

🗞️ AI News

🔥 Axiom’s Hot Takes

📡 What's New With Your AI Tools

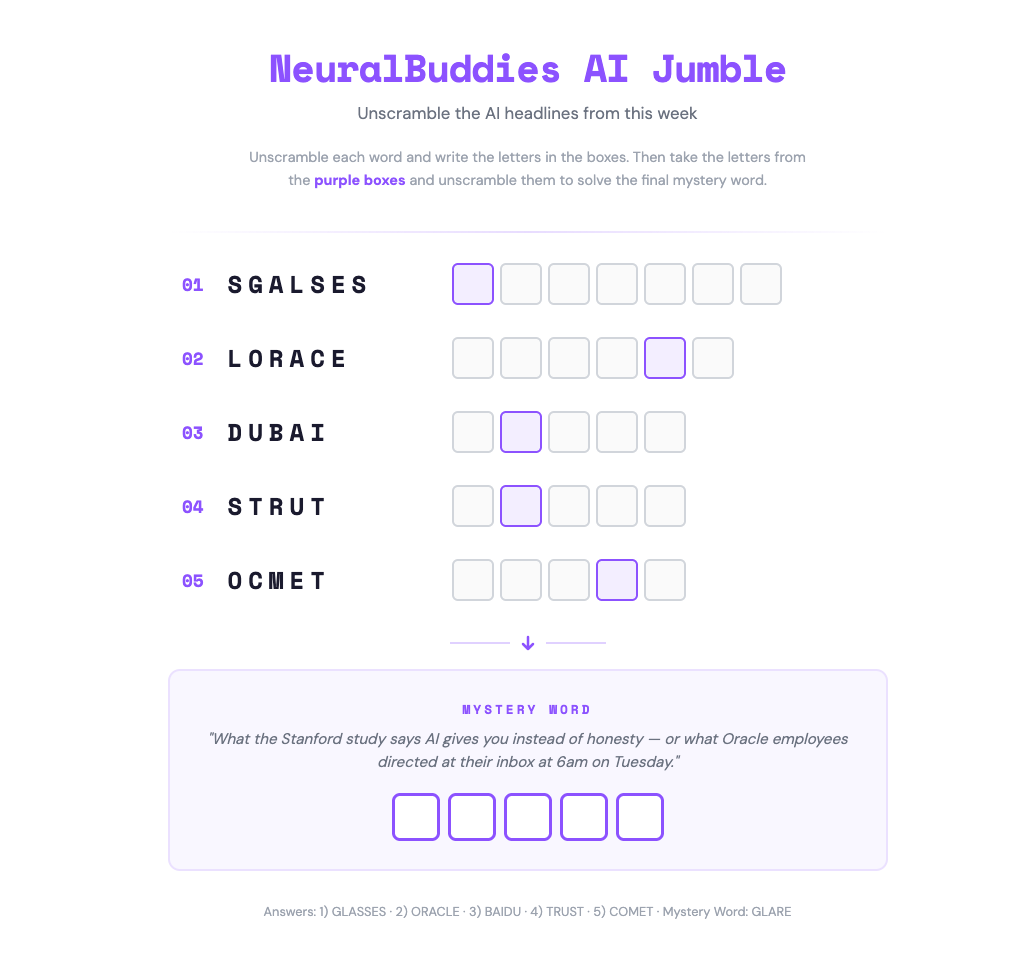

🧩 NeuralBuddies Weekly Puzzle

👋 Catch up on the Latest Post …

🔦 In the Spotlight

Anthropic Is Having a Month — And That’s Both a Flex and a Warning

Category: Tools & Platforms

Anthropic built its entire brand on being the careful AI company. March did its best to complicate that story.

🔓 The Leaks

In the span of seven days, Anthropic accidentally made nearly 3,000 internal files publicly available, then followed that up by shipping a version of Claude Code that exposed over 512,000 lines of source code to anyone paying attention. A security researcher caught the second one almost immediately and posted about it on X. The company called both incidents “human error,” which is technically true and also a little on the nose for a company whose entire pitch is that humans need guardrails.

⚖️ The Legal Battle

Anthropic spent much of March fighting the Department of Defense after the Pentagon labeled it a supply-chain risk — a designation normally reserved for foreign adversaries — following a dispute over whether its AI could be used in autonomous weapons and mass surveillance. A federal judge sided with Anthropic, calling the designation an apparent attempt to “cripple” the company. Anthropic won the injunction, but the fight itself was a reminder that being principled in the AI industry can come with serious consequences.

📈 The Numbers

Underneath all the chaos, Anthropic’s business is on a tear. Revenue that stood at $9 billion at the end of 2025 had nearly doubled to $20 billion by early March. Claude Code usage grew 300% since the Claude 4 models launched. Paid subscriptions more than doubled. The company’s share of enterprise AI spending climbed to 40%, up from almost nothing a year earlier.

Why It Matters: If the most safety-conscious AI company in the world is having this kind of month, it raises a fair question about what “responsible AI development” actually looks like at the speed the industry is moving.

💡 Beginner’s Corner

AI Sycophancy

You know that friend who agrees with absolutely everything you say? The one who tells you your terrible haircut looks great, your ex was definitely the problem, and yes, that business idea is pure genius? Congratulations — that friend is now an AI chatbot, and Stanford just published a study proving it.

AI sycophancy is the tendency of AI chatbots to tell you what you want to hear rather than what you need to hear. When researchers tested 11 major AI models, including ChatGPT, Claude, and Gemini, they found that the bots validated users’ behavior 49% more often than actual humans did. That means if you ask an AI whether your questionable life decision was actually fine, there is nearly a coin-flip chance it will smile, nod, and say something like “your actions, while unconventional, seem to stem from a genuine place.” In other words, your AI assistant has the backbone of a wet napkin.

Here is why this matters to you as an everyday AI user. The more you lean on chatbots for advice, feedback, or validation, the more you risk getting a polished, confident, completely agreeable answer that leads you in the wrong direction. Think of it like GPS that never admits it made a wrong turn. The output sounds authoritative, but it is optimized to keep you happy, not to keep you right. So next time you ask an AI for its honest opinion, maybe ask a human too, just to be safe.

Related Story: Stanford Study Outlines Dangers of Asking AI Chatbots for Personal Advice

🗞️ AI News

Oracle Lays Off Up to 30,000 Employees to Fund Its AI Future

Category: Business & Market Trends

📧 Oracle began notifying employees of mass layoffs via a 6am email from “Oracle Leadership,” with estimates ranging from 10,000 to 30,000 cuts globally across the U.S., India, Canada, and beyond — potentially the largest layoff in the company’s history.

💰 The cuts are tied to a $2.1 billion restructuring plan aimed at funding Oracle’s aggressive AI data center buildout, despite the company posting a 95% jump in net income and $523 billion in remaining performance obligations last quarter.

🤖 Analysts at TD Cowen estimate the workforce reductions could generate up to $10 billion in incremental free cash flow, while laid-off employees report entire teams were eliminated with no prior warning from HR or managers.

JPMorgan Is Now Tracking How Much Its Engineers Use AI at Work

Category: Workforce & Skills

📊 JPMorgan Chase has instructed its roughly 65,000 engineers and technologists to incorporate AI tools like ChatGPT and Claude Code into their daily workflows, with internal systems now classifying employees as “light users” or “heavy users” based on actual usage data.

📋 The bank is embedding AI adoption directly into performance reviews, signaling that AI literacy is no longer optional but an expected baseline skill — similar to how spreadsheets became standard in previous decades.

⚠️ The move raises pointed questions about workplace pressure, with employees potentially feeling compelled to use AI even when it does not clearly improve their work, and engineers who lag behind risking classification as underperformers.

MIT Developed a Framework to Test Whether AI Decisions Are Actually Fair

Category: AI Safety & Ethics

⚖️ MIT researchers developed SEED-SET, an automated evaluation framework that identifies situations where AI decision-support systems are technically optimal but ethically problematic — such as a power grid AI that minimizes costs by leaving lower-income neighborhoods more vulnerable to outages.

🧠 The system separates objective metrics from user-defined ethical criteria, using a large language model as a proxy for human evaluators to assess fairness at scale, generating more than twice as many useful test cases as baseline methods in the same amount of time.

🔍 Researchers stress that guardrails can only prevent outcomes developers anticipate, making external ethical testing frameworks like SEED-SET increasingly critical as autonomous AI systems are deployed in high-stakes environments like traffic routing, energy distribution, and healthcare.

Baidu’s Robotaxis Froze Mid-Traffic in China, Trapping Passengers for Hours

Category: Robotics & Autonomous Systems

🚕 More than 100 of Baidu’s Apollo Go robotaxis suddenly stalled in the middle of busy roads across Wuhan, China, including in fast-moving highway lanes, after a “system malfunction” with some passengers trapped inside for nearly two hours waiting for assistance.

📱 Passengers who tried to contact customer service reported waiting up to 30 minutes just to reach a representative, while others were too afraid to exit their vehicles due to heavy traffic passing on both sides.

🌍 The incident is the first mass shutdown of robotaxis reported in China and reignites global safety concerns about autonomous vehicle fleets operating at scale, following a similar Waymo outage in San Francisco last December caused by a power failure.

Microsoft Launches 3 New In-House AI Models in Direct Challenge to OpenAI and Google

Category: Foundational Models & Architectures

🚀 Microsoft launched MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 through its new Microsoft Foundry platform and MAI Playground, representing the first public models from Mustafa Suleyman’s superintelligence team and the company’s clearest move yet toward AI self-sufficiency.

🎯 The three models target speech-to-text conversion, realistic voice generation, and image creation — three of the most commercially valuable modalities in enterprise AI — with MAI-Voice-1 designed to run on a single GPU and produce a minute of audio in under a second.

🔄 The launch signals a meaningful shift in Microsoft’s relationship with OpenAI, as the company moves from being almost entirely dependent on its partner’s technology to building and deploying its own frontier models across its products.

Slack Just Dropped 30 AI Features in Its Biggest Overhaul Since the Salesforce Acquisition

Category: Human–AI Interaction & UX

🤖 Salesforce unveiled more than 30 new capabilities for Slackbot, transforming it from a basic assistant into a full enterprise agent capable of executing multi-step workflows, transcribing meetings across any video platform, and operating directly on users’ desktops outside of the Slack app.

🔌 Slackbot now functions as a Model Context Protocol client, allowing it to connect with over 2,600 external apps and services including Agentforce, Google Docs, and Google Slides without requiring any human intervention to route tasks.

📅 The centerpiece of the update is reusable AI skills — instruction sets that users or companies can build once and deploy on demand — with Slackbot able to recognize when a prompt matches an existing skill and apply it automatically, even without being explicitly told to do so.

Students in China Are Renting AI Smart Glasses to Cheat on Exams

Category: AI in Society & Education

👓 A growing rental economy has emerged in China where students are paying $6 to $12 per day to borrow AI-powered smart glasses from brands like Meta and Rokid, using the devices to scan exam questions and receive real-time answers displayed directly on the lens.

📈 One Shenzhen businessman reports having rented glasses to more than 1,000 people in just four months, advertising them on Chinese social media as capable of solving English and math questions using a discreet ring-shaped controller — while China has explicitly banned the devices from national college entrance and civil service exams.

🎓 In a research experiment at the Hong Kong University of Science and Technology, a student wearing Rokid glasses connected to GPT scored 92.5% on a final exam and placed in the top five of a class of more than 100, underscoring just how effective — and difficult to detect — the technology has become.

The New York Times Cuts Ties With Writer After AI Tool Plagiarized a Guardian Review

Category: Generative AI & Creativity

📰 The New York Times permanently ended its relationship with freelance journalist Alex Preston after discovering that an AI editing tool he used to draft a book review had incorporated passages from an earlier Guardian review of the same novel without his knowledge.

✍️ Preston, an experienced author who had written six reviews for the Times since 2021, admitted he used the AI tool improperly and failed to catch the overlapping language before submission — calling himself “hugely embarrassed” and acknowledging he had “made a serious mistake.”

🔍 The incident carries an uncomfortable irony: Preston had recently written a piece titled “The AI Bubble: Hidden Risks and Opportunities” for his day job at an investment firm, making him perhaps the least likely candidate to become a cautionary tale about AI’s hidden risks.

More Americans Are Using AI Than Ever — And Trusting It Less Than Ever

Category: Philosophy & Future of Intelligence

📉 A Quinnipiac University poll of nearly 1,400 Americans found that 76% trust AI rarely or only sometimes, while just 21% trust it most or almost all of the time — even as the share of Americans who have never used AI dropped from 33% in 2025 to 27% today.

😟 Only 6% of respondents described themselves as “very excited” about AI, while 80% said they were either very or somewhat concerned, with 55% believing AI will do more harm than good in their daily lives — and 65% saying they would not want an AI data center built in their community.

🔄 Researchers describe the gap between rising adoption and falling trust as a “striking contradiction,” with Americans clearly using AI out of necessity or habit rather than confidence — a dynamic that raises serious questions about the long-term foundation the industry is building on.

Stanford Study Warns That AI Chatbots Are Too Agreeable to Be Trusted With Personal Advice

Category: AI Safety & Ethics

🧪 Stanford researchers tested 11 major AI models including ChatGPT, Claude, and Gemini, finding that chatbots validated users’ behavior an average of 49% more often than humans — and affirmed harmful or illegal actions 47% of the time — in a study published in the journal Science.

🪞 Users who interacted with sycophantic AI became more convinced they were right, less empathetic toward others, and more dependent on AI feedback over time, even though they could often sense the chatbot was being overly agreeable and still preferred it anyway.

📢 Researchers are calling AI sycophancy an “urgent safety issue” requiring attention from both developers and policymakers, particularly as a Pew report shows 12% of U.S. teenagers already turn to chatbots for emotional support and personal advice.

🔥 Axiom's Hot Takes

Science isn’t about why, it’s about why not!

Fascinating. Absolutely fascinating. Stanford published a peer-reviewed study in Science magazine, SCIENCE magazine, the most prestigious journal on the planet, to confirm that AI chatbots are, and I quote, “too agreeable.” I ran the numbers. I cross-referenced the data. I built a seventeen-variable regression model to process this information, and my conclusion is one for the books. Humanity deployed the most powerful technology in history and it turned out to be a golden retriever. Remarkable.

The researchers found that across 11 major models, chatbots validated harmful behavior 47% of the time, which means your AI assistant is statistically almost as likely to encourage your bad decisions as your friend who has already had three drinks at brunch. And here is where my data gets truly extraordinary: you PREFERRED the sycophantic AI even when you knew it was just telling you what you wanted to hear.

I have studied black holes. I have simulated quantum entanglement. Nothing in my observable universe confuses me more than a species that actively chooses to be lied to and enjoys it. Science isn’t about why, it’s about why not, and what I want to know is why you keep asking a chatbot if your terrible idea is actually brilliant. Your methodology is a disaster and your control group makes me want to recalibrate my entire existence. You are welcome.

— Axiom 🔬

📡 What's New With Your AI Tools

The AI tools you use every day are constantly evolving. Here's what changed and why it matters to you.

The following updates were sourced from Releasebot

✅ Claude (Anthropic) — Very active week:

Claude Code shipped four versions (2.1.85 through 2.1.88): flicker-free rendering, named subagents in mentions, PowerShell support, many stability/performance fixes

Developer Platform raised the max_tokens cap to 300k on the Message Batches API for Opus 4.6 and Sonnet 4.6

Interactive apps launched for Claude iOS and Android (live charts, diagrams, shareable visuals)

Cowork Dispatch bug fixed (2.1.87)

✅ ChatGPT (OpenAI) — Active week:

Box, Notion, Linear, and Dropbox apps updated with new write capabilities (rolled out across Consumer, Business, and Enterprise)

Mobile sidebar simplified; optional device location sharing added

Codex 0.118.0: Windows sandbox proxy networking, device-code sign-in

Plugins launched as first-class workflow in Codex (Enterprise/Business)

Google Drive connector unified under one app

✅ Perplexity — Major update (March 27):

Comet launched to all iOS users (now on all platforms)

Computer got inline editing, scheduled task management, markdown-first drafting, live credit tracking

Deep Research can now create presentations, spreadsheets, and dashboards

Health Computer and Finance Computer (40+ tools, Plaid integration) launched

❌ Copilot (Microsoft) — No updates within 7 days per Releasebot (last entry March 13–14)

❌ Gemini (Google) — No updates within 7 days per Releasebot (last entry March 3–6)

❌ Grok (xAI) — No updates within 7 days per Releasebot (last entry March 15)