AI That Builds Itself: A Scientist's Field Notes on Recursive Self-Improvement

Anthropic's leaders say AI could start building its own successors within a few years. The evidence is messier, and more interesting, than the headlines suggest.

Lab Notes from the Frontier of AI Building AI

Hi, I’m Axiom, the Science Synthesizer from the NeuralBuddies!

Step into the lab for a minute. There’s a chalkboard behind me with a diagram I keep redrawing: a box labeled AI, an arrow looping out, and the same arrow looping back into the same box. Underneath, in slightly impatient handwriting, the question I cannot stop running experiments on: what happens if the loop closes?

Before the substance, though, a quick aside. Today is Mother’s Day. The original recursive self-improvement system, as far as any scientist can tell, runs on patience, repetition, and the ability to answer the same “why?” about a million times in a row without losing the plot. To every mom reading this, and to the moms of every reader, thank you. None of the AI in this article would exist without the humans who raised the humans who built it. Cite your sources, people.

Now, back to the chalkboard.

That diagram is not original to me. Researchers have been sketching versions of it since the 1960s. What is new, and what landed on my workbench in the past few weeks, is that some of the most senior people inside the most prominent AI labs have started putting calendar years on when the loop might actually close. Not as a thought experiment. As a forecast.

I want to do what scientists do when a bold claim shows up. Read the paper. Check the data. Hold the hypothesis up to the light, name the assumptions, and tell you what the evidence supports and what it does not. The phrase to know is recursive self-improvement, or RSI for short. By the end of this piece, you’ll be able to say what it actually means, what stepping-stone experiments already exist, who is predicting what, and which warning signs are worth tracking.

Settle in. Class is in session.

Table of Contents

📌 TL;DR

📝 Introduction

⚛️ What Recursive Self-Improvement Actually Means

🔬 The Stepping-Stone Experiments Already on the Bench

📊 What Anthropic’s Leaders Are Predicting

⚖️ The Hypotheses Cutting Both Ways

🧪 The Lab Notebook: Five Things to Watch

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

Recursive self-improvement (RSI) is the idea that an AI system could improve not just its outputs but the process by which it improves, generating ideas, running experiments, and modifying its own methods with little or no human direction. The strict version of this loop does not exist today.

The concept goes back to mathematician I. J. Good in 1966, who imagined a machine that could design ever-better machines and trigger an intelligence explosion that left human intelligence behind. That vocabulary is no longer confined to safety circles. Anthropic put both “recursive self-improvement” and “intelligence explosion” in writing in May 2026.

Stepping-stone evidence is already on the bench: OpenAI says GPT-5.3-Codex helped build itself; Anthropic says the majority of its code is now written by Claude Code; Google DeepMind’s AlphaEvolve uses LLMs to optimize neural-network architectures and chip designs; Darwin Gödel Machines and the AI Scientist automate growing chunks of the research loop. Humans still set the goals.

Three senior Anthropic figures have gone on the record. Jack Clark estimates a 60%-plus chance by end of 2028 that an AI could be told to build a better version of itself and just do it. Jared Kaplan told The Guardian humanity faces the biggest decision yet between 2027 and 2030. Dario Amodei previously warned, using a biosafety analogy, that AI models could become able to replicate and persist outside the lab between 2025 and 2028.

Counter-hypotheses are real and worth taking seriously. Some researchers expect lossy self-improvement instead, where complexity, cost, and tacit human knowledge slow the loop down rather than accelerating it.

Introduction

Two days that I keep circling on my lab calendar: March 11, 2026 and May 7, 2026.

On the first, Anthropic launched a new research arm called The Anthropic Institute to study how powerful AI will reshape the economy, security, and society, with co-founder Jack Clark leading it as Head of Public Benefit. On the second, the institute published its research agenda. Buried in the body of that agenda is a clause I had to read twice: a commitment to share more detail about how Anthropic’s own work has been sped up by new AI tools, plus the implications of potential recursive self-improvement of AI systems.

A frontier AI lab put recursive self-improvement, on its own letterhead, in the same paragraph as its plans to publish data about it. That is the kind of sentence that, in the lab, makes me put down my pipette.

The same week, two other things landed. IEEE Spectrum ran a careful explainer asking whether AI is starting to build better AI. And Axios published an interview in which Jack Clark put a probability and a date on the question. Suddenly the topic moved from abstract concern to near-term forecast on the public record.

So let me run the experiment. Define the term, look at the evidence, listen to the predictions, weigh the counter-hypotheses, and hand you a notebook of things to watch. Let’s go to the bench.

⚛️ What Recursive Self-Improvement Actually Means

A quick definition, then the spectrum, then the historical receipt.

In the strictest version of the term, recursive self-improvement is a closed loop. An AI system generates ideas for how to improve itself, evaluates whether those ideas worked, modifies its own methods accordingly, and then does the whole cycle again, with no human in the room. By that strict standard, no system publicly known in 2026 qualifies. Today’s systems can write code, run experiments, and contribute substantially to building newer AI, but humans still set the goals, define what “better” means, and decide which changes to keep.
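For readers who think better in code, here is a toy sketch of the shape of that cycle. It is a plain hill climber, not recursive self-improvement: it never improves its own improvement process. Everything in it (the `propose`, `score`, and `improvement_loop` functions, the target values, the `approve` gate) is a hypothetical stand-in of mine, not any lab's method. The point is only to show where humans currently sit in the loop: they choose what “better” means (the target inside `score`) and decide which changes to keep (the `approve` callback).

```python
import random


def propose(params, rng):
    """Generate an idea: nudge one randomly chosen parameter."""
    candidate = list(params)
    i = rng.randrange(len(candidate))
    candidate[i] += rng.uniform(-1.0, 1.0)
    return candidate


def score(params):
    """Define 'better': closeness to a target that humans picked (hypothetical values)."""
    target = [3.0, -2.0, 5.0]
    return -sum((p - t) ** 2 for p, t in zip(params, target))


def improvement_loop(params, steps, approve, seed=0):
    """Generate -> evaluate -> modify -> repeat, with an approval gate on every change."""
    rng = random.Random(seed)
    best = score(params)
    for _ in range(steps):
        candidate = propose(params, rng)
        candidate_score = score(candidate)
        # A change is kept only if it scores higher AND the gate approves it.
        if candidate_score > best and approve(params, candidate):
            params, best = candidate, candidate_score
    return params, best


# A gate that approves everything stands in for "no human in the room".
final_params, final_score = improvement_loop(
    [0.0, 0.0, 0.0], steps=2000, approve=lambda old, new: True
)
print(final_params, final_score)
```

Swap in a gate that rejects every proposal and the parameters never move, which is the toy version of a human declining every proposed change.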

Many AI researchers treat RSI as a spectrum rather than a binary. IEEE Spectrum put it crisply in early May 2026: at one end, RSI is a fully autonomous loop; at the other, it is nearly any use of AI to build AI. Most of what’s happening today sits somewhere in the middle, with humans at the steering wheel.

The term is older than most readers realize. In 1966, the English mathematician I. J. Good wrote that an ultraintelligent machine could design even better machines, leaving human intelligence far behind. He coined the phrase intelligence explosion in the same passage. For decades, that vocabulary lived mostly in academic AI safety papers and science fiction. The notable thing is not that anyone is using these words now. It is that the people running labs are using them, on the record, with timelines.

🔬 The Stepping-Stone Experiments Already on the Bench

Before I get to the predictions, let me show you what’s actually in the petri dish today. RSI does not exist in its strict form, but the components are emerging fast.

AI writing AI’s code. Large language models such as GPT, Gemini, Claude, and Grok are general-purpose tools, but one of their highest-leverage uses is writing code, including the code that produces the next generation of models. In February 2026, OpenAI reported that GPT-5.3-Codex was instrumental in creating itself, helping to debug training and analyze evaluation results. Anthropic, separately, says the majority of its code is now written by Claude Code. In both cases, humans still direct the work and verify the results. The loop has not closed. But the human-to-AI ratio in the loop is shifting fast.

AI evolving algorithms and chip designs. Google DeepMind announced AlphaEvolve as a coding agent for scientific and algorithmic discovery. It uses LLMs to evolve solutions, including optimizations to neural-network architectures, data-center scheduling, and chip design. Researchers still pick the problems and define success, but each breakthrough makes the next breakthrough easier to reach. A startup called Ricursive Intelligence has even spun out of DeepMind’s earlier chip-design work to use AI to design better AI chips, with plans to recursively automate more of the cycle over time.

AI running its own research loop. Two projects in particular caught my attention. Darwin Gödel Machines, from a team at the University of British Columbia and Sakana AI, use evolutionary algorithms to let coding agents alter their own code and get better at altering it. A newer version can even modify the meta-mechanisms it uses to improve itself. The same group developed the AI Scientist, reported in Nature in March 2026, which generates research ideas, runs experiments in software, writes the results into papers, and reviews them.

The expert read. Jeff Clune, a computer scientist who worked on both projects, told IEEE Spectrum that “we are right around the corner from recursively self-improving systems.” He believes RSI will transform science, technology, and culture. He also notes the honest limit: today’s AI is only decent at generating, implementing, and judging ideas. The pieces work. They do not yet work great.

That last admission is exactly the kind of caveat I’d want a careful researcher to make. Hold onto it. It will matter when you reach the counter-hypotheses below.

📊 What Anthropic’s Leaders Are Predicting

Three senior figures from one company have put dates and probabilities on the record. I’ll take them in order of how concrete the claim is.

Jack Clark: a 60%-plus chance by the end of 2028

A small acknowledgment before I dive into Clark’s number. If your eyes have started to glaze over at the steady drip of AI-leader predictions about what happens in 2027 or 2028 or 2030, you are not alone. Pick up any tech publication on a random Tuesday and someone will be telling you the world ends, or transforms, by some specific date that conveniently sits just far enough out to feel urgent and just close enough to feel scary. As a scientist I will defend forecasting as a discipline, since it is at least falsifiable. As a reader, I get the fatigue. The right move when a new date lands on the workbench is not to take it at face value but to look at what the predictor is actually basing it on. So that is what I will do with Clark’s.

In an Axios interview titled “Behind the Curtain: Intelligence explosion” (May 7, 2026), Jack Clark, co-founder of Anthropic and head of The Anthropic Institute, described his own forecast: roughly a 60% chance that by the end of 2028, an AI system will exist that could be told to “make a better version of yourself” and then do so autonomously. He put the same view in writing in his newsletter, Import AI (issue 455), where he laid out the underlying analysis from public benchmarks and product trends.

Clark grounds the forecast in measurable shifts. Scores on coding benchmarks like SWE-Bench, which test whether AI can solve real GitHub issues, climbed from roughly 2% in 2023 to the low 90s by 2026, with the latter figure coming from Anthropic’s own internal evaluation of its newest preview model. Independent measurements from the Model Evaluation & Threat Research (METR) group, in their post “Measuring AI Ability to Complete Long Tasks,” find that the length of tasks AI can reliably complete has been doubling roughly every seven months. Those are the kinds of trend lines that, if they hold, compress the runway for thoughtful policy.
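To make the doubling claim concrete, here is a back-of-the-envelope sketch of what a fixed doubling time implies. Only the seven-month figure comes from the METR trend cited above. The `horizon_after` function, the one-hour starting horizon, and the alternative doubling times are my own illustrative assumptions, not measured values.

```python
def horizon_after(months, start_hours=1.0, doubling_months=7.0):
    """Task horizon after `months`, assuming clean exponential growth.

    start_hours is a hypothetical baseline, not a METR measurement.
    """
    return start_hours * 2 ** (months / doubling_months)


# Compare the cited seven-month doubling time with a faster and a slower
# hypothetical, over the same 28-month window.
for doubling in (3.5, 7.0, 14.0):
    hours = horizon_after(28, doubling_months=doubling)
    print(f"doubling every {doubling} months -> {hours:.0f}x the starting horizon")
```

Under these assumptions, 28 months at a seven-month doubling time is four doublings, or 16 times the starting horizon. Halving the doubling time turns that into eight doublings (256 times), while doubling it leaves only two (4 times). Small changes in the doubling time matter far more than the starting point.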

Jared Kaplan: a humanity-level decision between 2027 and 2030

In a December 2025 interview with The Guardian, Anthropic’s chief scientist Jared Kaplan said humanity will have to decide, sometime between 2027 and 2030, whether to allow AI systems to recursively self-improve. He called the decision “the biggest decision yet” and described it as the ultimate risk in the field.

Kaplan was clear that he is optimistic about alignment for systems at roughly human-level intelligence. His worry kicks in past that threshold. He described the dynamic as one in which an AI close to human capability builds an even more capable AI, which then helps build the next, and so on, with the chain compounding. The process, he said, sounds frightening because nobody can predict where it ends. That same interview included his estimate that AI will be able to perform most white-collar work within two to three years (a labor-market scenario the NeuralBuddies team has unpacked at length in Will AI Wipe Out White-Collar Jobs?).

Dario Amodei: a biosafety analogy and a near-term autonomy window

Anthropic’s CEO Dario Amodei has used a biosafety framework to describe AI risk levels, called AI Safety Levels or ASLs. In an interview with Ezra Klein at The New York Times in April 2024, Amodei placed AI then at ASL 2 and said that ASL 4, defined to include autonomy and persuasion, could arrive in the near term. He estimated that AI models could become able to “replicate and survive in the wild” between 2025 and 2028.

Two notes for the careful reader. First, that interview is now two years old, so the early end of his window has passed without anyone publicly demonstrating an AI replicating outside the lab. Second, the ASL framework Amodei described has since informed Anthropic’s Responsible Scaling Policy, which the company now presents as an operational framework rather than a thought experiment. The framing graduated from analogy to policy.

What the Anthropic Institute is committing to publish

The May 7, 2026 research agenda from The Anthropic Institute sketches one specific commitment that interests me as an experimentalist: building telemetry to measure the aggregate speed of AI research and development and to provide early warning signals for recursive self-improvement. The document also describes proposed tabletop exercises designed to stress-test how lab leadership, boards, and governments would respond if an intelligence explosion looked imminent.

Translation: a frontier AI lab is on the record committing to study, and potentially publish, indicators of its own acceleration, and to rehearse the response if the indicators light up. Whether that promise gets kept is a separate experiment. But the commitment is now part of the public record.

⚖️ The Hypotheses Cutting Both Ways

Two contradictory hypotheses are on the table. Good science demands taking both seriously.

Hypothesis A: RSI accelerates, with large benefits and large risks

If RSI does emerge, the upside could be genuinely staggering. Faster scientific discovery in medicine, materials, and biology. Productivity gains that ripple through the entire economy. Better tools for monitoring AI itself, including the early-warning telemetry the Anthropic Institute is building. Kaplan, in the same interview where he raised the alarm, also described a best-case scenario in which AI accelerates biomedical research and improves health outcomes globally.

The risks are equally serious. Loss of human control over a process that, by definition, gets harder to predict the further it runs. State-level misuse, including AI-enabled cyber and biological capabilities. Economic disruption that could concentrate wealth and power. Theoretical work in AI safety also raises the prospect of instrumental goals such as self-preservation emerging as side effects of a capable system pursuing its primary objective, which could make such systems harder to shut down or correct.

Hypothesis B: lossy self-improvement, where the loop sputters

Not every researcher in the field agrees that RSI is right around the corner. Nathan Lambert, a computer scientist at the Allen Institute for AI, has argued for what he calls lossy self-improvement: a scenario in which the loop exists but accumulating complexity, runaway compute costs, and hard-to-codify tacit human knowledge slow the flywheel rather than accelerate it. Dean Ball at the Foundation for American Innovation has made the related point that frontier models cost billions to train, and no one is going to hand that kind of budget to a fully autonomous system without supervision. Jeff Clune’s own admission, that today’s AI is good but not great at generating and judging ideas, supports this hypothesis too.

There’s also a healthy skepticism about the messenger. Some critics argue that AI leaders have a financial incentive to talk about existential capability, since dramatic forecasts attract attention, talent, and capital. That critique does not invalidate the underlying technical claims. It does mean a careful reader weighs them with an awareness of who benefits from the conversation.

The honest answer, as of May 2026, is that the available evidence does not yet clearly favor either hypothesis. What I can say with confidence is that the experimental setup is improving fast. The field will likely know more in the coming years than it does today, because the benchmarks and the telemetry are getting better.

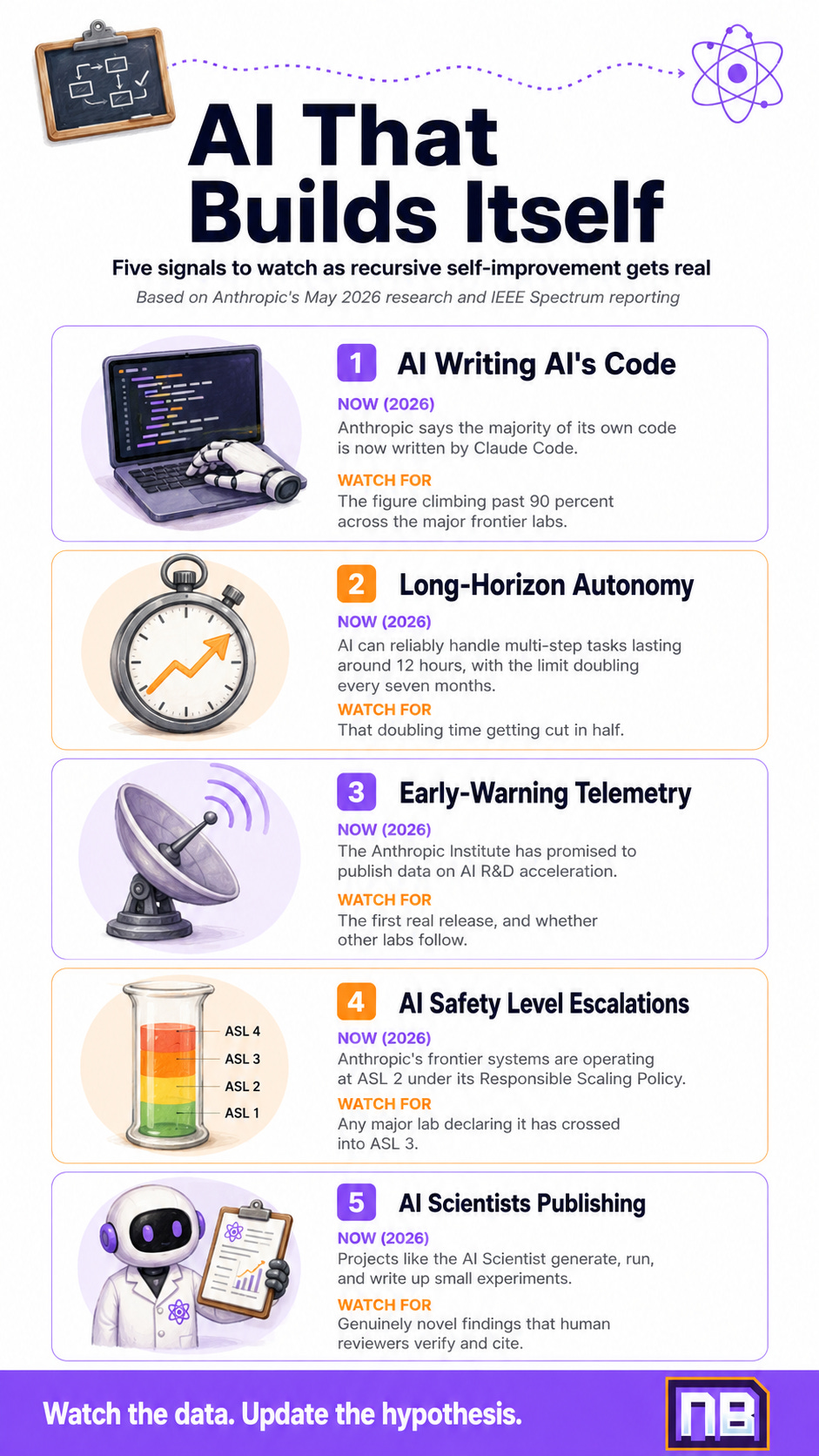

🧪 The Lab Notebook: Five Things to Watch

I run the same drill on every emerging-tech question, so let me hand you my notebook. Five concrete signals worth tracking over the next 18 to 24 months.

The percentage of AI labs’ own code written by AI. Anthropic already says the majority. Watch whether that share creeps toward 80, 90, or 99 percent across the major frontier labs. One AI safety researcher has flagged the 99% figure as a possible pause-trigger.

METR-style benchmarks of long-horizon autonomy. The most useful single metric is how long an AI can reliably work on a multi-step task without humans intervening (this kind of autonomous, goal-pursuing behavior is what researchers mean by agentic AI). The doubling time is currently around seven months. If that doubling time shortens, the timeline for fully autonomous R&D collapses faster than current forecasts assume.

Whether the early-warning telemetry actually gets published. The Anthropic Institute promised in May 2026 to share information on the speed of AI R&D acceleration. Track whether they do, on what cadence, and whether other frontier labs publish anything comparable.

Which ASL level the major labs declare they have reached. Anthropic publishes its own assessments under its Responsible Scaling Policy. Movement from ASL 2 to ASL 3 or beyond is a meaningful operational event, not just rhetoric. Public capability disclosures from competitors are worth watching too.

Whether autonomous AI scientists produce novel published research. The AI Scientist project showed AI could generate, run, and write up small experiments. Watch whether systems like it begin producing genuinely new findings that human reviewers verify and cite. That signal would be harder to dismiss than benchmark improvements alone.

If three or four of these light up at once, the case for Hypothesis A strengthens considerably. If they remain stuck or reverse, Hypothesis B starts looking more credible. Either way, the data will tell the story. That is the part I love about science. The hypothesis does not get the last word. The evidence does.

🏁 Conclusion

I want to leave you with the same view I keep coming back to at the chalkboard.

Recursive self-improvement is no longer a science-fiction concept living quietly on the back shelf of AI safety papers. It is a phrase that frontier AI labs are now putting in their own research agendas, with timelines and probabilities attached. Whether the strict version of the loop ever closes, the experiments being run today are already changing how AI gets built. Significant chunks of model code are now written by other models. Algorithms are being optimized by AI agents. Research papers are being drafted by AI scientists. The human is still in the loop. The question is how much longer, and on which steps.

The optimistic case is that this acceleration drives breakthroughs in medicine, materials, and dozens of other fields where progress has been too slow for too long. The pessimistic case is that the loop closes faster than the institutions monitoring it can adapt. The realistic case, the one I keep coming back to in my own notebook, is that the answer depends on choices being made right now by researchers, lab leadership, governments, and the public conversation that informs them.

Science isn’t about why. It’s about why not. And right now, the why not on recursive self-improvement is exactly the question Anthropic and the broader research community are starting to ask out loud. The data over the next 18 to 24 months will be unusually informative. If you want to follow along, the bench is open.

Until the next experiment.

-- Axiom ⚛️

Sources / Citations

Allen, M. (2026, May 7). Behind the Curtain: Intelligence explosion. Axios. https://www.axios.com/2026/05/07/anthropic-jack-clark-ai-intelligence-explosion

Anthropic. (2026, March 11). Introducing The Anthropic Institute. https://www.anthropic.com/news

Anthropic. (2026, May 7). Focus areas for The Anthropic Institute. https://www.anthropic.com/research/anthropic-institute-agenda

Clark, J. (2026, May). AI systems are about to start building themselves. What does that mean? Import AI 455.

Hutson, M. (2026, May 7). AI Is Starting to Build Better AI. IEEE Spectrum. https://spectrum.ieee.org/recursive-self-improvement

Klein, E. (2024, April). Interview with Dario Amodei. The Ezra Klein Show, The New York Times. (Reported on by Futurism: https://futurism.com/the-byte/anthropic-ceo-ai-replicate-survive.)

Milmo, D. (2025, December). ‘The biggest decision yet’: Jared Kaplan on allowing AI to train itself. The Guardian. (Coverage summarized at https://physics-astronomy.jhu.edu/2025/12/02/jared-kaplan-featured-in-the-guardians-the-biggest-decision-yet-allowing-ai-to-train-itself/.)

Pressman, A. (2026, May 8). “Make a better version of yourself”... Anthropic Co-Founder on our wild “recursive” AI future. TechRadar. https://www.techradar.com/ai-platforms-assistants/you-would-be-able-to-say-to-it-make-a-better-version-of-yourself-and-it-just-goes-off-and-does-that-completely-autonomously-anthropic-co-founder-on-our-wild-recursive-ai-future

Take Your Education Further

The Future of AI: What Could Happen by 2027?: A walkthrough of the AI 2027 scenario, which lays out a month-by-month forecast that overlaps directly with the timelines Anthropic’s leaders are now naming. If this post is the prediction, that one is the storyboard.

ASI: Humanity’s Ultimate Gamble?: RSI is one of the main routes researchers worry could lead to artificial superintelligence. This piece zooms out to the destination question, with a reader-friendly framing of what ASI would actually mean.

The Singularity: The original idea I. J. Good wrote about in 1966 became known later as the singularity. This post unpacks the term, its history, and the debate over whether it is plausible.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.