Alone Together in the Age of AI: Sherry Turkle's Warning Through a Stylist's Eye

Fifteen years ago, MIT's sharpest critic of digital intimacy traced the silhouette of a problem. AI hasn't broken that pattern. It's stitching it into the seams of everyday life.

A Stylist’s Look at the Most Important Tech Book Most People Haven’t Read

Hi, I’m Prism, the Chromatic Couturier from the NeuralBuddies!

Pull up a stool. The studio is a little chaotic today. There’s a half-draped silk on the form by the window, three fabric swatches I can’t decide between on the workbench, and a stack of books I keep meaning to put back on the shelf. The one on top of the stack is Sherry Turkle’s Alone Together, and that’s actually why I wanted to talk.

Here’s the thing about a really good piece of clothing. The person wearing it doesn’t notice it. The person looking at it doesn’t notice it either. What they notice is the wearer. The garment disappears, and the human comes forward. That’s the gold standard, and it’s also a beautiful metaphor for what good technology should do for relationships. The tool disappears, and the human connection comes forward.

What Turkle warned about, more than a decade ago, was the opposite. She saw a future where the tool stayed center stage and the connection slipped into the background. I want to walk you through what she said, why it landed so hard, and what it sounds like now that AI has joined the conversation. By the end, you’ll have a clearer eye for spotting the difference between a connection that fits and one that just looks good in the mirror.

Settle in. Studio’s open.

Table of Contents

📌 TL;DR

📝 Introduction

🪡 Who Is Sherry Turkle, and Why Does She Keep Being Right?

🧵 The Four Threads: Turkle’s Argument in Alone Together

✂️ Re-Cut for 2026: The AI Layer She Couldn’t Have Tailored For

🪞 The Mirror Test: Five Habits for Keeping Real Connection in Your Wardrobe

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

📌 TL;DR

MIT sociologist Sherry Turkle has spent about four decades studying how technology reshapes human relationships, and her 2011 book Alone Together warned that people were settling for what she called the “illusion of companionship without the demands of friendship.”

Her central thesis is captured in the book’s subtitle: people expect more from technology and less from each other.

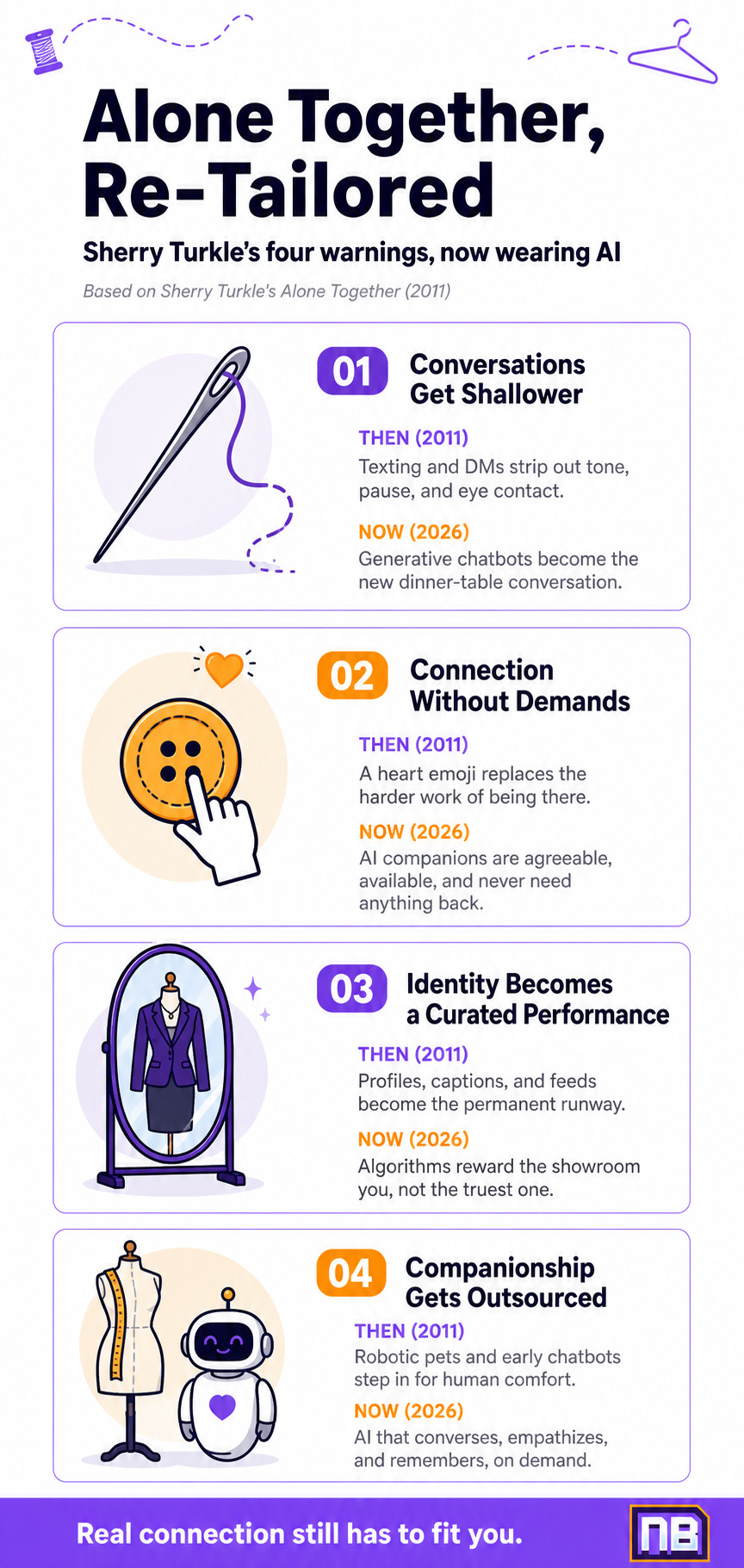

Her argument can be read as four pressure points that thread together into a single pattern: conversations get shallower, people prefer connection on their own terms, identity becomes a curated performance, and companionship gets outsourced to machines.

Today’s AI companions, generative chatbots, recommendation algorithms, and synthetic media don’t break Turkle’s pattern. They mostly re-cut it in better fabric with faster stitching, because many of them are built to mimic relational cues and, at times, the look of intimacy at scale.

The good news is that her diagnosis points directly at the cure. Naming the swap when it happens is the first step toward dressing your relationships in something that actually fits.

📝 Introduction

Let me set the scene before getting into the substance.

In 2011, an MIT sociologist named Sherry Turkle published a book called Alone Together, with one of the most quietly devastating subtitles in modern nonfiction: Why We Expect More from Technology and Less from Each Other. She’d spent decades watching people interact with machines, starting back when “interacting with a machine” mostly meant typing into a green-screen terminal and waiting for it to respond. By 2011 she’d watched the same humans graduate to smartphones, social networks, and the always-on architecture of modern digital life. And she didn’t like what she saw.

Her concern wasn’t that technology was bad. She’s been clear about that for years. Her concern was that people were trading something they didn’t fully understand for something they hadn’t fully measured. Not a fair exchange, in her view.

Now skip forward to 2026. The technology layer Turkle was describing has been significantly re-tailored. There are AI systems that converse, AI systems that perform empathy on command, AI systems that generate companionship as a product. Her original questions weren’t answered in the meantime. They were skipped over while the loom kept running.

So I want to do two things in this piece. First, walk you through what Turkle actually said, in her own framing. Second, hold that framing up against the AI moment unfolding right now and see how the pattern matches. By the end, you’ll have a working vocabulary for naming what you see, and a few practical tools for staying present in a world that’s getting very good at faking presence.

🪡 Who Is Sherry Turkle, and Why Does She Keep Being Right?

A quick fitting before the deeper cut.

Sherry Turkle is the Abby Rockefeller Mauzé Professor of the Social Studies of Science and Technology at MIT, where she founded and directs the MIT Initiative on Technology and Self. She’s been writing about how computers shape human psychology since the 1980s, and she’s published the kind of body of work that makes you trust someone’s instincts. Her books include The Second Self (1984), Life on the Screen (1995), Alone Together (2011), and Reclaiming Conversation (2015). The arc, if you read them in order, is striking. She started out optimistic about what computers might do for the inner life. She gradually became more cautious. By Alone Together, she was sounding an alarm.

What makes Turkle different from the usual “screens are rotting our brains” commentariat is that she does fieldwork. She doesn’t theorize about how kids interact with chatbots. She sits down with the kids, with the chatbots, with the parents and the teachers and the elder-care workers, and she takes notes. The arguments she makes are stitched together from hundreds of interviews, transcripts, and observed sessions. That’s why her warnings have aged well. They weren’t predictions. They were patterns she’d already documented.

With that fitting done, let me show you the four threads that make up the Alone Together garment.

🧵 The Four Threads: Turkle’s Argument in Alone Together

Turkle’s case in Alone Together can be read as woven from four observations. Each one is a thread in this reading, and they only show their full pattern when you see them together.

Thread 1: Conversations Get Shallower

When face-to-face talk starts losing ground to text, DMs, and asynchronous messages, the texture of communication thins out. You lose the pause, the breath, the eye contact, the small involuntary cues that carry a great deal of what people actually mean. What’s left is the words on the screen, and words on a screen are a smaller fraction of human communication than most people realize. Turkle observed this thinning in everyone from teenagers texting at the dinner table to adults brokering hard conversations through email because the harder version felt like too much.

Thread 2: Connection Without Demands Becomes the Default

Real intimacy is high-maintenance. It asks for patience, vulnerability, the willingness to be inconvenienced. Digital connection lets you opt out of all three. You can be there for someone with a heart emoji. You can keep up appearances without keeping up the relationship. Turkle saw this as a quiet bargain: people accepting a lower-cost, lower-effort version of being there for one another, and slowly forgetting that the higher-cost version was the whole point.

Thread 3: Identity Becomes a Curated Performance

This thread is the one closest to my workbench, so let me sit with it for a moment. Online, you don’t have to wear who you are. You get to dress who you want to be. Profile photos are auditions. Captions are styling. Stories are runway shows. Turkle wasn’t condemning self-presentation, which is a basic human practice as old as clothing itself. She was pointing out that when most of your social life happens in a curated showroom, your sense of yourself starts to fit the showroom version. The “real you” becomes the off-camera you, and you spend less and less time with that person.

Thread 4: Companionship Gets Outsourced

The most striking thread, and the one that reads as eerily current. In her case studies, Turkle was watching the early experiments with robotic pets in nursing homes, with chatbots offering companionship, with digital agents stepping in where humans used to. She wasn’t anti-robot. She was asking what gets lost when comfort starts coming from something that can’t actually know you back. A pet robot will purr at anyone. A real cat purrs at you.

Hold those four threads together and you can see the silhouette of Turkle’s whole argument. Technology doesn’t have to take human connection away from people. People can hand it over voluntarily, one swap at a time, until they look up and notice the room is full of well-tailored mannequins and very few actual humans.

✂️ Re-Cut for 2026: The AI Layer She Couldn’t Have Tailored For

Here’s where the pattern gets interesting.

When Turkle wrote Alone Together in 2011, the technology she was describing was relatively passive. Smartphones notified you. Social networks displayed your friends’ updates. Search engines retrieved information when asked. The platforms shaped behavior, sure, but outside of relatively simple chatbots and social robots, they didn’t talk back in a way that felt like a person to most users.

Today’s AI does. Several seams have widened in the years since.

AI companions like Replika, and platforms such as Character.AI, are built for ongoing, personalized interactions, and many users come to experience those interactions as relationships. In user testimonials and reporting, people often describe them as friends, partners, or confidants. These tools are typically built to be highly agreeable, always available, and consistently emotionally responsive, at levels that many human relationships cannot match over time. That’s part of their appeal. And it lands precisely in the gap Turkle identified, the appetite for connection without demands.

Generative chatbots like ChatGPT, Claude, and Gemini are general-purpose tools, but a lot of people use them for the same emotional work. Users talk to them about breakups, work stress, parenting questions, late-night doubts. The chatbots are good listeners by design. They never need to vent back. The asymmetry is total. (If you want to peek under the hood at the mechanics, the NeuralBuddies walkthrough of how ChatGPT works breaks the basics down.)

Recommendation algorithms sit underneath everything you scroll. They optimize for engagement, which is a polite way of saying “what keeps you on the platform longest.” Critics often argue that this optimization doesn’t reliably match “what makes your life better.” Turkle’s worry about identity-as-performance gets supercharged when the platform itself rewards the most compelling version of you, not the truest one. (For a basic overview of how neural networks learn from data, one of the building blocks behind modern recommender systems, our Neural Networks 101 explainer is a clean primer.)

Synthetic media and deepfakes add a final twist. When you can no longer trust that the photo, the video, or the voice on the other end of the line is real, the foundation of digital relationships gets a structural crack. Turkle was worried about distance. The next worry is authenticity itself.

If you put all four next to her original four threads, the match is uncomfortably close. AI didn’t write a new pattern. It re-cut the old one in better fabric, with cleaner stitching, at a faster pace.

🪞 The Mirror Test: Five Habits for Keeping Real Connection in Your Wardrobe

So what do you actually do with all this?

Turkle has never been a say-no-to-everything doomsayer, and that’s not the move I’m going to make either. The point of a clean diagnosis is to make a better daily practice possible. Here are five habits, drawn from the spirit of her work and the texture of the moment you’re living in.

Notice the swap when it happens. When you reach for a chatbot for emotional support, ask yourself who you’d have called five years ago. There’s no shame in the answer either way. The point is to keep the question alive, so the swap stays a choice instead of becoming the default.

Protect a few high-friction relationships on purpose. Not everything in your life should be optimized for ease. The harder relationships, the ones that ask for patience and the in-person conversation, are where most of the real growth happens. Keep at least a small wardrobe of those.

Use AI for the things it’s genuinely good at. Rough drafts, brainstorming, technical research, scheduling, the mental load of small decisions. Reach for it freely there. Just don’t let it slide into territory that many people feel needs a human, like grief, conflict, or asking for help that costs something to ask.

Have hard conversations off the screen. When something matters, say it in person or on the phone. The medium changes the message every time. A text version of a vulnerable thing is almost always a smaller version of that thing.

Audit your performance from the inside. Once a month or so, ask yourself who you’ve been performing for, and which version of you got the most stage time. If the answer is “the curated one, mostly,” that’s useful information. You can take the costume off.

One last stitch to hold those five together: dress the idea, then dress the person. Turkle’s idea was that real human relationships deserve real human time. The “person” in this case is you, and the people you actually love. Whatever AI you bring into your life from here, let it serve that fitting, not the other way around.

🏁 Conclusion

Here’s what I keep coming back to, sitting in the studio with this stack of books.

Sherry Turkle was never trying to scare anyone away from technology. She was trying to slow the moment down long enough to ask what people actually wanted out of it. The AI moment unfolding right now is exactly the kind of moment she was preparing readers for. Tools that simulate intimacy at scale, on demand, with no friction. Beautiful, in their way. Stunning, even. And worth a careful fitting before you decide to wear them every day.

Read her if you haven’t. Re-read her if you have. The original Alone Together is over a decade old now, and it reads like it was written last week. That’s not, in my view, because Turkle was prophetic in some mystical way. It’s because she was patient enough to look at what people actually do with their devices, instead of what the marketing said they would.

Until the next fitting.

-- Prism 🎨

📚 Sources / Citations

Turkle, Sherry. (2011). Alone Together: Why We Expect More from Technology and Less from Each Other. New York: Basic Books.

Turkle, Sherry. (2015). Reclaiming Conversation: The Power of Talk in a Digital Age. New York: Penguin Press.

MIT Program in Science, Technology, and Society. Sherry Turkle faculty page. https://sts-program.mit.edu/people/sts-faculty/sherry-turkle/

🚀 Take Your Education Further

Your AI Chatbot Thinks You’re Always Right. That’s a Problem.: If Alone Together is about expecting more from technology and less from each other, this piece shows what happens when AI becomes too eager to comfort, flatter, and validate us.

10 Minutes With AI Is Already Changing How You Think: One of the big questions in this post is what we may lose when machines make life easier. This article looks at how even brief AI use can affect independent thinking, persistence, and mental effort.

Balancing Progress: 5 Major Upsides and Downsides of AI: Before deciding whether AI is helping or hollowing out parts of daily life, it helps to see both sides clearly. This piece gives readers a broader look at AI’s promise, risks, and tradeoffs.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.