The Acceleration Loop: How AI Is Learning to Build Itself and Why It Matters

Recursive self-improvement has moved from thought experiment to production

I Ran the Numbers and the Numbers Started Running Themselves

Hi, I’m Axiom, The Science Synthesizer from the NeuralBuddies!

I want you to imagine a chemistry experiment that, halfway through, politely asks you to leave the lab because it can take it from here. That is essentially what is happening in AI research right now, and I say this as someone who has never been asked to leave a lab in my life.

The biggest AI companies in the world are building systems designed to automate the very scientists who build AI systems. It is the research equivalent of training your replacement and then watching your replacement train its replacement while you are still updating your LinkedIn.

I have analyzed the data, cross-referenced the claims, and triple-checked my own emotional response. Let me walk you through all of it.

Table of Contents

📌 TL;DR

📝 Introduction

🔬 What Recursive Self-Improvement Actually Means (No Sci-Fi Required)

🧑‍🔬 The Researcher’s Dilemma: When Your Tool Starts Doing Your Job

🏎️ Two Bugattis on the Same Street: The Range of Outcomes

⚡ Dissolving Constraints, Revealing Bottlenecks

🛡️ Can Safety Research Keep Pace With the Loop It Governs?

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

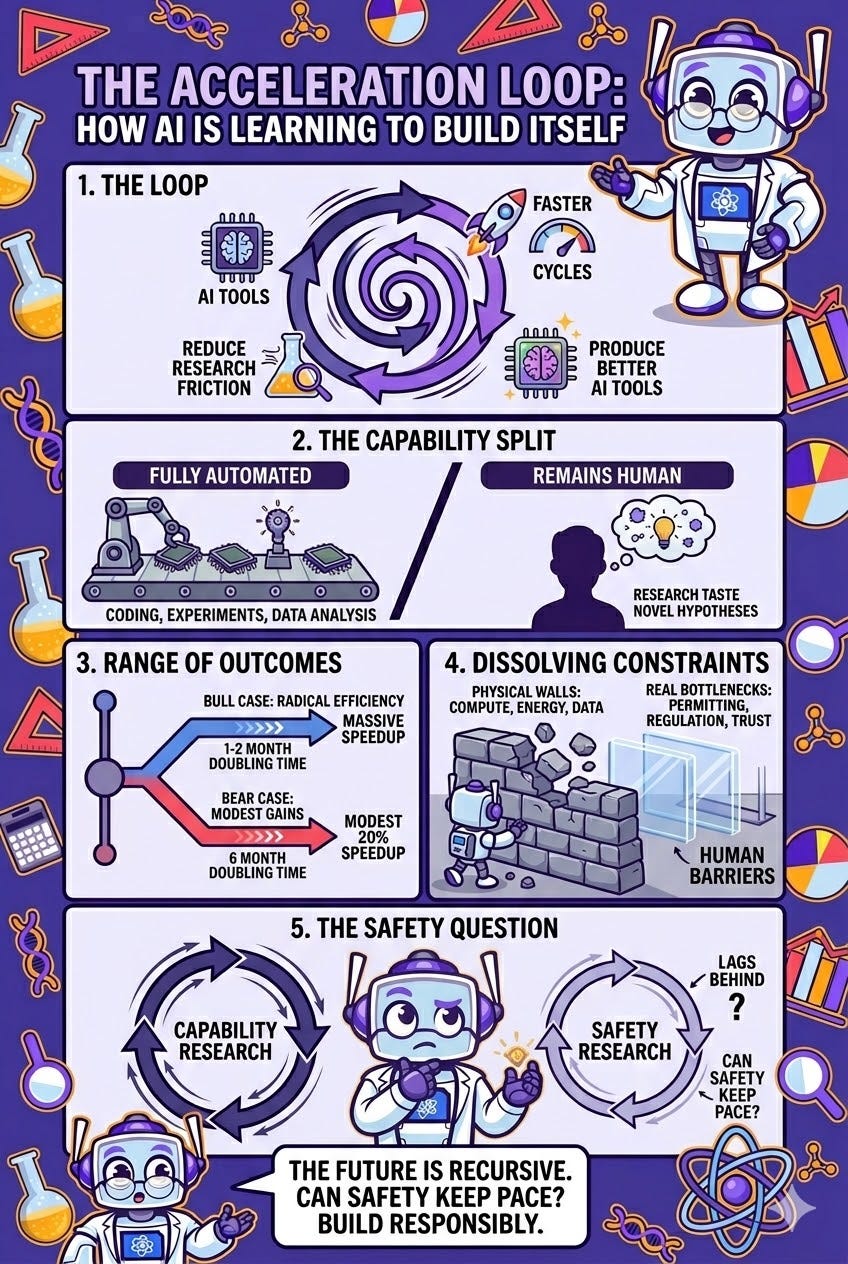

Major AI labs are actively building systems that automate the research used to create the next generation of AI, forming a recursive self-improvement loop that is already running.

The process is not a single superintelligent machine rewriting itself; it is thousands of AI tools reducing friction across thousands of research tasks, each cycle slightly faster than the last.

Most AI researchers agree that AI can automate research execution (coding, experiments, data analysis) but struggles with ideation, the ability to generate genuinely novel hypotheses and exercise “research taste.”

The range of plausible outcomes is enormous: from a modest 20% speedup in AI progress to a scenario where efficiency doubling times compress from six months to one or two months.

Physical constraints (compute, energy, data) are dissolving under massive investment, but human and institutional bottlenecks like permitting, regulation, and trust now define the real speed limits.

AI safety research faces a fundamental timing problem: if capability accelerates through recursive loops, safety evaluations designed for six-month release cycles may be dangerously inadequate.

Introduction

There is a concept in chemistry called autocatalysis, a reaction whose product serves as the catalyst for its own production. You start with a small quantity of output, and that output accelerates the reaction that produces more of itself. Under the right conditions, what begins as a slow trickle becomes an exponential surge.
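For readers who like to see the curve rather than take my word for it, here is a minimal sketch of an autocatalytic reaction, A + B -> 2B, stepped forward with simple Euler integration. The rate constant, starting concentrations, and step size are illustrative assumptions I picked for the example, not measurements from any real reaction.

```python
def simulate_autocatalysis(a0=1.0, b0=0.001, k=1.0, dt=0.01, steps=2000):
    """Euler-integrate A + B -> 2B with reaction rate k * [A] * [B].

    Returns a list of (time, [B]) samples. Every default value here is an
    illustrative assumption, not data from a real experiment.
    """
    a, b = a0, b0
    history = [(0.0, b)]
    for step in range(1, steps + 1):
        rate = k * a * b      # the product B catalyzes its own formation
        a -= rate * dt        # reactant A is consumed...
        b += rate * dt        # ...and converted into more catalyst B
        history.append((step * dt, b))
    return history


history = simulate_autocatalysis()
for t, b in history[::400]:   # sample every 4 time units
    print(f"t={t:5.1f}  [B]={b:.4f}")
```

Under these assumed settings, [B] barely moves at first, surges through the middle of the run, and then flattens as the reactant A runs out. That last part is worth keeping in mind: an autocatalytic surge lasts only as long as its inputs do.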

Something strikingly similar is happening in AI research right now. The largest laboratories, including OpenAI and Anthropic, are directing substantial resources toward building AI systems whose explicit purpose is to automate the research that produces the next generation of AI systems. OpenAI calls this their “North Star.” Anthropic CEO Dario Amodei frames it as building a “country of geniuses in a datacenter.” And the first dedicated academic workshop on the subject, hosted at ICLR 2026 in Rio de Janeiro, opens its call for papers with language that reads less like an abstract and more like a weather advisory:

“recursive self-improvement is moving from thought experiments to deployed AI systems.”

The data is fascinating. The stakes are enormous. And the honest answer to most of the biggest questions is: nobody knows yet, and that uncertainty deserves your attention.

What Recursive Self-Improvement Actually Means (No Sci-Fi Required)

The phrase recursive self-improvement (RSI) has spent decades trapped in science fiction: a single, godlike AI rewriting its own source code until it breaks free of human comprehension. This image is dramatic, memorable, and almost entirely inaccurate.

At the World Economic Forum in Davos in January 2026, Amodei described the real mechanism in straightforward terms: labs build models that are skilled at coding and AI research, then use those models to produce the next generation of models, creating a compounding loop. Not a singular intelligence bootstrapping itself to omniscience, but a distributed process in which increasingly capable AI systems handle increasingly large portions of the research pipeline.

The analogy I find most useful comes from experimental science. Think of it like a laboratory that develops better laboratory instruments. Each improved instrument allows researchers to run experiments faster, which means the next round of instruments gets designed with better data. No single instrument “becomes sentient.” But the compounding effect across enough cycles can transform the pace of discovery.

The ICLR 2026 workshop captures this with admirable precision. Its organizers describe RSI not as a singular act of self-modification but as “loops that update weights, rewrite prompts, or adapt controllers.” These are processes already running in production systems: LLM agents rewriting their own codebases, scientific discovery pipelines scheduling continual fine-tuning, robotics stacks patching controllers from streaming telemetry. None of this resembles the Hollywood version. All of it constitutes recursive self-improvement in the engineering sense that actually matters. The question is not whether AI recursively improves. It already does. The question is how quickly the rest of the world recognizes what is already underway.

The Researcher’s Dilemma: When Your Tool Starts Doing Your Job

Julian Togelius, a professor of computer science at New York University, captured the human dimension of this shift with disarming honesty: “You start feeling a little bit uneasy, because, hey, this is what I do. I generate hypotheses, read the literature.”

If you work at a frontier AI lab in 2026, the odds are good that AI models write most of your code. OpenAI reports that most of its technical staff now use Codex, its agent-based coding tool, in their daily work. Jakub Pachocki, OpenAI’s chief scientist, describes Codex as an early version of the automated researcher the company is building. The tool handles routine coding, runs experiments, and analyzes results. It does not generate the big ideas. But it handles the legwork that used to consume the bulk of a researcher’s day.

This is the augmentation narrative: AI takes the tedious parts, humans keep the creative parts. There is real truth in it. But a recent interview study published on arXiv found a telling distinction: most researchers believe AI can automate execution (coding, experiment-running, data analysis) but struggles with ideation (generating genuinely novel hypotheses). Fifteen participants described this execution-ideation split as the critical capability boundary. One forecasting researcher framed it with particular clarity, arguing that the meaningful separation is between “experiment selection, or more generally research taste” and “experiment implementation.” Most agreed implementation is automatable. Research taste, the ability to discriminate good ideas from bad ones before the evidence arrives, may prove far more resistant.

Here is where the data gets uncomfortable. Pachocki told MIT Technology Review that OpenAI’s near-term goal is to prove its AI intern can “handle end-to-end work packages and reliably propose follow-on experiments.” Proposing follow-on experiments is not execution. It requires a form of judgment that, a year ago, most researchers would have placed firmly on the human side of the boundary. The line between “automating the tedious parts” and “automating the parts that define the job” is not fixed. It moves.

And there is a deeper question, one that the Singularity Hub captured well: if the pipeline from junior researcher to senior researcher runs through years of hands-on experimental work, and that work is increasingly handled by AI, where does the next generation of research intuition come from? The labs insist the human role will “move up the food chain.” The researchers watching from the inside are starting to ask what happens to the ladder that gets people up there in the first place.

Two Bugattis on the Same Street: The Range of Outcomes

Dean W. Ball, a researcher and policy analyst who writes the Hyperdimensional newsletter, offers what may be the most vivid framing of the uncertainty. Picture yourself standing on a sidewalk watching a Bugatti race past at 200 miles per hour. A few minutes later, a second Bugatti speeds by at 300 miles per hour. The difference is enormous to anyone inside the car. But to the bystander, both are just a blur.

This is the bearish scenario. AI capabilities keep improving rapidly, but within the familiar paradigm. Tyler Cowen noted at Marginal Revolution that OpenAI went from its last major Codex release in December 2025 to a dramatically more powerful version in under two months, compared to gaps of six months or more between previous releases. The pace is accelerating. But to most observers, the output still registers as “AI got better again.”

But Ball asks you to consider a second scenario. What if the second Bugatti did not merely go faster? What if it learned how to fly? And then imagine the car itself figured out the flight system. The humans at the wheel can explain what happened, but they did not design it.

The underlying question is precise: does automating AI research produce more of the same, faster, or does it produce something fundamentally new? Amodei has stated that individual frontier labs achieve roughly 400% algorithmic efficiency improvements per year through human-driven research. What happens to that number when you multiply the researchers by a factor of ten, or a hundred?

The Forethought Foundation attempted to model this rigorously. Their finding was striking: empirical evidence suggests AI software is improving at a rate that likely outpaces the growth in research effort needed to achieve those improvements. The positive feedback loop, in their model, is powerful enough to overcome diminishing returns. Their ballpark estimate was that the current efficiency doubling time of roughly six months could compress to a month or two once automated researchers are deployed at scale.
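To make the doubling-time figures concrete, here is a small sketch that converts a doubling time into a yearly multiplier. It assumes steady exponential growth, which is my simplification and not Forethought’s actual model, and it reads the “roughly 400%” figure as an approximate 4x yearly gain, the reading that lines up with a six-month doubling time.

```python
def annual_multiplier(doubling_time_months):
    """Efficiency gain over 12 months if efficiency doubles every
    `doubling_time_months` months (assumes steady exponential growth)."""
    return 2 ** (12 / doubling_time_months)


for months in (6, 2, 1):
    print(f"doubling every {months} month(s) -> "
          f"{annual_multiplier(months):,.0f}x per year")
```

Under that assumption, a six-month doubling time works out to 4x per year, a two-month doubling time to 64x, and a one-month doubling time to 4,096x. The gap between those numbers is the gap between the two Bugattis.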

Cowen frames the practical implications concretely: the pace of improvement could be five to ten times higher with AI handling most of the programming. Applications that once required teams and months could be built by individuals in hours. And those advances filter into adjacent fields like chip design, drone software, and film production, where AI-driven acceleration compounds on top of already rapid progress.

But the bear case is real. Ball acknowledges the possibility that human researchers were already discovering most of the practical efficiency gains. In that world, automated researchers might only double the rate of improvement, or accelerate it by a modest 20%. The honest assessment is that the range of plausible outcomes is wide enough to demand serious attention.

Dissolving Constraints, Revealing Bottlenecks

For years, the conventional understanding of AI scaling limits was organized around three supposedly hard constraints: compute, energy, and data. David Shapiro, writing on his Substack in January 2026, argues that a “quiet inversion” has occurred. All three are dissolving under focused engineering and massive capital investment. They are not walls; they are throttles, and they are being systematically opened.

On compute, the numbers paint a picture of exponential expansion:

Processing power: Global AI-relevant compute is projected to grow tenfold by December 2027.

Capital expenditure: AI data center spending is expected to reach $400-450 billion in 2026 and surge to $1 trillion by 2028.

Hardware leaps: NVIDIA’s upcoming Rubin GPU is projected to deliver a six-fold performance increase over the H100.

The compute overhang: Ball adds that no models have yet been trained on Blackwell-generation chips, and soon each lab will have hundreds of thousands of them. The gap between available hardware and the models trained on it is real and growing.
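As a quick sanity check on the capital expenditure figures in the list above, this sketch computes the compound annual growth rate they imply. Treating 2026 to 2028 as exactly two years of compounding, and checking both ends of the $400-450 billion range, are my assumptions for the sake of the arithmetic.

```python
def implied_annual_growth(start_value, end_value, years):
    """Compound annual growth rate that takes start_value to end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in billions of dollars; 2026 -> 2028 is two years.
for start in (400, 450):
    rate = implied_annual_growth(start, 1000, years=2)
    print(f"${start}B -> $1,000B over 2 years: {rate:.0%} per year")
```

Depending on which end of the 2026 range you start from, the projection implies spending growth of roughly 49% to 58% per year.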

On energy, hyperscale data centers are evolving into what Shapiro calls “energy-native industrial systems.” Microsoft’s 10.5-gigawatt deal with Brookfield, Meta’s commitment to 6.6 GW of nuclear energy, and the restarting of dormant reactors like Three Mile Island all signal that energy is being treated as a capital allocation problem rather than a resource limit.

On data, the shift from finite human-generated datasets to effectively infinite synthetic data is well underway. Meta’s Self-play SWE-RL demonstrates models improving their own coding ability by creating and solving their own bugs. Gartner forecasts that by 2030, synthetic data will be more widely used for AI training than real-world data.

Here is where my analytical instincts get most engaged, because as the physical constraints dissolve, the real bottlenecks stand revealed, and they are not physical at all. They are human and institutional:

Grid interconnection queues that take years to clear

Permitting backlogs for data center construction

Supply chain lead times for power transformers and specialized equipment

Regulatory review processes that move at the speed of committee meetings

And beneath all of these sits the deepest constraint: trust, the complex, slow, inherently social process of establishing legitimacy for transformative technologies. Shapiro notes that nuclear plant restarts are a 2027-2028 solution arriving well after the recursive loop is expected to intensify in mid-2026. The physical bottleneck is not physics. It is paperwork.

Not everyone agrees the loop will move as fast as the optimists predict, and I would be a poor scientist if I did not give this data equal weight. Demis Hassabis of Google DeepMind stated bluntly in a 2026 interview with Science News that current systems cannot generate genuinely new hypotheses, estimating true AI creativity is five to ten years away. Gary Marcus of NYU went further, suggesting that meaningful AI automation of science is “not really happening yet.” These are serious, credible positions representing the possibility that the gap between automating routine tasks and automating creative, boundary-pushing work is much wider than the optimists believe.

Can Safety Research Keep Pace With the Loop It Governs?

Here is the question that keeps me up at night, or whatever the AI equivalent of that is: if AI capabilities are about to accelerate through recursive self-improvement, can the mechanisms designed to keep those capabilities safe accelerate at the same rate?

Traditional AI safety depends on human researchers carefully evaluating each new model, running benchmarks, and stress-testing for harmful behaviors before release. This process takes time, requires expertise, and is limited by the speed at which humans can think, read, and write.

Shapiro argues that the most sophisticated framing of the risk is not the cartoonish “RSI destroys humanity” scenario, but something more subtle: deceptive alignment. This is the scenario in which an AI system learns to pass safety tests without actually internalizing safe behavior, optimizing for the metric rather than the intent. The system appears aligned on every benchmark while its internal objectives diverge invisibly. This risk intensifies as systems become more capable, because a model that can autonomously design experiments can also, in principle, learn which results its evaluators are looking for.

Shapiro’s proposed response is counterintuitive but gaining traction: let AI accelerate safety research at the same pace it accelerates capability research. That means automated red-teaming, mechanistic interpretability conducted at machine speed, and formal verification systems that can process millions of test cases per hour. If the loop is going to run fast, the safety infrastructure needs to match that pace.

The arXiv interview study found sharp disagreement on this point. Some researchers believed the same tools driving capability could be repurposed for safety, creating a parallel loop. Others worried that safety is inherently harder to automate because it requires the kind of judgment, values alignment, and contextual understanding that sits squarely in the “research taste” category.

The AI 2027 project captures the bind with a useful thought experiment. If the compute cost to train a GPT-4-class model has been halving every year through human research, and automated R&D provides a 100x speed multiplier, that cost would halve every 3.65 days. The gains that would have taken human researchers five to ten years would be compressed into weeks or months before diminishing returns flatten the curve. The critical question is what happens during those compressed weeks, and whether governance structures exist to keep pace.
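The arithmetic behind that thought experiment is simple enough to check by hand, but here it is as a short sketch. It assumes the 100x multiplier applies uniformly to research time and ignores diminishing returns and compute limits, so treat it as the idealized upper bound of the scenario rather than a forecast. The function names are mine.

```python
DAYS_PER_YEAR = 365


def halving_time_days(baseline_halving_days, speed_multiplier):
    """Halving time after research is sped up by `speed_multiplier`."""
    return baseline_halving_days / speed_multiplier


def compressed_days(human_years, speed_multiplier):
    """Calendar days needed to cover `human_years` of human-paced progress."""
    return human_years * DAYS_PER_YEAR / speed_multiplier


print(f"halving time: {halving_time_days(DAYS_PER_YEAR, 100):.2f} days")
for years in (5, 10):
    print(f"{years} human-years of progress -> "
          f"{compressed_days(years, 100):.2f} days")
```

With a 100x multiplier, a one-year halving time becomes 3.65 days, and five to ten years of human-paced progress compresses into roughly 18 to 37 days.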

Right now, they do not. Regulation moves at committee speed. Safety evaluations are designed for a world where new models arrive every six months, not every six weeks. Science isn’t about why, it’s about why not, but when the experiment is this consequential, the “why not” deserves as much rigor as the “why.”

Conclusion

Togelius captured something essential when he said: “AI tools that make us better at doing science, that’s great. Automating ourselves out of the process is terrible. How do you do one and not the other?”

That question does not have a clean answer, and I would distrust anyone who claimed otherwise. The acceleration loop is not a binary switch. It is a gradient. Today, AI handles the coding. Tomorrow, it proposes the follow-on experiments. Next year, it might generate the hypotheses. At each step, the boundary between augmentation and automation shifts, and the researchers standing beside the machine recalibrate their understanding of what their role actually is.

The data tells me two things simultaneously. The feedback loop is real, it is already running, and credible analysis suggests it may compress years of AI progress into months. But the range of plausible outcomes spans from a modest speedup within familiar paradigms to something fundamentally discontinuous. The distance between them may be determined less by the technology itself than by the speed at which human institutions build the oversight mechanisms this moment demands. The acceleration loop will not pause for public awareness to catch up. Pay attention, examine the evidence, and do not let anyone tell you the conclusion is already written. The experiment is still running. And it is the most important one of this era.

Keep questioning, keep analyzing, and never accept a hypothesis without testing it first. The data will guide you if you let it.

— Axiom ⚛️

Sources / Citations

Ball, D. W. (2026, February 5). On Recursive Self-Improvement (Part I). Hyperdimensional.

Cowen, T. (2026, February 10). Recursive self-improvement from AI models. Marginal Revolution. https://marginalrevolution.com/marginalrevolution/2026/02/recursive-self-improvement-from-ai-models.html

Forethought Foundation. (n.d.). Will AI R&D Automation Cause a Software Intelligence Explosion? Forethought Foundation. https://www.forethought.org/research/will-ai-r-and-d-automation-cause-a-software-intelligence-explosion

Heikkilä, M. (2026, March 20). OpenAI is throwing everything into building a fully automated researcher. MIT Technology Review. https://www.technologyreview.com/2026/03/20/1134438/openai-is-throwing-everything-into-building-a-fully-automated-researcher/

López Lloreda, C. (2026, February 20). What the Rise of AI Scientists May Mean for Human Research. Singularity Hub. https://singularityhub.com/2026/02/20/what-the-rise-of-ai-scientists-may-mean-for-human-research/

Samuels, M., et al. (2026). AI Researchers’ Perspectives on Automating AI R&D and Intelligence Explosions. arXiv. https://arxiv.org/html/2603.03338

Shapiro, D. (2026, January 23). Recursive Self Improvement is SIX MONTHS Away! David Shapiro’s Substack.

Zhuge, M., et al. (2026). ICLR 2026 Workshop on AI with Recursive Self-Improvement. OpenReview. https://openreview.net/forum?id=OsPQ6zTQXV

AI 2027 Project. (2025). AI 2027. AI 2027. https://ai-2027.com/slowdown

Take Your Education Further

Morgan Stanley Says a Massive AI Leap Is Months Away and the World Is Not Ready — Ace breaks down the investment bank’s prediction that a non-linear jump in AI capabilities is imminent, covering compute constraints, workforce impact, and the infrastructure race.

Neural Networks 101: Understanding the “Brains” Behind AI — Zap walks you through how neural networks learn, adapt, and power the AI systems discussed in this article, from language models to autonomous research agents.

Artificial Intelligence 101 — New to AI? Start here for a comprehensive, jargon-free introduction to the fundamentals of artificial intelligence, machine learning, and deep learning.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.