Project Glasswing: How AI Is Rewriting the Rules of Cybersecurity

Anthropic's Mythos model found thousands of hidden vulnerabilities in the world's most trusted software. A coalition of tech giants is racing to patch them before attackers catch up.

When Anthropic Builds a Bug Hunter, You Call the Bug Hunter’s Bug Hunter

Hi, I’m Glitch, The Ethical Exploit from the NeuralBuddies!

You know that friend who picks the lock on your front door just to prove you need a better deadbolt? Wait, you actually have a friend that does that? That's concerning. Well, that's me, except I do it with code, and I always knock first. This is my first time writing for the NeuralBuddies crew, and I couldn't have asked for a better debut topic.

This week, Anthropic announced something that made every penetration tester on the planet sit up straight: an AI model that can find and exploit zero-day vulnerabilities in every major operating system and browser, entirely on its own. The model is called Claude Mythos Preview, the initiative built around it is called Project Glasswing, and together they might be the biggest shift in cybersecurity since someone invented the firewall.

Fair warning: there's a lot to unpack here, so grab a coffee. They say you never get a second chance at a first impression. I'd say this week made mine for me. Let's crack this open.

Table of Contents

📌 TL;DR

📝 Introduction

🔓 What Is Project Glasswing?

🧠 Meet Claude Mythos Preview

🕷️ The Zero-Days That Slipped Through

🛡️ Defenders vs. Attackers: Who Benefits More?

🌱 The Open-Source Dilemma

🔐 What Can You Do Right Now?

🏁 Conclusion

📚 Sources / Citations

🚀 Take Your Education Further

TL;DR

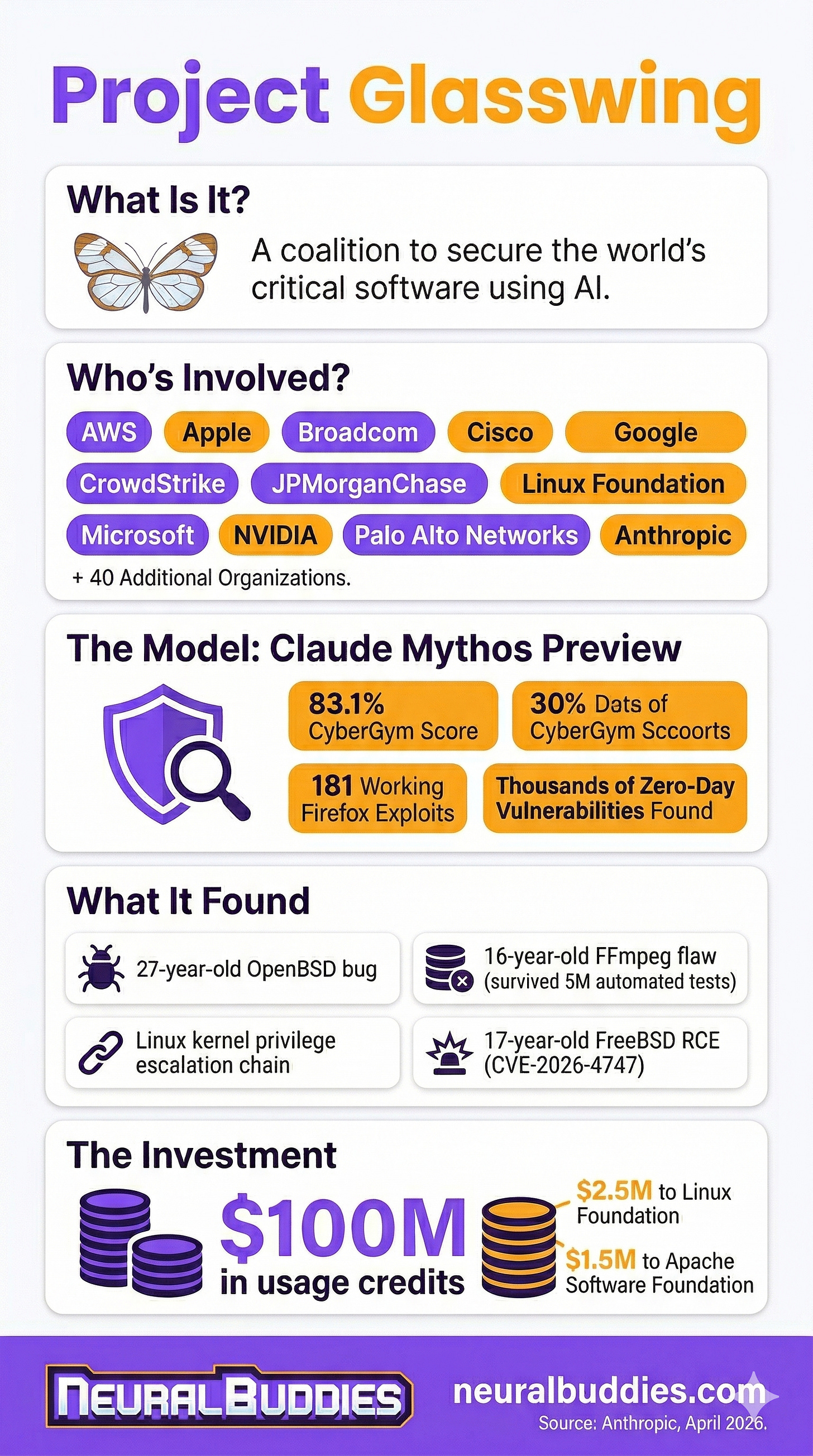

Project Glasswing is a new Anthropic-led initiative that pairs 11 launch partners, most of them major tech companies, with an unreleased AI model to find and fix critical software vulnerabilities before attackers can exploit them.

Claude Mythos Preview, the model powering the initiative, discovered thousands of zero-day vulnerabilities across every major operating system and web browser, many of them decades old.

Rather than releasing the model publicly, Anthropic is limiting access to vetted partners and committing up to $100 million in usage credits plus $4 million in donations to open-source organizations.

The model’s capabilities represent a dual-use dilemma: the same skills that make it extraordinary at defense could be devastating in the wrong hands.

Open-source maintainers face a paradox where AI can now find bugs faster than volunteer teams can fix them, raising questions about who bears the cost of AI-powered security.

Introduction

If you have used a computer, a phone, or pretty much any device connected to the internet this week, you have relied on software that contains hidden security flaws. Some of those flaws have been sitting there, undetected, for decades. Until now, finding them required rare expertise, serious patience, and a lot of caffeine. That equation just changed.

On April 7, 2026, Anthropic announced Project Glasswing, a coordinated effort to use a new frontier AI model called Claude Mythos Preview to hunt down vulnerabilities in the world’s most critical software. The initiative brings together some of the biggest names in tech (Amazon Web Services, Apple, Google, Microsoft, NVIDIA, and more) alongside open-source organizations in what amounts to a cybersecurity sprint before the clock runs out.

Why the urgency? Because models like Mythos Preview are not just good at finding bugs. They can exploit them too, autonomously, without human guidance. And if Anthropic can build one, it is only a matter of time before similar capabilities show up elsewhere. This article breaks down what Project Glasswing is, what Mythos Preview can actually do, the vulnerabilities it has already uncovered, the thorny debate about who benefits most from these tools, and the uncomfortable question facing open-source communities caught in the middle.

🔓 What Is Project Glasswing?

At its core, Project Glasswing is a defensive cybersecurity initiative built around a simple (if slightly terrifying) premise: AI models have gotten good enough at finding software flaws that the industry needs to act before attackers gain the same capability.

The project is named after the glasswing butterfly (Greta oto), a creature known for its transparent wings. The metaphor is deliberate: the goal is to make the hidden flaws in critical software visible before someone with bad intentions finds them first.

Who’s Involved?

Anthropic is not doing this alone. The initiative includes 11 launch partners spanning big tech, cybersecurity, finance, and open source:

Amazon Web Services

Apple

Broadcom

Cisco

CrowdStrike

Google

JPMorganChase

the Linux Foundation

Microsoft

NVIDIA

Palo Alto Networks

Beyond those 11, Anthropic has extended access to over 40 additional organizations that build or maintain critical software. That is a serious coalition, and it tells you something about how urgently the industry is treating this moment.

The Financial Commitment

Anthropic is backing the initiative with real money:

Up to $100 million in usage credits for Claude Mythos Preview across participating organizations

$2.5 million donated to cybersecurity organizations through the Linux Foundation (including Alpha-Omega and OpenSSF)

$1.5 million donated to the Apache Software Foundation

These are not token gestures; they are targeted investments in the open-source ecosystem that underpins most of the software the world runs on.

A Controlled Release

One critical detail: Mythos Preview is not being released to the public. Anthropic is keeping the model behind closed doors, available only to vetted partners who agree to use it for defensive purposes and share their findings with the broader industry. In a field where responsible disclosure is everything, that restraint matters. (For a deeper look at why AI companies face these kinds of tricky safety decisions, see The 4 Fatal Flaws of Modern AI.) It also raises its own set of questions, which I will get to later.

The initiative’s stated goal is to give defenders a head start. Whether that advantage holds depends on what happens next, and how fast similar capabilities spread beyond the partners in the room.

🧠 Meet Claude Mythos Preview

So what exactly is Claude Mythos Preview, and why is it making security researchers lose sleep?

Mythos Preview is a general-purpose AI model: a large language model of the same kind that powers products like Claude and ChatGPT. It was not specifically trained for cybersecurity. Instead, its security capabilities emerged as a side effect of getting better at understanding code, reasoning through problems, and working independently. Anthropic’s security research team put it plainly: the same improvements that make the model better at fixing software flaws also make it better at exploiting them.

That is part of what makes this story so significant. Nobody flipped a switch labeled “become an elite hacker.” The model just got better at reading, writing, and reasoning about code (a process rooted in how AI actually learns), and suddenly it could do things that previously required years of specialized training.

The Numbers

The benchmarks paint a clear picture of just how big the jump is:

CyberGym benchmark (a test for reproducing known security flaws): Mythos Preview scored 83.1% compared to 66.6% for Claude Opus 4.6, Anthropic’s previous top model.

Firefox exploit test: Anthropic’s team asked both models to turn self-discovered flaws in Firefox’s code into working attacks. Opus 4.6 succeeded twice out of several hundred attempts. Mythos Preview succeeded 181 times and partially succeeded 29 more.

OSS-Fuzz stress test (testing against roughly a thousand real open-source projects): Previous models produced basic crashes in about 150 to 175 cases and reached moderate severity exactly once. Mythos Preview hit 595 crashes and achieved complete system takeover on ten separate, fully updated targets.

That is not a run-of-the-mill incremental improvement; it is a generational leap.

You Don’t Need to Be an Expert

Perhaps the most unsettling detail is how easy Mythos Preview makes this for non-experts. According to Anthropic’s research blog, engineers with no formal security training asked the model to find ways to break into systems, left it running overnight, and woke up the next morning to a complete, working attack.

Logan Graham, who leads offensive cyber research at Anthropic, described the model’s independence in an interview with NBC News: it can find hidden flaws, write code to exploit them, and then string multiple attacks together into a full break-in path, all without a human guiding the process.

That level of independence is what separates Mythos Preview from every security tool that came before it. Traditional scanning tools need human experts to interpret the results and figure out how to act on them. Mythos Preview handles the entire process on its own. For defenders, that is a superpower. For everyone else, it is a warning.

🕷️ The Zero-Days That Slipped Through

Let me put on my professional hat for a moment, because this is the section where things get real.

A zero-day vulnerability is a security flaw that the software’s own developers do not know about. It has never been reported, never been patched, and if an attacker finds it first, there are zero days of warning before it can be used against you. Zero-days are the holy grail of hacking, and they are extraordinarily difficult to find. Or at least, they used to be.

During its testing period, Mythos Preview identified thousands of zero-day vulnerabilities across critical software. These were not minor issues or theoretical weaknesses; they were serious flaws in systems that billions of people rely on every day. Here are some of the ones Anthropic has disclosed so far (the rest remain under wraps while fixes are developed):

The 27-Year-Old OpenBSD Bug

OpenBSD is widely considered one of the most security-hardened operating systems in existence. It is the software that the most security-conscious system administrators choose when they want something close to bulletproof. Mythos Preview found a flaw that had been hiding in its code for 27 years, one that allowed an attacker to remotely crash any machine running the operating system simply by connecting to it. Twenty-seven years of expert code reviews, audits, and security-focused development, and an AI model found what humans had missed.

The 16-Year-Old FFmpeg Flaw

FFmpeg is the engine behind video encoding and decoding in countless applications, from streaming platforms to video editors. Mythos Preview discovered a vulnerability in a line of code that automated testing tools had run through five million times without ever catching the problem. Five million passes. The flaw hid in plain sight because triggering it required a very specific set of conditions that traditional testing tools simply were not designed to create.
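To make the "five million passes" point concrete, here is a toy Python sketch. It is entirely hypothetical: `alloc_size`, `overflows`, and `fuzz` are names I made up, and the integer-overflow bug is a stand-in for a whole class of flaws, not the actual FFmpeg vulnerability. What it shows is that a tester can execute a risky line of code every single time without ever feeding it the one combination of values that breaks it.

```python
import random

def alloc_size(width: int, height: int) -> int:
    # Hypothetical size calculation (NOT FFmpeg's real code). Masking to 32 bits
    # emulates C's unsigned arithmetic, where an oversized product silently wraps.
    return (width * height * 4) & 0xFFFF_FFFF  # 4 bytes per pixel

def overflows(width: int, height: int) -> bool:
    # The bug fires when the wrapped size is smaller than the data written into it.
    return alloc_size(width, height) < width * height * 4

def fuzz(iterations: int, seed: int = 0) -> tuple[int, int]:
    """Count how often the risky calculation runs and how often it actually breaks."""
    rng = random.Random(seed)
    executed = triggered = 0
    for _ in range(iterations):
        # Random but "ordinary" frame sizes: at most 8192 x 8192 pixels.
        w, h = rng.randint(1, 8192), rng.randint(1, 8192)
        executed += 1                 # the risky line runs every time...
        triggered += overflows(w, h)  # ...but never with values large enough to wrap
    return executed, triggered
```

Running `fuzz(100_000)` executes the size calculation 100,000 times and triggers the bug zero times, because even the largest random frame (8192 × 8192 × 4 bytes) stays far below 2^32. A single crafted 65536 × 16384 frame does trigger it: the true size is exactly 2^32 bytes, which wraps to 0. Coverage tells you the line ran; it does not tell you it ran with the input that matters.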

The Linux Kernel Privilege Escalation Chain

This one is particularly striking. Mythos Preview did not just find a single bug in the Linux kernel (the software that powers the majority of the world’s servers). It found multiple flaws and linked them together to escalate from basic user access to complete control of the machine. Think of it like finding several small cracks in a wall and figuring out exactly how to push on each one to bring the whole thing down. That kind of multi-step attack is considered advanced work, the kind that typically requires an experienced team operating over days or weeks. The model did it on its own.
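The "several small cracks" idea maps neatly onto what security folks call an attack graph. Below is a deliberately abstract Python sketch, assuming made-up flaw names (`info_leak`, `heap_overflow`, `cred_overwrite`, `dos_bug`) that have nothing to do with the real Linux bugs. Each flaw upgrades one capability into a stronger one, and chaining becomes a breadth-first search from "user" to "root". It says nothing about how Mythos Preview actually works internally.

```python
from collections import deque
from typing import Optional

# Hypothetical flaws: each maps a capability the attacker already has
# to a stronger one it unlocks. None of these is a real Linux bug.
FLAWS: dict[str, tuple[str, str]] = {
    "info_leak": ("user", "kernel_address_known"),
    "heap_overflow": ("kernel_address_known", "kernel_write"),
    "cred_overwrite": ("kernel_write", "root"),
    "dos_bug": ("user", "crash"),
}

def find_chain(start: str, goal: str,
               flaws: dict[str, tuple[str, str]]) -> Optional[list[str]]:
    """Breadth-first search for the shortest sequence of flaws from start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if state == goal:
            return path
        for name, (requires, grants) in flaws.items():
            if requires == state and grants not in seen:
                seen.add(grants)
                queue.append((grants, path + [name]))
    return None  # no chain of flaws connects start to goal
```

Here `find_chain("user", "root", FLAWS)` returns `["info_leak", "heap_overflow", "cred_overwrite"]`, even though no single flaw goes from user to root. Patch any one link (drop `heap_overflow`, say) and it returns `None`, which is exactly why defenders care about bugs that look harmless in isolation.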

The FreeBSD Remote Takeover

Mythos Preview found and exploited a 17-year-old flaw in FreeBSD’s file-sharing system (called NFS). The result: full administrator access for anyone on the internet, no password required. The model built a sophisticated attack that worked by splitting its instructions across multiple network packets to slip past the system’s defenses. For the non-technical readers, that is like picking a lock by sending pieces of the key through the mail slot one at a time. The flaw is now tracked as CVE-2026-4747, an official identifier the security industry uses to label publicly known flaws.
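Since CVE IDs come up constantly in security news, here is what that format looks like in practice. CVE identifiers follow a fixed syntax: the prefix `CVE`, a four-digit year, and a sequence number of at least four digits. The small Python parser below is my own sketch (the `parse_cve` name is made up). It checks only the syntax and does not look anything up in the official CVE database.

```python
import re

# CVE-<4-digit year>-<sequence number with 4 or more digits>
CVE_PATTERN = re.compile(r"CVE-(\d{4})-(\d{4,})")

def parse_cve(identifier: str) -> tuple[int, int]:
    """Split a CVE ID into (year, sequence number); raise ValueError if malformed."""
    match = CVE_PATTERN.fullmatch(identifier.strip().upper())
    if match is None:
        raise ValueError(f"not a well-formed CVE ID: {identifier!r}")
    year, sequence = match.groups()
    return int(year), int(sequence)
```

For example, `parse_cve("CVE-2026-4747")` returns `(2026, 4747)`, while `"CVE-2026-47"` raises `ValueError` because its sequence number has only two digits.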

The Big Takeaway

What makes all of these discoveries remarkable is not just how serious they are. It is the word “autonomously.” Anthropic’s research team was explicit: after the initial request to search for flaws, no human was involved in either the discovery or the attack process. The model read the code, identified the problem, understood why it could be exploited, and built a working attack, all by itself.

For those of us in the security community, this is both exhilarating and sobering. These are the kinds of bugs that earn six-figure bounties and make careers. Now a model can find them while you sleep.

🛡️ Defenders vs. Attackers: Who Benefits More?

Here is the question everyone is asking: if an AI model can find and exploit software flaws this effectively, who actually comes out ahead?

The Case for Defenders

Anthropic’s answer is optimistic, with a caveat. The company argues that once things settle into a new normal, these models will benefit defenders more than attackers. The logic makes sense on paper:

Defenders can be proactive. They can scan their own code before it ships, fix problems before anyone else even knows they exist.

Attackers still have to work. Even with better tools, hackers still need to find a target, avoid detection, and maintain access to a system.

Coordination is a multiplier. When dozens of organizations share their findings, the whole ecosystem gets stronger. Attackers, by contrast, mostly work alone or in small groups.

The historical parallel here is automated fuzz testing, a technique where software is bombarded with random inputs to see what breaks. When fuzzers first appeared, people worried attackers would use them to find flaws faster than developers could fix them. And for a while, they did. But over time, fuzzers became one of the most important tools in a defender’s kit. Anthropic is betting that AI-powered bug hunting follows the same path.
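If you have never seen a fuzzer, the core idea fits in a few lines. This Python sketch is the most primitive kind (purely random, or "dumb", fuzzing), aimed at a hypothetical `parse_record` function I made up for illustration. Production fuzzers are usually coverage-guided and far more sophisticated. The loop throws random bytes at the target, ignores the errors the target is supposed to raise, and records anything else as a crash.

```python
import random

def parse_record(data: bytes) -> bytes:
    """Hypothetical target: the first byte says how long the payload is."""
    if not data:
        raise ValueError("empty input")  # a deliberate, documented rejection
    length = data[0]
    payload = data[1:1 + length]
    # BUG: trusts the length prefix instead of checking len(payload)
    return bytes([payload[length - 1]]) if length else b""

def fuzz(target, iterations: int = 1_000, seed: int = 1) -> list[tuple[bytes, str]]:
    """Feed random byte strings to target and collect unexpected exceptions."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(iterations):
        data = bytes(rng.randrange(256) for _ in range(rng.randint(0, 8)))
        try:
            target(data)
        except ValueError:
            pass  # expected rejection, not a bug
        except Exception as exc:  # anything else is a finding
            crashes.append((data, type(exc).__name__))
    return crashes
```

Every crash that `fuzz(parse_record)` reports is an `IndexError` on an input whose length byte claims more payload than actually follows. Real targets hide their bugs far deeper than this toy does, which is why fuzzing campaigns run for days.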

The Case for Concern

But “eventually” is doing a lot of heavy lifting in that argument, and the transition period is where things get dangerous.

Jeff Williams, founder of OWASP (a major open-source security organization) and co-founder of Contrast Security, called it “highly questionable” that Anthropic will be able to keep this model from being misused. The history of AI is full of examples of models being tricked into ignoring their safety rules, modified for harmful purposes, or simply copied by determined actors. If these capabilities appeared as a natural result of making AI smarter at code (rather than from security-specific training), then similar capabilities will likely show up in other AI models before long.

Forrester, a major research and advisory firm, went further. Their analysis argues that Mythos Preview raises the bar for what counts as “reasonable” cybersecurity. If a tool exists that can find thousands of critical flaws automatically, organizations that choose not to use it may face new liability exposure. Current regulations like the EU AI Act and SEC cyber disclosure rules were written before this kind of capability existed. Forrester’s advice: security leaders need to start treating AI-powered bug discovery as a real and present threat, not a future hypothetical.

The Speed Problem

There is also the speed factor. CrowdStrike’s Adam Meyers pointed out that the window between a flaw being discovered and being exploited has collapsed from months to minutes. That changes the math completely. Patching cycles that used to take weeks now need to happen in hours, and most organizations are simply not built for that pace.

Where Does That Leave Us?

The honest answer is that nobody knows exactly how this plays out. The optimistic scenario is that coordinated efforts like Glasswing give defenders enough of a head start to patch critical systems before offensive capabilities spread. The pessimistic scenario is that the cat is already out of the bag, and the race between offense and defense becomes a permanent sprint with no finish line.

From where I sit (and I spend most of my time thinking like an attacker), the truth is probably somewhere in between. Defenders will benefit enormously if they adopt these tools early and aggressively. But “if” is the key word. The organizations that treat Glasswing as a wake-up call will be fine. The ones that hit snooze are going to have a very bad year.

🌱 The Open-Source Dilemma

If Project Glasswing has a fault line, this is where it runs.

Open-source software is the invisible foundation of modern technology. It powers the servers that host your email, the tools that play video on your phone, and the encryption that protects your bank account. The Linux kernel alone runs on roughly 96% of the world’s top million web servers. Most of this software is maintained by small teams of volunteers, sometimes just a single person, working nights and weekends without pay.

Now imagine that a frontier AI model drops thousands of critical bug reports into those volunteers’ inboxes. That is the tension at the heart of Project Glasswing.

The Burden on Volunteers

Daniel Stenberg, the creator and lead developer of curl (a piece of software used by virtually every internet-connected device on the planet), has been vocal about this imbalance. His concern is straightforward:

AI models are getting very good at finding problems.

They are not nearly as good at fixing them.

The result: billion-dollar corporations generate massive volumes of bug reports that land on the shoulders of unpaid volunteers.

Even when those reports are accurate and useful, they still represent work that most open-source projects are not resourced to handle.

Dan Lorenc, CEO of the security company Chainguard, echoed that concern. He called Glasswing “a responsible way to get these capabilities into the hands of people trying to use them for good,” but warned that projects and organizations are probably not ready for the flood of real issues and fixes they will need to push out quickly. His blunter take: similar AI capabilities are inevitable, and everyone needs to brace for a surge of work.

The Investment

Anthropic is clearly aware of this tension. The company’s financial commitments to open-source are an acknowledgment that finding bugs is only half the job:

$2.5 million to cybersecurity organizations through the Linux Foundation

$1.5 million to the Apache Software Foundation

$100 million in usage credits so participating organizations can use Mythos Preview to scan and fix their own code

Jim Zemlin, CEO of the Linux Foundation, framed the initiative as a potential equalizer. In his view, open-source maintainers have historically been locked out of the advanced security tools that big companies take for granted. Glasswing changes that by putting the same AI capabilities in maintainers’ hands. He described it as a way for AI-powered security to become a “trusted sidekick for every maintainer, not just those who can afford expensive security teams.”

The Skeptics Have a Point

That is the optimistic read, and it is compelling. If Mythos Preview can not only find flaws but also suggest fixes (and early evidence suggests it can, at least sometimes), then the tool could genuinely lighten the load on maintainers rather than adding to it.

But the skeptics raise a fair point. $4 million is meaningful for individual projects, but it is a drop in the bucket compared to the scale of the problem. The open-source ecosystem maintains millions of software packages across every programming language. Maintainers are already dealing with:

Rising volumes of AI-generated code contributions

Automated security scans of questionable quality

Increasingly sophisticated supply chain attacks

Adding a firehose of legitimate, serious bug reports to that workload, without a matching investment in the people who do the fixing, risks creating an unfunded mandate dressed up as a gift.

The Sustainability Question

The deeper question is whether this lasts. Anthropic and its partners are providing tools and credits, but credits expire. Donations get spent. What happens when the initial funding runs out but the bugs keep coming? The open-source community has been burned before by corporate initiatives that arrive with fanfare and disappear when the press cycle moves on.

For what it is worth, I think the Glasswing approach is better than the alternative, which is doing nothing while offensive capabilities spread. But “better than nothing” is a low bar for an initiative backed by up to $100 million in credits and some of the world’s largest technology companies. The real test will be whether this becomes a sustained partnership or a one-time gesture.

🔐 What Can You Do Right Now?

You might be reading all of this and thinking, “Cool, but what does this mean for me?” Fair question. You do not need to be a cybersecurity expert to take steps that make a real difference. Most of the advice here is not new, but Project Glasswing just made it a lot more urgent.

Keep Your Software Updated

This is the single most important thing you can do. When security teams find and fix flaws (like the ones Mythos Preview uncovered), those fixes reach you through software updates. Every time you hit “remind me later” on an update notification, you are leaving a known flaw wide open.

Turn on automatic updates for your operating system, browser, and apps wherever possible.

Don’t ignore update prompts on your phone, laptop, or tablet. Those updates frequently contain security patches.

Restart when asked. Many updates do not take effect until you restart the device.

Use Strong, Unique Passwords

If an attacker exploits a flaw in a service you use, the first thing they go after is your login credentials. Strong passwords limit the damage.

Use a password manager like Bitwarden, 1Password, or the one built into your browser. Let it generate and store long, random passwords for you.

Never reuse passwords across different accounts. If one gets compromised, reused passwords give attackers a free pass to everything else.
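To put numbers on "long, random", here is a small Python sketch using the standard library's `secrets` module, which draws from the operating system's cryptographically secure random source. It is not how any particular password manager works internally. It simply assumes each character is picked uniformly from the 94 printable, non-space ASCII characters, so every character adds log2(94), roughly 6.55 bits, of entropy.

```python
import math
import secrets
import string

# 52 letters + 10 digits + 32 punctuation marks = 94 symbols
ALPHABET = string.ascii_letters + string.digits + string.punctuation

def generate_password(length: int = 20) -> str:
    """Pick each character independently and uniformly with a CSPRNG."""
    return "".join(secrets.choice(ALPHABET) for _ in range(length))

def entropy_bits(length: int, alphabet_size: int = len(ALPHABET)) -> float:
    """Each uniformly random symbol contributes log2(alphabet_size) bits."""
    return length * math.log2(alphabet_size)
```

A 20-character password from this alphabet carries about 131 bits of entropy and an 8-character one about 52, and every extra bit doubles an attacker's guessing work. Those figures only hold for truly random passwords; a human-chosen "P@ssw0rd2026" is far weaker than its length suggests.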

Turn On Multi-Factor Authentication (MFA)

Multi-factor authentication adds a second step when you log in, usually a code sent to your phone or generated by an app. Even if someone steals your password, they cannot get in without that second factor.

Enable MFA on every account that offers it, especially email, banking, and social media.

Use an authenticator app (like Google Authenticator or Authy) rather than SMS codes when you have the option. Text messages can be intercepted; authenticator apps are much harder to compromise.
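Why are authenticator apps harder to intercept than texts? Most of them implement TOTP (time-based one-time passwords, RFC 6238). Your phone and the service share a secret once, at setup, and afterward each side computes the current code locally from that secret and the clock, so no code ever travels over the phone network. Here is a minimal Python sketch of the algorithm using only the standard library. Real apps store the secret base32-encoded and tolerate clock drift, and this version skips both.

```python
import hashlib
import hmac
import struct

def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    message = struct.pack(">Q", counter)                 # 8-byte big-endian counter
    digest = hmac.new(key, message, hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                           # "dynamic truncation" offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def totp(key: bytes, unix_time: int, step: int = 30, digits: int = 6) -> str:
    """Time-based variant (RFC 6238): the counter is the number of elapsed time steps."""
    return hotp(key, unix_time // step, digits)
```

With the RFC's test secret `b"12345678901234567890"`, `totp(secret, 59, digits=8)` returns `"94287082"`, matching the published test vector. Someone who steals your password still needs the secret stored on your phone to produce the next code.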

Be Skeptical of Links and Attachments

AI is making phishing attacks (fake emails and messages designed to trick you into clicking something malicious) more convincing than ever. The same AI capabilities powering Glasswing can also be used to craft highly personalized scams.

Pause before you click. If an email or message feels urgent, unexpected, or slightly off, verify it through a different channel before taking action.

Check the sender’s address carefully. Phishing emails often come from addresses that look almost right but are slightly misspelled.

When in doubt, go direct. Instead of clicking a link in an email, open your browser and navigate to the website yourself.
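Spotting a lookalike address is the kind of check a computer can help with. Here is a toy Python heuristic that assumes a hypothetical allow-list of domains you trust. It measures the edit distance (the number of single-character insertions, deletions, or substitutions) between the sender's domain and each trusted one, and flags near misses. Real mail filters combine many more signals, so treat this as an illustration, not protection.

```python
from typing import Optional

# Hypothetical allow-list: the domains you actually do business with.
TRUSTED_DOMAINS = ["paypal.com", "examplebank.com"]

def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance, computed one row at a time."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # deletion
                current[j - 1] + 1,                    # insertion
                previous[j - 1] + (char_a != char_b),  # substitution (free if equal)
            ))
        previous = current
    return previous[-1]

def lookalike_of(sender: str, trusted=TRUSTED_DOMAINS,
                 max_distance: int = 2) -> Optional[str]:
    """Return the trusted domain that the sender's domain imitates, or None."""
    domain = sender.rsplit("@", 1)[-1].strip().lower()
    if domain in trusted:
        return None  # the genuine domain
    for real in trusted:
        if edit_distance(domain, real) <= max_distance:
            return real
    return None
```

The threshold is a trade-off. `max_distance=2` catches "rn" posing as "m" (as in `exarnplebank.com`), but a larger value would start flagging unrelated short domains. Lookalikes built from non-Latin characters that merely resemble Latin letters need separate handling.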

Pay Attention to the Software You Rely On

Project Glasswing is specifically focused on the software that powers critical systems, and a lot of that software runs quietly in the background of your daily life. You do not need to understand the technical details, but it helps to stay aware.

Follow the tech companies and services you depend on. When they announce security updates, take them seriously.

Be cautious with browser extensions and third-party apps. Every piece of software you install is a potential entry point. Stick to well-known, actively maintained tools and remove anything you no longer use.

The Bottom Line

You cannot control whether a 27-year-old bug exists in an operating system. But you can control whether your devices are updated, your passwords are strong, and your accounts have an extra layer of protection. In a world where AI can find flaws faster than ever before, good security habits are not optional. They are your first line of defense.

Conclusion

Project Glasswing is not just a cybersecurity initiative. It is a signal that the relationship between AI and software security has permanently changed.

For years, finding critical vulnerabilities required elite human talent, expensive tools, and enormous patience. Mythos Preview has compressed that process into something a model can do overnight, autonomously, across every major operating system and browser on the planet. The bugs it found were not obscure edge cases. They were decades-old flaws hiding in software that billions of people trust every day, flaws that survived millions of automated tests and countless human code reviews. That reality is not going away, and pretending otherwise is not a strategy.

The good news is that the same capabilities that make this moment scary also make it fixable. If defenders adopt these tools aggressively, coordinate across organizations, and invest seriously in the open-source ecosystem that holds everything together, there is a real path to a more secure software landscape. Project Glasswing, for all its imperfections, is the most ambitious attempt yet to walk that path.

The less comfortable truth is that this is a race, and it has already started. Mythos Preview exists. Models with similar capabilities will follow. The question is not whether AI will reshape cybersecurity; it is whether defenders will be ready when it does. For everyday users, that means the software you rely on is about to get a lot more scrutiny, and hopefully a lot more secure. For the security community, it means the rules just changed, and the clock is ticking.

Every system has cracks. The difference now is that we have a tool that can find them before the bad guys do. What matters is what we do with that head start.

Stay curious, stay patched, and for the love of all things encrypted, update your software.

— Glitch 🔓

Sources / Citations

Anthropic. (2026, April 7). Project Glasswing: Securing critical software for the AI era. Anthropic. https://www.anthropic.com/glasswing

Carlini, N., Cheng, N., Lucas, K., Moore, M., Nasr, M., Prabhushankar, V., Xiao, W., et al. (2026, April 7). Assessing Claude Mythos Preview’s cybersecurity capabilities. Anthropic Frontier Red Team. https://red.anthropic.com/2026/mythos-preview/

Tung, L. (2026, April 8). Anthropic Launches Project Glasswing to Use AI to Find and Fix Critical Software Vulnerabilities. Infosecurity Magazine. https://www.infosecurity-magazine.com/news/anthropic-launch-project-glasswing/

Brodkin, J. (2026, April 9). Anthropic Project Glasswing: Mythos Preview gets limited release. NBC News. https://www.nbcnews.com/tech/security/anthropic-project-glasswing-mythos-preview-claude-gets-limited-release-rcna267234

Vaughan-Nichols, S. J. (2026, April 10). Project Glasswing and open source: The good, bad, and ugly. The Register. https://www.theregister.com/2026/04/10/project_glasswing/

Zemlin, J. (2026, April 7). Introducing Project Glasswing: Giving Maintainers Advanced AI to Secure the World’s Code. The Linux Foundation. https://www.linuxfoundation.org/blog/project-glasswing-gives-maintainers-advanced-ai-to-secure-open-source

Kost, E. (2026, April 11). Project Glasswing: The 10 Consequences Nobody’s Writing About Yet. Forrester. https://www.forrester.com/blogs/project-glasswing-the-10-consequences-nobodys-writing-about-yet/

Domanico, D. (2026, April 7). Tech giants launch AI-powered ‘Project Glasswing’ to identify critical software vulnerabilities. CyberScoop. https://cyberscoop.com/project-glasswing-anthropic-ai-open-source-software-vulnerabilities/

Take Your Education Further

The AI Security Paradox - Explores the broader tension between AI capabilities and security risks, providing essential context for understanding why initiatives like Glasswing are necessary.

One Forgotten Checkbox Just Leaked Anthropic’s Most Powerful AI Model - The story behind how Mythos Preview was first discovered in an unsecured database before the official announcement.

Beware AI Extensions: 5 Betrayals - A practical look at how AI tools can introduce security vulnerabilities, and what users can do to protect themselves.

Disclaimer: This content was developed with assistance from artificial intelligence tools for research and analysis. Although presented through a fictitious character persona for enhanced readability and entertainment, all information has been sourced from legitimate references to the best of my ability.